Causation vs. Correlation

YouTube ... Quora ...Google search ...Google News ...Bing News

- Causation vs. Correlation ... Autocorrelation ...Convolution vs. Cross-Correlation (Autocorrelation)

- Perspective ... Context ... In-Context Learning (ICL) ... Transfer Learning ... Out-of-Distribution (OOD) Generalization

- Math for Intelligence ... Finding Paul Revere ... Social Network Analysis (SNA) ... Dot Product ... Kernel Trick

- Strategy & Tactics ... Project Management ... Best Practices ... Checklists ... Project Check-in ... Evaluation ... Measures

- Artificial General Intelligence (AGI) to Singularity ... Curious Reasoning ... Emergence ... Moonshots ... Explainable AI ... Automated Learning

- Causal Inference Animated Plots | Nick Huntington-Klein

- Causal Inference | Causal-Inference.org

- The Book of Why: The New Science of Cause and Effect | Judea Pearl

- Correlation is not causation | Anthony Figueroa - Medium ... Why the confusion of these concepts has profound implications, from healthcare to business management

Correlation does not imply causation

One benefit of using a causal approach in AI is that it can improve trust in AI systems. Causal AI aims to be transparent and accountable for its actions: the reasoning behind its decisions can be explained to, and understood by, humans because the AI follows a deterministic approach based on a causal graph that learns and updates in real time. Purely predictive models offer no such assurance, because a signal's predictive power does not by itself imply that the signal is related to, or explains, the phenomenon being predicted. This distinction matters for Machine Learning (ML) because many of the strongest signals these algorithms pick up in their training data are not actually related to the thing being measured. A Reminder That Machine Learning Is About Correlations Not Causation | Kalev Leetaru - Forbes

- Causation means that changes in one variable bring about changes in the other; there is a cause-and-effect relationship between variables. The two variables are correlated with each other and there is also a causal link between them.

- Correlation means there is a relationship or pattern between the values of two variables. A correlation is a statistical indicator of the relationship between variables. These variables change together: they covary. But this covariation isn’t necessarily due to a direct or indirect causal link.

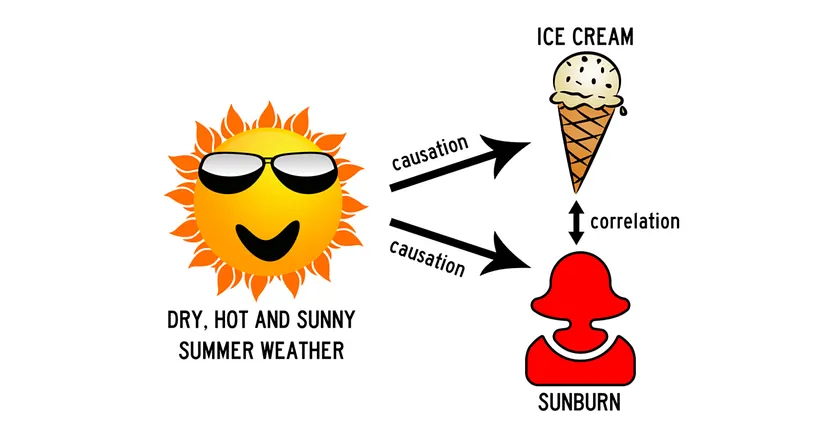

Ice Cream and Sunburn

Correlation describes an association between variables, while causation describes a cause-and-effect relationship between them. For example, there is a strong positive correlation between ice cream sales and sunburn rates: when ice cream sales rise, so do sunburns. However, eating ice cream does not cause sunburn. Sunny weather drives both, increasing ice cream sales and exposing more people to the sun. A variable like this, which influences two others and creates a correlation between them, is called a confounding variable.
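A small simulation makes the sunny-weather explanation concrete. In the sketch below, all numbers are invented for illustration: hours of sunshine drive both ice cream sales and sunburns, and neither affects the other. The raw correlation between sales and sunburns comes out strong, but after removing the part of each variable that a straight line in sunshine explains (a partial correlation), almost nothing is left.

```python
import random

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length lists."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    return cov / (vx * vy) ** 0.5

def residuals(ys, xs):
    """Residuals of an ordinary least-squares fit y = a + b * x."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    b = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
    a = my - b * mx
    return [y - (a + b * x) for x, y in zip(xs, ys)]

rng = random.Random(0)
sun = [rng.uniform(0, 10) for _ in range(5000)]           # hypothetical hours of sunshine
ice_cream = [3.0 * s + rng.gauss(0, 2) for s in sun]      # sales depend only on sunshine
sunburn = [1.5 * s + rng.gauss(0, 2) for s in sun]        # sunburns depend only on sunshine

raw = pearson(ice_cream, sunburn)
partial = pearson(residuals(ice_cream, sun), residuals(sunburn, sun))
print(f"raw correlation:          {raw:.2f}")
print(f"controlling for sunshine: {partial:.2f}")
```

Regressing out the confounder and correlating the residuals is the simplest form of "controlling for" a variable; it only works here because the simulated relationships are linear.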

Causal AI - Cause & Effect

Causal AI is an artificial intelligence system that can explain cause and effect. Causal AI technology is used by organizations to help explain decision making and the causes for a decision. Wikipedia

Root Cause Analysis (RCA)

Root cause analysis (RCA) is the process of discovering the root causes of problems in order to identify appropriate solutions. RCA assumes that it is much more effective to systematically prevent and solve underlying issues than to treat symptoms ad hoc and put out fires. Root Cause Analysis Explained: Definition, Examples, and Methods | Tableau
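As a toy illustration only, one mechanical step of RCA can be sketched as tracing an observed symptom back through a cause-and-effect graph to the upstream causes that have no recorded causes of their own. The sketch assumes the graph is already known and stored in a hypothetical `causes_of` mapping (effect to list of direct causes); in practice, establishing those links is the hard part of RCA.

```python
from collections import deque

def root_causes(causes_of, symptom):
    """Breadth-first walk upstream from `symptom`; return ancestors with no known causes."""
    seen, roots = {symptom}, set()
    queue = deque([symptom])
    while queue:
        node = queue.popleft()
        parents = causes_of.get(node, [])
        if not parents and node != symptom:
            roots.add(node)                      # nothing further upstream: a root cause
        for parent in parents:
            if parent not in seen:
                seen.add(parent)
                queue.append(parent)
    return roots

# Hypothetical incident graph: effect -> list of direct causes
causes_of = {
    "late deliveries": ["truck breakdowns", "warehouse backlog"],
    "truck breakdowns": ["skipped maintenance"],
    "warehouse backlog": ["staff shortage", "truck breakdowns"],
    "skipped maintenance": ["budget cuts"],
}
print(sorted(root_causes(causes_of, "late deliveries")))
```

Fixing "truck breakdowns" directly would be treating a symptom; the walk points past it to "budget cuts" and "staff shortage".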

Multivariate Additive Noise Model (MANM)

MANM is a model for general causality that identifies multiple causal connections without requiring time-sequence data. "Uniquely, the model can identify multiple, hierarchical causal factors. It works even if data with time sequencing is not available. The model creates significant opportunities to analyse complex phenomena in areas such as economics, disease outbreaks, climate change and conservation," says Prof Tshilidzi Marwala, a professor of artificial intelligence and a global AI and economics expert at the University of Johannesburg, South Africa. "The model is especially useful at the regional, national or global level where no controlled or natural experiments are possible," adds Marwala.

"MANM is based on Directed Acyclic Graph (DAG), which can identify a multi-nodal causal structure. MANM can estimate every possible causal direction in complex feature sets, with no missing or wrong directions." The use of DAGs is a key reason MANM significantly outperforms models previously developed by others, which were based on Independent Component Analysis (ICA), such as Linear Non-Gaussian Acyclic Model (ICA-LiNGAM), Greedy DAG Search (GDS) and Regression with Subsequent Independence Test (RESIT), he says.

"Another key feature of MANM is the proposed Causal Influence Factor (CIF), for the successful discovery of causal directions in the multivariate system. The CIF score provides a reliable indicator of the quality of the causal inference, which enables avoiding most of the missing or wrong directions in the resulting causal structure," concludes Chakraverty.

Where an existing dataset is available, MANM now makes it possible to identify multiple multi-nodal causal structures within the set. As an example, MANM can identify the multiple causes of persistent household debt for low, middle and high-income households in a region. Artificial intelligence trained to analyze causation | University of Johannesburg
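MANM's own algorithm is not described here, but the additive noise idea it builds on can be sketched for two variables: if Y = f(X) + N with noise N independent of X, then regressing Y on X leaves residuals that are independent of X, while regressing in the wrong direction generally does not. The sketch below is a simplified bivariate check under stated assumptions: a linear f, uniform (non-Gaussian) noise, and a crude squared-value correlation standing in for a proper independence test such as HSIC. It is not MANM and does not compute the CIF score.

```python
import random

def pearson(xs, ys):
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    return cov / (sum((x - mx) ** 2 for x in xs) * sum((y - my) ** 2 for y in ys)) ** 0.5

def ols_residuals(ys, xs):
    """Residuals of the least-squares line y = a + b * x."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    b = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
    a = my - b * mx
    return [y - (a + b * x) for x, y in zip(xs, ys)]

def dependence(xs, res):
    """Crude dependence score |corr(x^2, r^2)|: a stand-in for a real
    independence test such as HSIC, and blind to many kinds of dependence."""
    return abs(pearson([x * x for x in xs], [r * r for r in res]))

def anm_direction(x, y):
    """Prefer the direction whose residuals look independent of the regressor."""
    score_xy = dependence(x, ols_residuals(y, x))  # hypothesis X -> Y
    score_yx = dependence(y, ols_residuals(x, y))  # hypothesis Y -> X
    return ("X -> Y" if score_xy < score_yx else "Y -> X"), score_xy, score_yx

rng = random.Random(1)
x = [rng.uniform(-1, 1) for _ in range(5000)]
y = [xi + rng.uniform(-1, 1) for xi in x]   # ground truth: X causes Y, uniform noise
direction, s_xy, s_yx = anm_direction(x, y)
print(direction, round(s_xy, 3), round(s_yx, 3))
```

With Gaussian noise in a linear model both directions fit equally well, which is why methods in this family rely on non-Gaussianity or nonlinearity.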

Causal Reasoning Approaches

At its core, causal AI is all about understanding the underlying relationships between variables in data and using this information to make predictions and decisions. There are two main approaches to causal AI: the potential outcomes framework and causal graph models. The best approach to causal reasoning in AI depends on the specific problem being addressed. In some cases, statistical methods may be sufficient. In other cases, a more complex approach, such as a causal graph model, may be required. Here are some of the advantages and disadvantages of the different approaches to causal reasoning:

Advantages:

- Statistical methods: Statistical methods are relatively easy to implement and can be used to analyze large datasets.

- Causal graph models: When the assumed graph is correct, causal graph models can provide more accurate and reliable predictions about the effects of interventions than purely statistical methods.

- Natural experiments: Natural experiments are a powerful tool for estimating causal effects from observational data.

- Instrumental variables: Instrumental variables can be used to estimate causal effects in the presence of confounding factors, including unobserved ones.

- Regression discontinuity designs: Regression discontinuity designs can be used to estimate causal effects in the presence of confounding factors.

- Causal Bayesian networks: Causal Bayesian networks can represent complex causal relationships.

Disadvantages:

- Statistical methods: Statistical methods can be susceptible to confounding factors.

- Causal graph models: Causal graph models can be complex and difficult to learn.

- Natural experiments: Natural experiments are rare and may not be generalizable to other populations.

- Instrumental variables: Valid instrumental variables can be difficult to find, and a weak or invalid instrument can produce biased estimates.

- Regression discontinuity designs: Regression discontinuity designs require a specific type of data that may not be available in all cases.

- Causal Bayesian networks: Causal Bayesian networks can be difficult to learn and interpret.

Statistical Method

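The potential outcomes framework (also known as the Rubin causal model) is a statistical approach that defines the causal effect of a treatment on a unit as the difference between the outcome the unit would have with the treatment and the outcome it would have without it. Because only one of these potential outcomes can ever be observed for any unit, effects are estimated across groups, most reliably with randomized controlled trials.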
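The framework is easiest to see in a simulation, where both potential outcomes can be generated for every unit. The sketch below uses an invented population with a true average treatment effect (ATE) of +2 and compares two assignment rules: a coin flip, as in a randomized controlled trial, and self-selection in which less healthy units opt in. Only the randomized difference in means recovers the true effect.

```python
import random
from statistics import mean

rng = random.Random(42)
n = 20_000

# Hypothetical population: each unit has BOTH potential outcomes, y0 and y1.
baseline = [rng.gauss(0, 1) for _ in range(n)]      # underlying health
y0 = [b + rng.gauss(0, 1) for b in baseline]         # outcome if untreated
y1 = [y + 2.0 for y in y0]                           # outcome if treated: true effect is +2
true_ate = mean(t - c for c, t in zip(y0, y1))       # knowable only in a simulation

def estimate_ate(treated):
    """Difference in observed means; each unit reveals only one potential outcome."""
    observed_treated = [y1[i] for i in range(n) if treated[i]]
    observed_control = [y0[i] for i in range(n) if not treated[i]]
    return mean(observed_treated) - mean(observed_control)

randomized = [rng.random() < 0.5 for _ in range(n)]   # coin-flip assignment, as in an RCT
self_selected = [b < 0 for b in baseline]             # less healthy units opt in to treatment

print(f"true ATE:         {true_ate:.2f}")
print(f"randomized trial: {estimate_ate(randomized):.2f}")
print(f"self-selected:    {estimate_ate(self_selected):.2f}")
```

Under self-selection the treated group starts out less healthy, so the naive comparison badly understates the benefit.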

Causal Graph Model

Causal graph models represent cause-and-effect relationships between variables as a graph. These models can be used to make predictions, test hypotheses, and estimate the effects of interventions. The nodes in the graph represent variables, and the edges represent causal relationships between variables. For example, a causal graph model could be used to predict the effect of a new drug on a patient's health. The model would use the causal relationships between the drug, the patient's health, and other factors to make the prediction. Causal graph models are more complex than statistical methods, but when the graph is correct they can provide more accurate and reliable predictions about interventions. As a result, causal graph models are becoming increasingly popular in AI.
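To make the drug example concrete, the sketch below uses a hypothetical three-node graph (Severity → Drug, Severity → Recovery, Drug → Recovery) with invented probabilities. It compares the observational quantity P(recover | drug), which conditions on who happened to receive the drug, with the interventional quantity P(recover | do(drug)), computed with the back-door adjustment formula. The adjustment averages over severity using its population distribution instead of its distribution among treated patients.

```python
# Hypothetical causal graph: Severity -> Drug, Severity -> Recovery, Drug -> Recovery
p_severe = {1: 0.5, 0: 0.5}                 # P(S = s)
p_drug_given_s = {1: 0.8, 0: 0.2}           # P(D = 1 | S = s): sicker patients get the drug more often
p_recover = {(1, 0): 0.9, (0, 0): 0.8,      # P(R = 1 | D = d, S = s), keyed by (d, s)
             (1, 1): 0.5, (0, 1): 0.3}

def p_d(d, s):
    """P(D = d | S = s)."""
    p1 = p_drug_given_s[s]
    return p1 if d == 1 else 1 - p1

def observational(d):
    """P(R = 1 | D = d): severity is weighted by its distribution among patients with D = d."""
    joint = {s: p_d(d, s) * p_severe[s] for s in (0, 1)}
    total = sum(joint.values())
    return sum(p_recover[(d, s)] * joint[s] / total for s in (0, 1))

def interventional(d):
    """P(R = 1 | do(D = d)) via back-door adjustment over the confounder S."""
    return sum(p_recover[(d, s)] * p_severe[s] for s in (0, 1))

print("observed difference:", round(observational(1) - observational(0), 2))
print("causal effect:      ", round(interventional(1) - interventional(0), 2))
```

With these numbers the drug looks harmful in a simple comparison (0.58 vs. 0.70 recovery), yet its causal effect is positive (0.70 vs. 0.55), because sicker patients were more likely to receive it.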

Here are some examples of how causal methods are used in AI:

- Healthcare: Causal methods are used to develop personalized treatments for patients. For example, a doctor might use causal methods to identify the factors that are most likely to contribute to a patient's illness. The doctor could then use this information to develop a treatment plan that is tailored to the patient's individual needs.

- Finance: Causal methods are used to develop financial models. For example, a financial analyst might use causal methods to identify the factors that are most likely to influence the price of a stock. The analyst could then use this information to make predictions about the stock's future price.

- Marketing: Causal methods are used to develop marketing campaigns. For example, a marketer might use causal methods to identify the factors that are most likely to influence a customer's decision to buy a product. The marketer could then use this information to target their marketing campaign to the customers who are most likely to be interested in the product.

Natural Experiments

Natural experiments are situations in which an intervention is applied to a group of people by circumstances outside the researchers' control, such as a policy change or a lottery, in a way that makes assignment to the intervention as good as random. This allows researchers to estimate the causal effect of the intervention with much less concern about confounding factors.

Instrumental Variables

Instrumental variables are variables that influence the treatment variable, affect the outcome only through the treatment, and are unrelated to unobserved confounding factors. Because of these properties, instrumental variables can be used to estimate the causal effect of the treatment variable even in the presence of confounding factors.
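A minimal sketch with simulated data, all coefficients invented: an unobserved confounder `u` drives both the treatment `t` and the outcome `y`, while the instrument `z` shifts `t` and is independent of `u`. The ordinary regression slope of `y` on `t` is biased by the confounder; the simple instrumental-variable (Wald) estimate, cov(z, y) / cov(z, t), uses only the variation in `t` that comes from `z` and recovers the true effect of 1.5.

```python
import random

def cov(xs, ys):
    """Sample covariance of two equal-length lists."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    return sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / (n - 1)

rng = random.Random(7)
n = 50_000
u = [rng.gauss(0, 1) for _ in range(n)]   # unobserved confounder
z = [rng.gauss(0, 1) for _ in range(n)]   # instrument: independent of u
t = [0.8 * zi + ui + rng.gauss(0, 1) for zi, ui in zip(z, u)]        # treatment depends on z and u
y = [1.5 * ti + 2.0 * ui + rng.gauss(0, 1) for ti, ui in zip(t, u)]  # true effect of t on y is 1.5

naive = cov(t, y) / cov(t, t)   # OLS slope: biased because u drives both t and y
iv = cov(z, y) / cov(z, t)      # IV (Wald) estimate
print(f"naive OLS: {naive:.2f}, IV: {iv:.2f}")
```

The estimate is only as good as the assumptions built into the simulation: if `z` also affected `y` directly, or were correlated with `u`, the IV estimate would be biased too.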

Regression Discontinuity Designs

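Regression discontinuity designs are a type of quasi-experiment in which the treatment is assigned according to whether a continuous covariate (the running variable, such as a test score) falls above or below a cutoff value. Units just above and just below the cutoff are otherwise similar, so comparing them allows researchers to estimate the causal effect of the treatment near the cutoff, even in the presence of confounding factors.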
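A minimal sketch of a sharp regression discontinuity on simulated data, with the cutoff, bandwidth, and effect size chosen arbitrarily for illustration. The running variable raises the outcome on its own, so a naive treated-versus-untreated comparison is inflated; fitting a separate line on each side of the cutoff within a bandwidth and comparing their values at the cutoff isolates the jump caused by treatment.

```python
import random

def fit_line(xs, ys):
    """Least-squares intercept and slope for y = a + b * x."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    b = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
    return my - b * mx, b

def rd_estimate(scores, outcomes, cutoff, bandwidth):
    """Sharp RD: local linear fit on each side; scores are centred at the cutoff,
    so each intercept is that side's fitted value at the cutoff."""
    left = [(s - cutoff, y) for s, y in zip(scores, outcomes) if cutoff - bandwidth <= s < cutoff]
    right = [(s - cutoff, y) for s, y in zip(scores, outcomes) if cutoff <= s <= cutoff + bandwidth]
    a_left, _ = fit_line(*zip(*left))
    a_right, _ = fit_line(*zip(*right))
    return a_right - a_left

rng = random.Random(3)
scores = [rng.uniform(0, 100) for _ in range(20_000)]   # hypothetical running variable
treated = [s >= 50 for s in scores]                     # treatment assigned at the cutoff
# Outcome rises with the score itself (a confounder) plus a true treatment effect of 3.
outcomes = [0.05 * s + 3.0 * tr + rng.gauss(0, 1) for s, tr in zip(scores, treated)]

n_treated = sum(treated)
naive = (sum(o for o, tr in zip(outcomes, treated) if tr) / n_treated
         - sum(o for o, tr in zip(outcomes, treated) if not tr) / (len(treated) - n_treated))
rd = rd_estimate(scores, outcomes, cutoff=50, bandwidth=10)
print(f"naive difference: {naive:.2f}, RD estimate: {rd:.2f}")
```

The bandwidth trades bias for variance: a narrower window relies less on the linear fit being right but uses fewer observations.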

Causal Bayesian networks

Causal Bayesian networks are a type of statistical model that can be used to represent causal relationships between variables. Causal Bayesian networks can be used to make predictions about the effects of interventions, and to identify the factors that are most likely to influence an outcome.
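One thing a causal Bayesian network adds over an ordinary Bayesian network is the ability to answer intervention queries by "graph surgery": the intervened variable's own mechanism is replaced by a fixed value. The sketch below uses the classic rain/sprinkler/wet-grass network with made-up probabilities and exact enumeration. Observing that the sprinkler is on makes rain much less likely, because in this model rain makes the sprinkler less likely to be switched on; forcing the sprinkler on leaves the probability of rain at its prior.

```python
from itertools import product

# Hypothetical CBN: Rain -> Sprinkler, Rain -> WetGrass, Sprinkler -> WetGrass
P_RAIN = 0.2
P_SPRINKLER = {True: 0.01, False: 0.4}             # P(sprinkler on | rain)
P_WET = {(True, True): 0.99, (True, False): 0.8,   # P(wet | sprinkler, rain)
         (False, True): 0.9, (False, False): 0.0}

def bern(p, value):
    """Probability that a Bernoulli(p) variable takes `value`."""
    return p if value else 1 - p

def joint(rain, sprinkler, wet, do_sprinkler=None):
    """Factorised joint probability. Under do(sprinkler = v) the sprinkler's
    mechanism is replaced by a point mass at v (graph surgery)."""
    p = bern(P_RAIN, rain)
    if do_sprinkler is None:
        p *= bern(P_SPRINKLER[rain], sprinkler)
    else:
        p *= 1.0 if sprinkler == do_sprinkler else 0.0
    return p * bern(P_WET[(sprinkler, rain)], wet)

def prob_rain(sprinkler_on, intervene):
    """P(rain | sprinkler = v) if not intervening, else P(rain | do(sprinkler = v))."""
    do = sprinkler_on if intervene else None
    worlds = [w for w in product([True, False], repeat=3) if w[1] == sprinkler_on]
    num = sum(joint(*w, do_sprinkler=do) for w in worlds if w[0])
    den = sum(joint(*w, do_sprinkler=do) for w in worlds)
    return num / den

print("P(rain | sprinkler on)     =", round(prob_rain(True, intervene=False), 4))
print("P(rain | do(sprinkler on)) =", round(prob_rain(True, intervene=True), 4))
```

Exact enumeration is exponential in the number of variables; it is used here only because the network has three binary nodes.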

Counterfactual Thinking

Counterfactual explanations are a type of explanation that provides information about what would have to be different in order for a different outcome to occur. In the context of machine learning, counterfactual explanations can be used to explain why a particular prediction was made, or to suggest how a prediction could be changed.

There are a number of different methods for generating counterfactual explanations. One common approach uses gradient descent, a method for finding the minimum of a function. Here the function being minimized typically combines two terms: a loss that measures how far the model's prediction for a candidate data point is from the desired outcome, and a distance penalty that keeps the candidate close to the original data point. Gradient descent then searches for feature values that produce the desired outcome with as small a change as possible.
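A minimal sketch of the gradient-based approach, assuming a hypothetical logistic-regression loan model with fixed, made-up weights over two features (income and existing debt, in arbitrary units). The objective has two terms: `lam * (f(x') - target)**2` pulls the prediction toward approval, and a squared Euclidean distance keeps `x'` near the applicant. Published methods often use other distances, such as a weighted L1 norm. Gradients are written out by hand so the example needs only the standard library.

```python
import math

# Hypothetical trained loan model: logistic regression with fixed weights.
WEIGHTS = [1.5, -0.5]   # [income, existing debt], arbitrary units
BIAS = -4.0

def predict(x):
    """Approval probability from the hypothetical model."""
    z = sum(w * xi for w, xi in zip(WEIGHTS, x)) + BIAS
    return 1.0 / (1.0 + math.exp(-z))

def counterfactual(x, target=1.0, lam=20.0, lr=0.05, steps=2000):
    """Gradient descent on lam * (f(x') - target)^2 + ||x' - x||^2, starting at x."""
    cf = list(x)
    for _ in range(steps):
        f = predict(cf)
        # d/dx' of lam * (f - target)^2 is 2 * lam * (f - target) * f * (1 - f) * w
        scale = 2.0 * lam * (f - target) * f * (1.0 - f)
        grad = [scale * w + 2.0 * (c - xi) for w, c, xi in zip(WEIGHTS, cf, x)]
        cf = [c - lr * g for c, g in zip(cf, grad)]
    return cf

applicant = [2.0, 2.0]
cf = counterfactual(applicant)
print(round(predict(applicant), 3), [round(v, 2) for v in cf], round(predict(cf), 3))
```

The weight `lam` controls the trade-off: larger values push the prediction further toward approval at the cost of larger suggested changes.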

Another common approach for generating counterfactual explanations is to use a technique called random perturbation. Random perturbation involves randomly changing the feature values of a data point, and then seeing how the prediction changes. This process is repeated until a change is found that results in the desired outcome.
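The random-perturbation approach can be sketched against a hypothetical logistic-regression loan model whose weights are invented for illustration. This version goes slightly beyond stopping at the first success: it keeps sampling Gaussian perturbations and returns the closest one that flips the decision, since the first hit is often a needlessly large change.

```python
import math
import random

# Hypothetical loan model: logistic regression with fixed, made-up weights.
WEIGHTS = [1.5, -0.5]   # [income, existing debt], arbitrary units
BIAS = -4.0

def predict(x):
    z = sum(w * xi for w, xi in zip(WEIGHTS, x)) + BIAS
    return 1.0 / (1.0 + math.exp(-z))

def random_counterfactual(x, threshold=0.5, scale=1.0, tries=5000, seed=0):
    """Randomly perturb x; return the closest perturbation that reaches the threshold."""
    rng = random.Random(seed)
    best, best_dist = None, math.inf
    for _ in range(tries):
        candidate = [xi + rng.gauss(0, scale) for xi in x]
        if predict(candidate) >= threshold:          # desired outcome reached
            dist = math.dist(candidate, x)
            if dist < best_dist:
                best, best_dist = candidate, dist
    return best, best_dist

applicant = [2.0, 2.0]
cf, dist = random_counterfactual(applicant)
print([round(v, 2) for v in cf], round(dist, 2), round(predict(cf), 3))
```

Random search needs no gradients, so it also works for black-box models, but the number of samples required grows quickly with the number of features.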

Counterfactual explanations can be a valuable tool for understanding and improving machine learning models. They can help users to understand why a particular prediction was made, and to identify areas where the model could be improved. Counterfactual explanations can also be used to help users to interact with machine learning models in a more informed way.

Here are some examples of how counterfactual explanations can be used:

- A bank might use counterfactual explanations to help customers understand why their loan application was rejected. The bank could provide the customer with a list of changes that they could make to their application in order to increase their chances of approval.

- A healthcare provider might use counterfactual explanations to help patients understand why they were diagnosed with a particular condition. The healthcare provider could provide the patient with a list of changes that they could make to their lifestyle in order to reduce their risk of developing the condition in the future.

- A marketing company might use counterfactual explanations to help them target their advertising more effectively. The company could use counterfactual explanations to identify the features of their customers that are most likely to influence their purchasing decisions.

Counterfactual explanations are a powerful tool that can be used to improve the usability and fairness of machine learning models. As machine learning models become more widely used, counterfactual explanations will become increasingly important.

CausaLens

- CausaLens ... focusing on causality

While traditional machine learning focuses on correlations, Causal AI identifies cause-and-effect relationships, enabling companies to make better-informed decisions and predictions.

Causal AI examples:

- Use causal AI to improve logistics and supply chain operations. By analyzing the causal relationships between different factors in the supply chain, such as demand, inventory, and transportation, the Organization could identify the most critical drivers of inefficiencies and bottlenecks, and optimize the entire system for greater efficiency and cost savings.

- Use causal AI to improve equipment maintenance and reduce downtime. By analyzing the causal factors that contribute to equipment failures or malfunctions, such as usage patterns, environmental conditions, and maintenance history, the Organization could proactively predict and prevent issues before they occur, reducing costly downtime and increasing the overall reliability of its systems.

- Use causal AI to enhance strategic planning and decision-making. By identifying the causal relationships between different variables such as budget allocation, resource utilization, and operational effectiveness, the Organization could gain deeper insights into the factors that drive success and failure in its operations, and make more informed decisions about where to focus its resources and investments for the greatest impact.

Causality vs Retrocausality

Causality is when an earlier event causes a later event, while Retrocausality is when a later event affects an earlier one.

- Causality is the relationship between an event (the cause) and a second event (the effect), where the second event is understood as a consequence of the first. In other words, causality is the concept that an action or event will produce a certain response to the action in the form of another event.

- Retrocausality, on the other hand, is a concept of cause and effect in which an effect precedes its cause in time and so a later event affects an earlier one. In quantum physics, the distinction between cause and effect is not made at the most fundamental level and so time-symmetric systems can be viewed as causal or retrocausal.

Retrocausality

- Time ... PNT ... GPS ... Retrocausality ... Delayed Choice Quantum Eraser ... Quantum

- Retrocausality | Wikipedia

- Two-state vector formalism (TSVF) | Wikipedia

- Retrocausality in Quantum Mechanics | Stanford Encyclopedia of Philosophy

- Retrocausality Is the Key to Time Travel. What the Hell Is

Retrocausality is also known as backwards causation. In quantum mechanics, it is associated with the Double Inferential state-Vector Formalism (DIVF), later known as the two-state vector formalism (TSVF), where the present is characterized by quantum states of the past and the future taken in combination.

One frequently cited example of retrocausation is the delayed choice quantum eraser experiment, a variation of the famous double-slit experiment in which the behavior of a photon seems to be determined by measurements made on another photon in the future. In this experiment, a photon is split into two entangled photons: one passes through a double-slit apparatus, while the other is measured later. The measurement of the second photon appears to determine whether the first behaves as a wave or a particle when passing through the double-slit apparatus, even though the first photon has already passed through the apparatus before the measurement is made.

In 2022, the Nobel Prize in Physics was awarded for experimental work showing that the quantum world must break some of our fundamental intuitions about how the universe works. Many physicists agree about what’s been called “the death by experiment” of local realism. However, a growing group of experts think that we should instead abandon the assumption that present actions can’t affect past events. Called “retrocausality,” this option claims to rescue both locality and realism.

Another example of retrocausation is the Wheeler-Feynman absorber theory, developed by John Archibald Wheeler and Richard Feynman. This theory suggests that an event can be influenced by events in its future as well as its past.

The Delayed Choice Quantum Eraser, Debunked

The delayed choice quantum eraser is one of the weirdest, if not THE weirdest, experiments in quantum mechanics. It supposedly rewrites the past because the choice of a measurement changes what happened in another measurement earlier. In this video I explain why this is not what's happening. The quantum eraser isn't remotely as weird as you may have heard.