Transformer

Revision as of 10:56, 6 September 2023

YouTube ... Quora ... Google search ... Google News ... Bing News

- Large Language Model (LLM) ... Multimodal ... Foundation Models (FM) ... Generative Pre-trained ... Transformer ... GPT-4 ... GPT-5 ... Attention ... GAN ... BERT

- Natural Language Processing (NLP) ... Generation (NLG) ... Classification (NLC) ... Understanding (NLU) ... Translation ... Summarization ... Sentiment ... Tools

- Assistants ... Personal Companions ... Agents ... Negotiation ... LangChain

- Excel ... Documents ... Database; Vector & Relational ... Graph ... LlamaIndex

- Sequence to Sequence (Seq2Seq)

- Recurrent Neural Network (RNN)

- Long Short-Term Memory (LSTM)

- Bidirectional Encoder Representations from Transformers (BERT) ... a better model, but with less investment behind it than OpenAI's larger organization

- Memory Networks

- Artificial Intelligence (AI) ... Generative AI ... Machine Learning (ML) ... Deep Learning ... Neural Network ... Reinforcement ... Learning Techniques

- Conversational AI ... ChatGPT | OpenAI ... Bing | Microsoft ... Bard | Google ... Claude | Anthropic ... Perplexity ... You ... Ernie | Baidu

- Google Transformer-XL ... T5-XXL ... Google trained a trillion-parameter AI language model | Kyle Wiggers - VB

- The Illustrated Transformer | Jay Alammar

- What is a Transformer? | Maxime Allard - Medium

- Transformers provides state-of-the-art general-purpose architectures (Bidirectional Encoder Representations from Transformers (BERT), Generative Pre-trained Transformer 2 (GPT-2), RoBERTa, XLM, DistilBert, XLNet...) for Natural Language Understanding (NLU) and Natural Language Generation (NLG), with 32+ pretrained models in 100+ languages and deep interoperability between TensorFlow 2.0 and PyTorch. | GitHub

- How do Transformers Work in NLP? A Guide to the Latest State-of-the-Art Models | Prateek Joshi - Analytics Vidhya

- Illustrated GPT-2 | Jay Alammar

Transformers are Neural Network architectures that use self-attention to capture context and long-term dependencies in language. They are used in many Natural Language Processing (NLP) applications, such as chatbots and Sentiment Analysis tools. Transformer models are distinctive in that attention connects every output element to every input element, and the weightings between them are calculated dynamically. | Kyle Wiggers

The dominant sequence transduction models are based on complex recurrent or convolutional neural networks in an Encoder-Decoder configuration. The best performing models also connect the encoder and decoder through an attention mechanism. We propose a new simple network architecture, the Transformer, based solely on attention mechanisms, dispensing with recurrence and convolutions entirely. Experiments on two machine translation tasks show these models to be superior in quality while being more parallelizable and requiring significantly less time to train. Attention Is All You Need | A. Vaswani, N. Shazeer, N. Parmar, J. Uszkoreit, L. Jones, A.N. Gomez, L. Kaiser, and I. Polosukhin
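
The idea that every output element is connected to every input element, with weights computed dynamically, can be sketched as single-head scaled dot-product self-attention. This is a minimal plain-Python illustration, not code from any of the cited sources: the function names (`self_attention`, `softmax`, `matvec`) and the identity projection matrices are hypothetical, and a real model would use learned weights and a tensor library.

```python
import math


def softmax(scores):
    # Subtract the maximum before exponentiating for numerical stability.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def matvec(W, x):
    # Multiply matrix W (a list of rows) by the vector x.
    return [dot(row, x) for row in W]


def self_attention(X, Wq, Wk, Wv):
    """Single-head scaled dot-product self-attention over the sequence X.

    X is a list of input vectors; Wq, Wk, Wv are hypothetical toy
    projection matrices. Returns (outputs, weights), where weights[i][j]
    is how much output i draws on input j.
    """
    Q = [matvec(Wq, x) for x in X]
    K = [matvec(Wk, x) for x in X]
    V = [matvec(Wv, x) for x in X]
    d_k = len(K[0])
    outputs, weights = [], []
    for q in Q:
        # One score per input position: every output looks at every input.
        scores = [dot(q, k) / math.sqrt(d_k) for k in K]
        w = softmax(scores)  # computed from the data, not fixed in advance
        weights.append(w)
        # Each output is a weighted sum of all value vectors.
        outputs.append([sum(w[j] * V[j][c] for j in range(len(V)))
                        for c in range(len(V[0]))])
    return outputs, weights


if __name__ == "__main__":
    X = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
    I = [[1.0, 0.0], [0.0, 1.0]]  # identity projections, for illustration only
    outputs, weights = self_attention(X, I, I, I)
    for row in weights:
        print([round(p, 3) for p in row])
```

Each row of `weights` sums to 1 and has one entry per input position, which is what "every output element is connected to every input element" means concretely. Changing any input vector changes the weights themselves, which is what makes them dynamic rather than fixed parameters.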

Transformers are key components in competitive programming, conversational question answering, combinatorial optimization, and graph learning tasks. In competitive programming, Transformer models produce solutions from textual problem descriptions. The best-known example of a Transformer model is the chatbot ChatGPT, a GPT-based conversational question-answering model. Transformers have also been applied to combinatorial optimization problems such as the Travelling Salesman Problem, and they have been successful in graph learning tasks, especially in predicting the properties of molecules. Transformer models have also proven versatile across modalities such as images, audio, video, and undirected graphs.

The Transformer is a deep machine learning model introduced in 2017, used primarily in the field of natural language processing (NLP). Like Recurrent Neural Networks (RNNs), Transformers are designed to handle ordered sequences of data, such as natural language, for tasks such as machine translation and text summarization. However, unlike RNNs, Transformers do not require that the sequence be processed in order. If the data is natural language, for example, the Transformer does not need to process the beginning of a sentence before it processes the end. Because of this, the Transformer allows much more parallelization than RNNs during training. Since their introduction, Transformers have become the basic building block of most state-of-the-art architectures in Natural Language Processing (NLP), in many cases replacing gated recurrent neural network models such as the Long Short-Term Memory (LSTM). The greater parallelization has made it possible to train on much more data than before, which led to pretrained systems such as Bidirectional Encoder Representations from Transformers (BERT) and Generative Pre-trained Transformer 2 (GPT-2). These are trained on huge amounts of general language data before release and can then be fine-tuned for specific language tasks. | Wikipedia
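
The contrast between sequential RNN processing and order-independent attention can be illustrated with two toy functions. This is a hedged sketch in plain Python: `rnn_states` is a hypothetical scalar RNN and `attention_output` a hypothetical scalar self-attention (queries, keys and values all equal to the raw inputs, with d_k = 1 so no scaling), chosen only to expose the dependency structure. The toy attention ignores word order entirely; the original Transformer adds positional encodings to its input embeddings so that order information is not lost.

```python
import math


def rnn_states(xs, w_h=0.5, w_x=1.0):
    """Hypothetical scalar RNN: h_t = tanh(w_h * h_{t-1} + w_x * x_t).

    Step t needs h_{t-1}, so the loop must run strictly from the
    beginning of the sequence to the end.
    """
    h, states = 0.0, []
    for x in xs:
        h = math.tanh(w_h * h + w_x * x)
        states.append(h)
    return states


def attention_output(xs, i):
    """Hypothetical scalar self-attention output for position i.

    Query, keys and values are the raw inputs (d_k = 1, so no scaling).
    The result depends only on xs, never on other positions' outputs.
    """
    scores = [xs[i] * xj for xj in xs]
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return sum(e / total * xj for e, xj in zip(exps, xs))


xs = [0.2, -1.0, 0.7, 0.4]
states = rnn_states(xs)
# Positions computed front to back...
forward = [attention_output(xs, i) for i in range(len(xs))]
# ...and back to front give the same outputs, since no position waits on another.
backward = [attention_output(xs, i) for i in reversed(range(len(xs)))][::-1]
```

The RNN's last state changes when only the first input changes, because information has to pass through every intermediate step. The attention outputs come out identical whether positions are computed front to back or back to front, which is what allows them to be computed in parallel during training.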

Tensor2Tensor (T2T) | Google Brain

- Tensor2Tensor Transformers: New Deep Models for NLP | Łukasz Kaiser

- Tensor2Tensor | GitHub

- Tensor2Tensor Library | GitHub

- Welcome to the Tensor2Tensor Colab

Tensor2Tensor, or T2T for short, is a library of deep learning models and datasets designed to make deep learning more accessible and [accelerate ML research](https://research.googleblog.com/2017/06/accelerating-deep-learning-research.html). T2T is actively used and maintained by researchers and engineers within the [Google Brain team](https://research.google.com/teams/brain/) and a community of users. This colab shows you some datasets we have in T2T, how to download and use them, some models we have, how to download pre-trained models and use them, and how to create and train your own models. | Jay Alammar

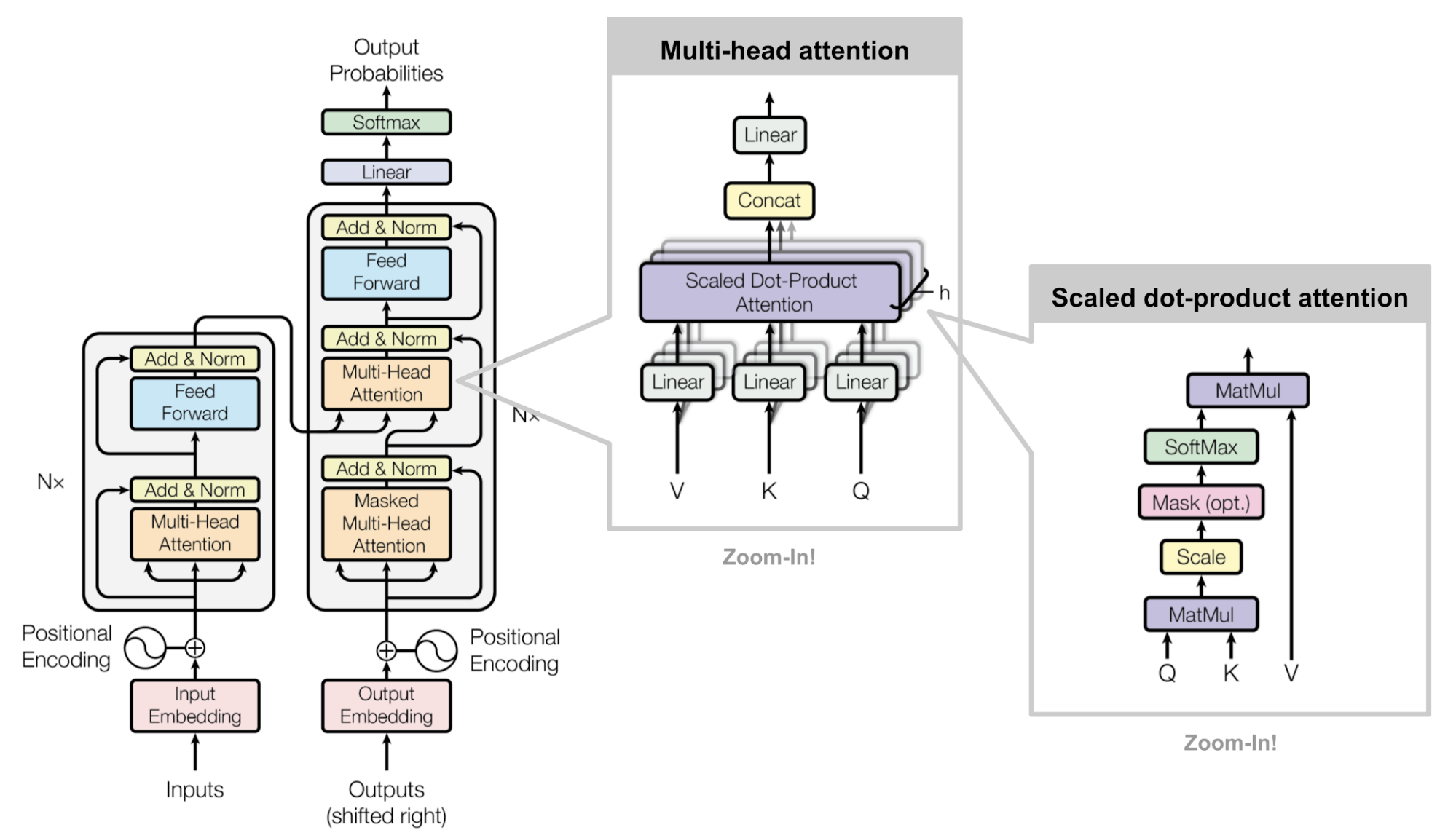

Multi-head scaled dot-product attention mechanism. (Image source: Fig 2 in Vaswani, et al., 2017)
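
The figure's multi-head mechanism computes Attention(Q, K, V) = softmax(Q K^T / sqrt(d_k)) V independently in several heads and then concatenates the results. The sketch below is a minimal plain-Python version under stated assumptions: all weight matrices are hypothetical d_model × d_model toy inputs, and each head uses a column slice of one shared projection, which is equivalent to giving each head its own d_model × (d_model / h) projection as in Vaswani et al. Masking, dropout, and batching are omitted.

```python
import math


def softmax(v):
    # Subtract the maximum before exponentiating for numerical stability.
    m = max(v)
    e = [math.exp(x - m) for x in v]
    s = sum(e)
    return [x / s for x in e]


def matmul(A, B):
    # (n x k) @ (k x m) -> (n x m); matrices are lists of rows.
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]


def transpose(A):
    return [list(r) for r in zip(*A)]


def scaled_dot_product_attention(Q, K, V):
    # softmax(Q K^T / sqrt(d_k)) V, with the softmax taken over each row.
    d_k = len(K[0])
    scores = matmul(Q, transpose(K))
    weights = [softmax([s / math.sqrt(d_k) for s in row]) for row in scores]
    return matmul(weights, V)


def multi_head_attention(X, Wq, Wk, Wv, Wo, num_heads):
    """Multi-head self-attention over X (n x d_model).

    Wq, Wk, Wv, Wo are hypothetical d_model x d_model toy matrices.
    Column block h of each projection acts as head h's own projection.
    """
    d_model = len(X[0])
    if d_model % num_heads != 0:
        raise ValueError("d_model must be divisible by num_heads")
    d_head = d_model // num_heads
    Q, K, V = matmul(X, Wq), matmul(X, Wk), matmul(X, Wv)
    heads = []
    for h in range(num_heads):
        cols = slice(h * d_head, (h + 1) * d_head)
        heads.append(scaled_dot_product_attention(
            [row[cols] for row in Q],
            [row[cols] for row in K],
            [row[cols] for row in V],
        ))
    # Concatenate the heads' outputs along the feature dimension.
    concat = [[x for head in heads for x in head[i]] for i in range(len(X))]
    # Final output projection mixes information across heads.
    return matmul(concat, Wo)
```

With `num_heads=1` and identity weights this reduces to plain scaled dot-product attention. With more heads, each head attends using only its own slice of the features, so different heads can produce different attention patterns over the same sequence.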