Transformer-XL


- Attention Mechanism ... Transformer ... Generative Pre-trained Transformer (GPT) ... GAN ... BERT

- A Light Introduction to Transformer-XL | Elvis - Medium

- Transformer-XL Explained: Combining Transformers and RNNs into a State-of-the-art Language Model | Rani Horev - Towards Data Science

- Transformer-XL: Language Modeling with Longer-Term Dependency | Z. Dai, Z. Yang, Y. Yang, W.W. Cohen, J. Carbonell, Quoc V. Le, and R. Salakhutdinov

- Large Language Model (LLM) ... Natural Language Processing (NLP) ... Generation ... Classification ... Understanding ... Translation ... Tools & Services

- Memory Networks

- Autoencoder (AE) / Encoder-Decoder

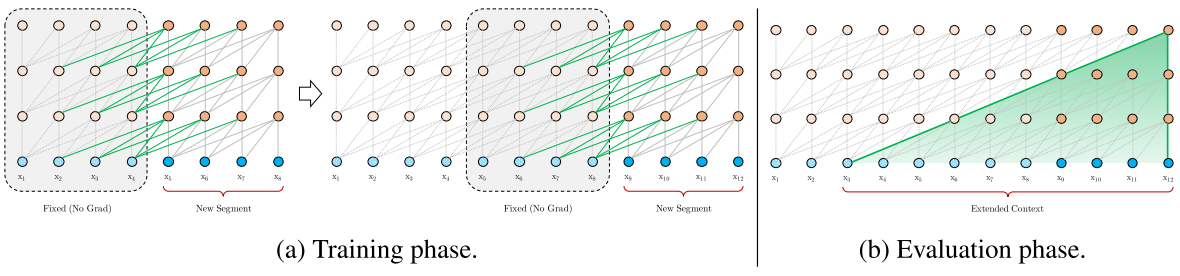

Transformer-XL combines the two leading architectures for language modeling:

- Recurrent Neural Network (RNN), which handles the input tokens (words or characters) one by one to learn the relationships between them

- Attention Mechanism/Transformer Model, which receives a segment of tokens and learns the dependencies between them all at once using an attention mechanism