Difference between revisions of "Time"

Scientists use the molecular clock, which assumes that genetic changes accumulate steadily, to estimate when species diverged. Recent models such as the Covariant Evolutionary Tempo (CET) by Budd & Mann suggest that evolution is not always steady: they predict rapid bursts early in the history of major groups (such as mammals or birds). Faster initial evolution and diversification would explain mismatches with the fossil record and refine our understanding of how large animal groups emerge rapidly.

How the Molecular Clock Works

- Rate of Mutation: The core idea is that mutations in DNA accumulate at a relatively constant rate over time.

- Genetic Differences: By comparing DNA or protein sequences between species, scientists count the genetic differences.

- Dating Divergence: More differences imply a longer time since the species shared a common ancestor, allowing estimation of evolutionary timelines.

Challenges & New Models (Budd & Mann's CET)

The Covariant Evolutionary Tempo model suggests that when a big group of organisms appears, evolution actually speeds up. This would make it appear that more time had passed when evolution was really on fast-forward, with lineages differentiating into the various groups that eventually appeared in the fossil record. “While the speeding clock idea needs testing,” Telford wrote, “it could explain other mismatches between molecular clocks and the fossil record.”

Latest revision as of 11:37, 3 April 2026


- Time ... PNT ... GPS ... Retrocausality ... Delayed Choice Quantum Eraser ... Quantum

- Government Services:

- National Institute of Standards and Technology (NIST) ... Time and Frequency Division, Physical Measurement Laboratory

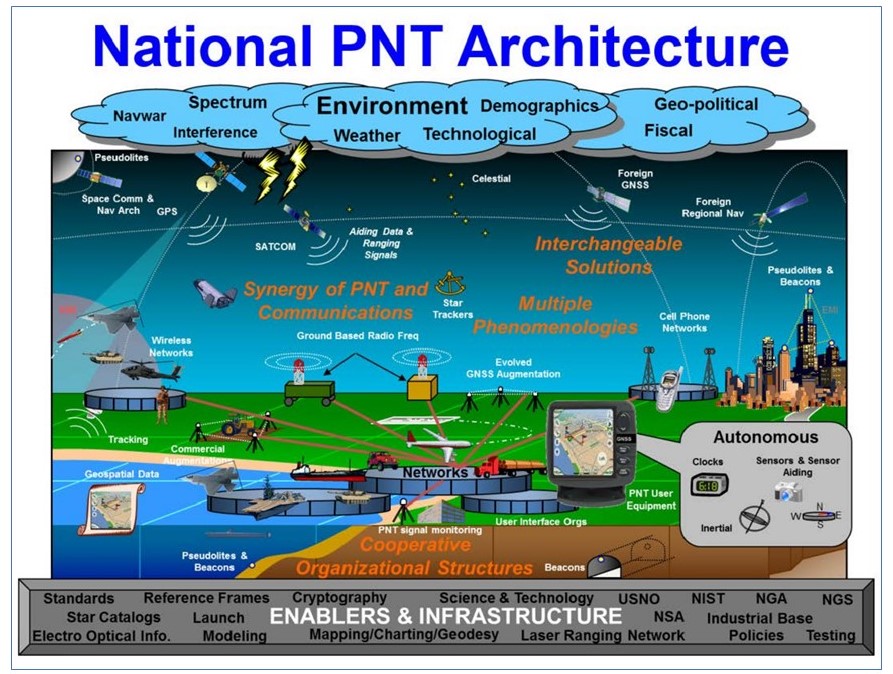

- U.S. Department of Homeland Security (DHS) ... Science and Technology (S&T) Positioning, Navigation, and Timing (PNT) Program

- Defense ... Precise Time Department ... U.S. Naval Observatory has maintained a Time Service Department since 1880

- Perspective ... Context ... In-Context Learning (ICL) ... Transfer Learning ... Out-of-Distribution (OOD) Generalization

- National Timing Centre ... Assured Time and Frequency for the UK

- Time ...Coordinated Universal Time UTC ... Clock ...Timekeeping | Wikipedia

- The Very Long and Fascinating History of Clocks | Christopher McFadden - Interesting Engineering

- What Is a Leap Second? | Konstantin Bikos and Anne Buckle - timeanddate.com

- Atomic clocks ...Tide Clock | Amazon

- Clock synchronization

- Time: Do the past, present, and future exist all at once? | BigThink (video) ... astrophysicist Michelle Thaller, science educator Bill Nye, author James Gleick, and neuroscientist Dean Buonomano discuss how the human brain perceives the passage of time, the idea in theoretical physics of time as a fourth dimension, and the theory that space and time are interwoven.

- Cybersecurity

- Crown Sterling ... changing the face of digital security with its non-integer-based algorithms that leverage time, AI and irrational numbers.

- Quantum cryptography ... the infosec industry looks to quantum cryptography and quantum key distribution (QKD)

- What’s a Time Crystal? | Charles Q. Choi - IEEE Spectrum ... And how do Google researchers use quantum computers to make them? ... quantum system of many particles that organize themselves into a periodic pattern of motion—periodic in time rather than in space—that persists in perpetuity.

- This Mirror Reverses How Light Travels in Time | Charles Q. Choi - IEEE Spectrum ... There are already applications in wireless, radar, and optical computing ... These applications often reverse the order of signals to help process them.

Sequence/Time-based Algorithms

- 10 Incredibly Useful Time Series Forecasting Algorithms

- Artificial intelligence (AI) algorithms: a complete overview

- New AI Algorithms Streamline Data Processing for Space-based Instruments

- Unlocking The Power Of Predictive Analytics With AI - Forbes

- A Comparison of Time Series Databases and Netsil’s Use of Druid | Netsil

- Microsoft announces the general availability of Azure Time Series Insights | Ryan Waite - Microsoft

- Top 10 Time Series Databases | Outlyer

Time-based AI algorithms are algorithms that use time series data to make predictions or analyses. Time series data are data that are collected over time and have a temporal order. For example, the daily temperature, the stock prices, or the number of visitors to a website are all time series data. These algorithms can be used for a variety of purposes, such as forecasting future values, detecting trends and patterns, and making informed decisions based on historical data. They can be applied to many different fields, including finance, economics, meteorology, and healthcare.
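As a minimal sketch of forecasting from time-ordered data, the snippet below applies simple exponential smoothing to a short series. The function name, the `alpha` smoothing factor, and the temperature values are illustrative assumptions, not taken from any particular library or data set.

```python
def simple_exponential_smoothing(values, alpha=0.5):
    """Return the one-step-ahead forecast after smoothing `values`.

    alpha (0 < alpha <= 1) is an assumed smoothing factor: higher values
    weight recent observations more heavily.
    """
    if not values:
        raise ValueError("values must contain at least one observation")
    if not 0 < alpha <= 1:
        raise ValueError("alpha must be in (0, 1]")
    level = values[0]  # initialise the level with the first observation
    for observation in values[1:]:
        # Blend the newest observation with the running level.
        level = alpha * observation + (1 - alpha) * level
    return level


# Hypothetical daily temperatures (degrees Celsius), oldest first.
daily_temperature = [20.0, 22.0, 21.0, 23.0]
forecast = simple_exponential_smoothing(daily_temperature, alpha=0.5)
print(forecast)  # 22.0
```

The temporal order matters here: shuffling the observations would change the forecast, which is what distinguishes time series data from an unordered sample.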

Whenever we have developed better clocks, we’ve learned something new about the world.

- Alexander Smith ... New Time Dilation Phenomenon Revealed: Timekeeping Theory Combines Quantum Clocks and Einstein’s Relativity | Dartmouth College

Common

There are different types of sequence/time-based AI algorithms, depending on the goal and the method of the algorithm. Some of the most common ones are:

- Time Series Forecasting:

- Statistical:

- Autoregressive (AR): uses past values of the time series to predict future values. It assumes that the current value is a linear function of previous values. For example, AR can be used to forecast the weather based on historical data.

- Autoregressive Integrated Moving Average (ARIMA): is an extension of AR that also accounts for trends in the time series. It uses differencing to make the time series stationary (i.e., having constant mean and variance over time) and then applies AR and moving average (MA) models. For example, ARIMA can be used to forecast the sales of a product based on past sales and their trend.

- Seasonal Autoregressive Integrated Moving Average (SARIMA): is a further extension of ARIMA that also accounts for the cyclic variations of the time series. It uses seasonal differencing and seasonal AR and MA models to capture the periodic fluctuations of the time series. For example, SARIMA can be used to forecast the electricity demand based on past demand and seasonal factors.

- Exponential Smoothing (ES): uses weighted averages of past values of the time series to predict future values. It gives more weight to recent values than older values, and it can also incorporate trend and seasonality components. For example, ES can be used to forecast the inventory level based on past demand and supply.

- Deep Learning:

- Prophet: is a modern and flexible approach to time series forecasting developed by Facebook. It uses a decomposable model that consists of trend, seasonality, and holiday components, and it allows for adding custom effects and prior information. For example, Prophet can be used to forecast the web traffic for a data science blog website based on past traffic and special events.

- Neural Turing Machine (NTM): combines the fuzzy pattern matching capabilities of Neural Networks with the algorithmic power of programmable computers. NTMs are an instance of Memory Augmented Neural Networks, a class of Recurrent Neural Networks (RNNs) that decouple computation from memory by introducing an external memory unit. NTMs have demonstrated superior performance over Long Short-Term Memory cells in several sequence learning tasks.


- Neural Networks:

- Recurrent Neural Network (RNN): is a type of Deep Learning model that can process sequential data such as time series. It uses a network of neurons that have feedback loops, which enable them to store information from previous inputs. For example, RNN can be used to forecast the prices of Bitcoin based on past prices and other factors.

- Gated Recurrent Unit (GRU): is a gating mechanism in Recurrent Neural Network (RNN) architectures. Like other RNNs, a GRU can process sequential data such as time series, natural language, and speech. The GRU is similar to a Long Short-Term Memory (LSTM) with a forget gate, but has fewer parameters than LSTM, as it lacks an output gate. This means that GRUs are generally easier and faster to train than their LSTM counterparts. GRUs have been found to perform similarly to LSTMs on certain tasks such as polyphonic music modeling, speech signal modeling, and natural language processing, which suggests that gating is helpful in general.

- Long Short-Term Memory (LSTM): is a special type of RNN that can handle long-term dependencies in sequential data. It uses a memory cell that can store, update, and forget information over time, and it has gates that control the flow of information in and out of the cell. For example, LSTM can be used to forecast the generation of wind power based on past generation and weather conditions.

- Bidirectional Long Short-Term Memory (BI-LSTM): is a type of Recurrent Neural Network (RNN) architecture that processes data in both forward and backward directions. It consists of two LSTMs: one taking the input in a forward direction, and the other in a backward direction. BI-LSTMs effectively increase the amount of information available to the network, improving the context available to the algorithm, for example by knowing which words immediately follow and precede a word in a sentence. Compared to LSTM, BI-LSTM combines the forward hidden layer and the backward hidden layer, so it can access both the preceding and succeeding contexts. This flow of data in both directions distinguishes the BI-LSTM from other LSTMs. BI-LSTMs have been successfully applied to various tasks such as natural language processing, speech recognition, and traffic forecasting.

- Bidirectional Long Short-Term Memory (BI-LSTM) with Attention Mechanism: is a type of Recurrent Neural Network (RNN) architecture that processes data in both forward and backward directions, and uses an attention mechanism to weigh the importance of different parts of the input sequence. The attention mechanism allows the network to focus on specific parts of the input sequence when making predictions, rather than treating all parts of the sequence equally. This can be particularly useful when dealing with long input sequences, where some parts of the sequence may be more relevant to the prediction than others. BI-LSTMs with Attention Mechanism have been successfully applied to various tasks such as text classification, Sentiment Analysis, and human activity recognition.

- Averaged Stochastic Gradient Descent (ASGD) Weight-Dropped LSTM (AWD-LSTM): is a variant of LSTM that employs DropConnect on the hidden-to-hidden weights for regularization and NT-ASGD for optimization. NT-ASGD stands for non-monotonically triggered averaged stochastic gradient descent, which returns an average of the weights from the last iterations. AWD-LSTM has shown strong results on both word-level and character-level language models.

- Sequence to Sequence (Seq2Seq): can map a variable-length input sequence to a variable-length output sequence. It is often used for natural language processing tasks, such as machine translation, text summarization, conversational models, and question answering. The Seq2Seq algorithm consists of two main components: an encoder and a decoder. The encoder reads the input sequence one timestep at a time and produces a hidden vector representation of the input. The decoder then uses the hidden vector as the initial state and generates the output sequence one timestep at a time, using the previous output as the input context.

- Transformer: is a Deep Learning model that processes sequential data such as time series using layers of attention mechanisms, without recurrent or convolutional layers. The attention layers learn to focus on the relevant parts of the input, so the model handles long-term dependencies and parallel computation efficiently, and it can achieve better results than RNN-based Seq2Seq models on various tasks. For example, Transformer can be used to forecast the spread of COVID-19 based on past cases and interventions.

- Generative Pre-trained Transformer (GPT): is a family of language models that use Deep Learning techniques to generate natural language text. GPT models are artificial Neural Networks based on the transformer architecture, pre-trained on large data sets of unlabelled text, and able to generate novel human-like content. They can be fine-tuned for various natural language processing tasks such as text generation, language translation, and text classification. The first GPT was introduced in 2018 by the American artificial intelligence (AI) company OpenAI.

- Attention Mechanism: allows the decoder to selectively focus on different parts of the input sequence when generating the output, instead of relying on a single fixed vector. This can improve the performance and accuracy of the Seq2Seq model, especially for long sequences.

- Transformer-XL: is a transformer-based language model that introduces the notion of recurrence to the deep self-attention network. It was designed to enable learning dependency beyond a fixed length without disrupting temporal coherence. The model consists of a segment-level recurrence mechanism and a novel positional encoding scheme. This method not only enables capturing longer-term dependency, but also resolves the context fragmentation problem. As a result, Transformer-XL learns dependency that is 80% longer than RNNs and 450% longer than vanilla Transformers, achieves better performance on both short and long sequences, and is up to 1,800+ times faster than vanilla Transformers during evaluation.

- Beam search: is a technique to find the most probable output sequence given the input sequence, by keeping track of multiple candidate sequences and expanding them based on their probabilities. This can improve the quality and diversity of the output, compared to using a greedy or random search.

- Convolutional Neural Network (CNN): is another type of Deep Learning model that can process sequential data such as time series. It uses layers of filters that can extract features from local regions of the input data, and it can capture complex patterns and relationships in the data. For example, CNN can be used to forecast an avalanche in a famous ski resort based on past snowfall and temperature data.

- Spatial-Temporal Dynamic Network (STDN): is a Deep Learning framework proposed to address the challenge of modeling complex spatial dependencies and temporal dynamics in traffic prediction. A flow gating mechanism is introduced to learn the dynamic similarity between locations, and a periodically shifted attention mechanism is designed to handle long-term periodic temporal shifting. This approach has been shown to be effective in predicting taxi demand.


- Other:

- Gaussian Process (GP): is a type of probabilistic model that can handle uncertainty and noise in time series data. It uses a function that defines how similar any two points in the input space are, and it produces a distribution over possible outputs for any given input. For example, GP can be used to forecast the depletion level of stocks in stores based on past sales and inventory data.

- End-to-End Speech Translation: is an approach to speech translation that has attracted strong research interest in the last few years. It uses a single Deep Learning model that learns to generate translated text directly from the input audio. This approach, known as “end-to-end” or “direct” ST, offers several advantages over a cascade of separate speech recognition and translation systems, such as avoiding the compounding of errors, the direct use of prosodic information from speech, and a lower inference time.

- (Tree) Recursive Neural (Tensor) Network (RNTN): is a type of Neural Network that is mostly used for natural language processing. It has a tree structure with a neural net at each node. The purpose of these nets is to analyze data that have a hierarchical structure. An RNTN is a powerful tool for deciphering and labeling patterns. Structurally, an RNTN is a binary tree with three nodes: a root and two leaves. The root and leaf nodes are not single neurons but groups of neurons; the more complicated the input data, the more neurons are required. RNTNs have been successfully applied to Sentiment Analysis, where the input is a sentence in its parse tree structure, and the output is the classification for the input sentence, i.e., whether the meaning is very negative, negative, neutral, positive, or very positive.

- Temporal Difference (TD) Learning: refers to a class of model-free Reinforcement Learning (RL) methods which learn by bootstrapping from the current estimate of the value function. These methods sample from the environment, like Monte Carlo methods, and perform updates based on current estimates, like dynamic programming methods. While Monte Carlo methods only adjust their estimates once the final outcome is known, TD methods adjust predictions to match later, more accurate, predictions about the future before the final outcome is known.
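The bootstrapping idea in the Temporal Difference item above can be sketched with the tabular TD(0) update, V(s) ← V(s) + α·(r + γ·V(s') − V(s)). The toy chain environment, the state names, and the parameter values below are assumptions chosen for illustration.

```python
def td0_update(values, state, reward, next_state, alpha=0.1, gamma=1.0):
    """Apply one TD(0) update to `values` in place and return the TD error."""
    # Bootstrapped target: immediate reward plus the current estimate of
    # the next state's value (no need to wait for the final outcome).
    td_target = reward + gamma * values.get(next_state, 0.0)
    td_error = td_target - values.get(state, 0.0)
    values[state] = values.get(state, 0.0) + alpha * td_error
    return td_error


def run_chain_episodes(num_episodes=200, alpha=0.1):
    """Learn state values on a toy deterministic chain A -> B -> end."""
    # Each transition is (state, reward, next_state); "end" is terminal.
    episode = [("A", 0.0, "B"), ("B", 1.0, "end")]
    values = {"A": 0.0, "B": 0.0, "end": 0.0}
    for _ in range(num_episodes):
        for state, reward, next_state in episode:
            td0_update(values, state, reward, next_state, alpha=alpha)
    return values


values = run_chain_episodes()
print(values)  # A and B both approach 1.0, the total reward that follows them
```

State A never receives a reward directly; its value rises only because it bootstraps from the improving estimate for B, which is the behavior that separates TD methods from Monte Carlo updates.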

What Time Is It?

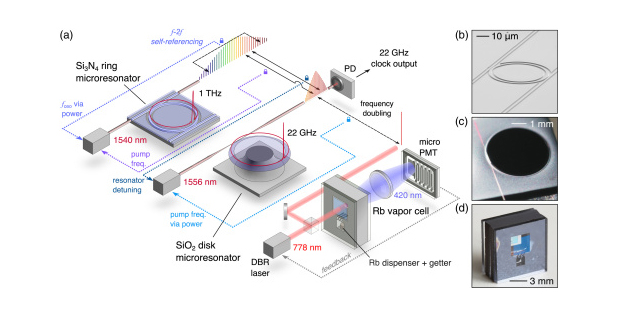

- DARPA Making Progress on Miniaturized Atomic Clocks for Future PNT Applications | US Defense Advanced Research Projects Agency (DARPA)


The Earth's rotation is so regular that the length of a day varies only by milliseconds ... do you feel the Earth's rotation slowing down?

Light Clock 1905 - Einstein's Thought Experiment

Imagine you have a special clock that works with light. This clock has two mirrors facing each other, and a beam of light bounces up and down between them. Every time the light goes from the bottom mirror to the top and back down, it counts as one tick of the clock. Einstein's light clock thought experiment shows that when things move fast, time slows down for them. This surprising idea helps us understand the nature of time and motion in our universe. Now, let's think about this clock in two different situations.

Situation 1: Standing Still: First, picture the clock sitting on a table, not moving at all. The light goes straight up to the top mirror and straight back down to the bottom mirror. If you measured the time it takes for the light to do this, you would see it takes a certain amount of time for one tick.

Situation 2: Moving Clock: Now, imagine you place the clock on a skateboard and push it so it's moving. As the clock moves, the light beam has to travel a different path. Instead of going straight up and down, it now has to go in a diagonal path because the mirrors are moving while the light is traveling. It's like when you throw a ball to a friend while running; the ball has to cover more distance because both of you are moving.

What This Means ... Because the light in the moving clock has to travel a longer, diagonal path, it takes more time for one tick to happen compared to when the clock is standing still. This means that for someone watching the moving clock, time appears to run slower for the moving clock compared to a clock that's not moving. This idea is called time dilation. It means that time actually passes at different rates depending on how fast something is moving. If you were riding on the skateboard with the clock, you wouldn't notice anything different about the clock's ticks. But someone standing still and watching you would see that your clock ticks more slowly.

Why It Matters ... This thought experiment helps us understand that time isn't the same everywhere and can be different depending on how fast things are moving. This concept is a key part of Einstein's theory of special relativity, which helps scientists understand how the universe works, especially when things are moving very fast, like spaceships or particles in a collider.
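The light-clock argument can be checked numerically. The sketch below computes one tick of a resting clock and of a moving clock from the diagonal-path geometry, then compares the ratio with the standard time-dilation factor 1/√(1 − v²/c²). The mirror gap and the speed are hypothetical values picked for the example.

```python
import math

C = 299_792_458.0  # speed of light in m/s (exact by SI definition)


def rest_tick(mirror_gap_m):
    """Round-trip time of light for a clock at rest: up and back down."""
    return 2.0 * mirror_gap_m / C


def moving_tick(mirror_gap_m, speed_m_s):
    """Tick duration seen by an observer who watches the clock move sideways.

    During half a tick (t/2) the light covers the diagonal c*t/2 while the
    mirrors shift sideways by v*t/2, so (c*t/2)**2 = gap**2 + (v*t/2)**2.
    Solving for t gives the expression below.
    """
    if not 0 <= speed_m_s < C:
        raise ValueError("speed must be in [0, c)")
    return 2.0 * mirror_gap_m / math.sqrt(C**2 - speed_m_s**2)


def lorentz_factor(speed_m_s):
    """Time-dilation factor gamma = 1 / sqrt(1 - v^2/c^2)."""
    return 1.0 / math.sqrt(1.0 - (speed_m_s / C) ** 2)


gap = 1.0        # hypothetical 1 m mirror separation
speed = 0.6 * C  # hypothetical speed: 60% of the speed of light
ratio = moving_tick(gap, speed) / rest_tick(gap)
print(ratio, lorentz_factor(speed))  # both close to 1.25
```

At skateboard speeds the factor differs from 1 by far less than any everyday clock could register, which is why the effect only becomes noticeable near the speed of light.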

Precision Time Protocol (PTP)


- Precision Time Protocol PTP-1588 | IEEE ...High precision clock synchronization that computes latency and offset

- How Precision Time Protocol is being deployed at Meta | Oleg Obleukhov & Ahmad Byagowi - Engineering at Meta

- PTP IEEE 1588v2 | Juniper Networks ...Time Management Administration Guide

The Precision Time Protocol (PTP) is a protocol used to synchronize clocks throughout a computer network. On a local area network, it achieves clock accuracy in the sub-microsecond range, making it suitable for measurement and control systems. PTP is currently employed to synchronize financial transactions, mobile phone tower transmissions, sub-sea acoustic arrays, and networks that require precise timing but lack access to satellite navigation signals. Precision Time Protocol | Wikipedia

Overall, its structure is similar to that of the Network Time Protocol (NTP): both have different levels within them, and GPS satellites can serve as the time source. The major difference is accuracy: PTP reaches the sub-microsecond range on a local area network, whereas NTP is typically accurate to milliseconds.
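How PTP "computes latency and offset" can be sketched from the four timestamps of a Sync / Delay_Req exchange. This is a simplified illustration that assumes the network delay is identical in both directions; the function name and the timestamp values are hypothetical.

```python
def ptp_offset_and_delay(t1, t2, t3, t4):
    """Estimate clock offset and one-way delay from a PTP-style exchange.

    t1: master sends Sync            (master clock)
    t2: slave receives Sync          (slave clock)
    t3: slave sends Delay_Req        (slave clock)
    t4: master receives Delay_Req    (master clock)
    Assumes the network delay is the same in both directions.
    """
    master_to_slave = t2 - t1  # equals delay + offset
    slave_to_master = t4 - t3  # equals delay - offset
    offset = (master_to_slave - slave_to_master) / 2
    delay = (master_to_slave + slave_to_master) / 2
    return offset, delay


# Hypothetical timestamps in nanoseconds: the slave clock runs 500 ns
# ahead of the master and the one-way network delay is 2000 ns.
t1 = 1_000_000
t2 = t1 + 2_000 + 500    # arrival stamped by the fast slave clock
t3 = t2 + 10_000         # slave replies 10 microseconds later (slave clock)
t4 = (t3 - 500) + 2_000  # arrival stamped by the master clock
offset, delay = ptp_offset_and_delay(t1, t2, t3, t4)
print(offset, delay)  # 500.0 2000.0
```

The slave would then subtract the estimated offset from its clock. Any asymmetry between the two path delays shows up directly as an offset error, which is one reason deployments rely on hardware timestamping and PTP-aware network equipment.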


- Case Studies

- Autonomous Drones

- Deepmind teaches AI to follow navigational directions like humans | Tristan Greene

- History of Navigation | Wikipedia

- Department of Homeland Security (DHS) Science and Technology (S&T) Positioning, Navigation, and Timing (PNT) Program

- Navigation Aids | Department of Transportation, Federal Aviation Administration

- VN-300 | Vectornav ...miniature, high-performance Dual Antenna Global Navigation Satellite Systems (GNSS)-Aided Inertial Navigation System (INS) that combines micro-electromechanical systems (MEMS) inertial sensors, two high-sensitivity GNSS receivers, and advanced Kalman filtering algorithms to provide optimal estimates of position, velocity, and orientation.

Navigation is a field of study that focuses on the process of monitoring and controlling the movement of a craft or vehicle from one place to another. The field of navigation includes four general categories: land navigation, marine navigation, aeronautic navigation, and space navigation. Navigation | Wikipedia


Global Positioning System (GPS)


- Astronomy

- GPS has been copied by Russia's GLONASS, Europe’s Galileo, China's BeiDou, India’s IRNSS, and Japan’s QZSS

- Artificial intelligence in GPS navigation systems | Jeffrey L. Duffany

- RoadTagger: GPS system upgrade utilizes AI to make sure you're in the right lane | David Nield - New Atlas ...Artificial intelligence to update digital maps and improve GPS navigation | Amit Malewar - InceptiveMind

- GPS.gov ...Timing

- Inside GNSS ...Global Navigation Satellite Systems

- Navstar | Space.com ...is a network of U.S. satellites that provide GPS services

- SpaceX launches third-generation GPS navigation satellite | CBS News ...GPS-3 satellite — the fourth in a series of more powerful third-generation navigation stations built by Lockheed Martin — was expected to be deployed about 90 minutes after liftoff. Assuming tests and checkout go well, it will join a globe-spanning constellation of 31 GPS satellites.

- Air Force asks three U.S. contractors to develop miniature ASIC technology for next-gen GPS receivers | John Keller - Military & Aerospace Electronics ...small low-power-consumption GPS enabling technologies to include a next-generation ASIC for secure GPS land navigation.

- China Launches Beidou, Its Own Version of GPS | Andrew Jones - IEEE Spectrum ...China places the final Beidou navigation system satellite into orbit

- Big News For ISRO! Indian Navigation System (IRNSS) Gets Approval By IMO For Global Operations | Smriti Chaudhary - The EurAsian Times

GPS receivers that use the L5 band can pinpoint a position to within 30 centimeters or 11.8 inches. The GPS concept is based on time and the known position of specialized GPS satellites. The satellites carry very stable atomic clocks that are synchronized with one another and with the ground clocks. Any drift from time maintained on the ground is corrected daily. In the same manner, the satellite locations are known with great precision. GPS receivers have clocks as well, but they are less stable and less precise. Each GPS satellite continuously transmits a radio signal containing the current time and data about its position. Since the speed of radio waves is constant and independent of the satellite speed, the time delay between when the satellite transmits a signal and the receiver receives it is proportional to the distance from the satellite to the receiver. A GPS receiver monitors multiple satellites and solves equations to determine the precise position of the receiver and its deviation from true time. At a minimum, four satellites must be in view of the receiver for it to compute four unknown quantities (three position coordinates and clock deviation from satellite time). Global Positioning System | Wikipedia
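The four-unknown solve described above can be sketched as a Gauss-Newton iteration on pseudoranges, where each pseudorange is the speed of light times the measured delay. This is an idealized illustration, not receiver firmware: the satellite coordinates, the receiver position, and the clock error are hypothetical, and atmospheric and relativistic corrections are ignored.

```python
import math

C = 299_792_458.0  # speed of light, m/s


def solve_linear(a, b):
    """Solve a square system a @ x = b by Gaussian elimination with pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for k in range(col, n + 1):
                m[r][k] -= factor * m[col][k]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        known = sum(m[r][k] * x[k] for k in range(r + 1, n))
        x[r] = (m[r][n] - known) / m[r][r]
    return x


def solve_position(satellites, pseudoranges, iterations=10):
    """Gauss-Newton solve for (x, y, z, clock_bias_m) from four pseudoranges."""
    estimate = [0.0, 0.0, 0.0, 0.0]  # start at Earth's centre, zero bias
    for _ in range(iterations):
        jacobian, residuals = [], []
        for sat, rho in zip(satellites, pseudoranges):
            diff = [estimate[i] - sat[i] for i in range(3)]
            dist = math.sqrt(sum(d * d for d in diff))
            # Row of partial derivatives of (dist + bias) w.r.t. x, y, z, bias.
            jacobian.append([d / dist for d in diff] + [1.0])
            residuals.append(rho - (dist + estimate[3]))
        step = solve_linear(jacobian, residuals)
        estimate = [e + s for e, s in zip(estimate, step)]
    return estimate


# Hypothetical satellite positions (metres, Earth-centred) and receiver truth.
satellites = [
    (0.0, 0.0, 26_600_000.0),
    (20_000_000.0, 0.0, 17_500_000.0),
    (-10_000_000.0, 17_320_000.0, 17_500_000.0),
    (-10_000_000.0, -17_320_000.0, 17_500_000.0),
]
true_position = (50_000.0, -30_000.0, 6_371_000.0)
true_clock_error_s = 1e-5  # receiver clock is 10 microseconds fast

# Measured delay = travel time + receiver clock error; pseudorange = c * delay.
pseudoranges = [
    C * (math.dist(sat, true_position) / C + true_clock_error_s)
    for sat in satellites
]
x, y, z, bias_m = solve_position(satellites, pseudoranges)
print(round(x), round(y), round(z), bias_m / C)
```

With exactly four satellites the linearized system is square, so each step is a plain linear solve; with more satellites in view, a least-squares step would replace it. A 10-microsecond clock error corresponds to roughly 3 km of range, which is why the clock deviation has to be solved alongside the position.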


Deep-Space Positioning System (DPS)


- NASA is Making An AI-Based GPS For Space | Kristin Houser

- Frontier Development Lab (FDL) ...Artificial Intelligence Research for Space Science, Exploration & All Humankind


Jamming and Spoofing


- The Resilient Navigation and Timing Foundation

- Department of Homeland Security (DHS) Science and Technology (S&T) Resilient Positioning, Navigation, and Timing (PNT) Conformance Framework

- The Space Force: A Conversation With United States Secretary Of The Air Force Barbara Barrett | Steve Forbes - Forbes ... We are vulnerable. For example, the U.S. and the global economy are totally dependent on satellites, most especially the GPS, which is operated by the Space Force.
