3D Model

Latest revision as of 09:07, 16 June 2024

Youtube search... ...Google search

- Robotics ... Vehicles ... Drones ... 3D Model ... Point Cloud

- Simulation ... Simulated Environment Learning ... World Models ... Minecraft: Voyager

- Cybersecurity ... OSINT ... Frameworks ... References ... Offense ... NIST ... DHS ... Screening ... Law Enforcement ... Government ... Defense ... Lifecycle Integration ... Products ... Evaluating

- Spatial-Temporal Dynamic Network (STDN)

- Hyperdimensional Computing (HDC)

- Video/Image ... Vision ... Enhancement ... Fake ... Reconstruction ... Colorize ... Occlusions ... Predict image ... Image/Video Transfer Learning

- A survey on Deep Learning Advances on Different 3D Data Representations | E. Ahmed, A. Saint, A. Shabayek, K. Cherenkova, R. Das, G. Gusev, and D. Aouada - extending 2D deep learning to 3D data is not a straightforward task; it depends on the data representation itself and the task at hand.

- The Amazing Ways YouTube Uses Artificial Intelligence And Machine Learning | Bernard Marr - Forbes

- Azure Custom Vision ...an AI service and end-to-end platform for applying computer vision

Geometric Deep Learning

3D Machine Learning | GitHub

- Courses

- Datasets

- 3D Pose Estimation

- Single Object Classification

- Multiple Objects Detection

- Scene/Object Semantic Segmentation

- 3D Geometry Synthesis/Reconstruction

- Texture/Material Analysis and Synthesis

- Style Learning and Transfer

- Scene Synthesis/Reconstruction

- Scene Understanding

3D Convolutional Neural Networks (3DCNN)

3D CNN models are widely used for object classification and detection within varying data modalities such as LiDAR Point Cloud, RGB-Depth data, 3D Computer Aided Design (CAD) models, and medical CT imagery. RGB-Depth data can also be processed using CNN by firstly extracting proposals from 2D RGB images using a 2D object detector and transforming the proposals and corresponding depth information into 3D Point Clouds. The generated 3D point clouds can be further explored by 3D CNN models such as PointNet. These models designed for Point Clouds, RGBD data, CAD models or medical CT images are not readily transferable to our volumetric 3D CT imagery for baggage security screening since the modality of input data for 3D CNN can differ significantly. However, the design of 3D CNN architectures and the training strategies used in existing work can be repurposed towards our prohibited item classification and detection within 3D CT baggage imagery. On the Evaluation of Prohibited Item Classification and Detection in Volumetric 3D Computed Tomography Baggage Security Screening Imagery | Q. Wang, N. Bhowmik, and T. Breckon - Durham, UK
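The step of turning a 2D proposal plus its depth values into a 3D point cloud can be sketched as follows. This is a minimal illustration, assuming a pinhole camera model; the function name `depth_to_points`, the intrinsics `fx`, `fy`, `cx`, `cy`, and the `box` format are hypothetical and are not taken from the cited paper.

```python
def depth_to_points(depth, fx, fy, cx, cy, box=None):
    """Back-project a depth map into 3D points (assumed pinhole camera model).

    depth: list of rows, each a list of depth values in metres.
    fx, fy, cx, cy: assumed camera intrinsics (focal lengths, principal point).
    box: optional 2D proposal (u_min, v_min, u_max, v_max), inclusive,
         standing in for the output of a 2D object detector.
    """
    height, width = len(depth), len(depth[0])
    u_min, v_min, u_max, v_max = box if box else (0, 0, width - 1, height - 1)
    points = []
    for v in range(v_min, v_max + 1):
        for u in range(u_min, u_max + 1):
            z = depth[v][u]
            if z <= 0:  # zero or negative depth marks a missing measurement
                continue
            # Pixel (u, v) at depth z maps to camera-frame coordinates (x, y, z).
            points.append(((u - cx) * z / fx, (v - cy) * z / fy, z))
    return points
```

Only pixels inside the proposal are back-projected, so the resulting point set could then be handed to a point-based model such as PointNet.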

Schematic diagram of the Deep 3D Convolutional Neural Network and FEATURE-Softmax Classifier models.

(a) Deep 3D Convolutional Neural Network. The feature extraction stage includes 3D convolutional and Pooling / Sub-sampling: Max, Mean layers. 3D filters in the 3D convolutional layers search for recurrent spatial patterns that best capture the local biochemical features to separate the 20 amino acid microenvironments. Pooling / Sub-sampling: Max, Mean layers perform down-sampling to the input to increase translational invariances of the network. By following the 3DCNN and 3D Pooling / Sub-sampling: Max, Mean layers with fully connected layers, the pooled filter responses of all filters across all positions in the protein box can be integrated. The integrated information is then fed to the Softmax classifier layer to calculate class probabilities and to make the final predictions. Prediction error drives parameter updates of the trainable parameters in the classifier, fully connected layers, and convolutional filters to learn the best feature for the optimal performances.

(b) The FEATURE Softmax Classifier. The FEATURE Softmax model begins with an input layer, which takes in FEATURE vectors, followed by two fully-connected layers, and ends with a Softmax classifier layer. In this case, the input layer is equivalent to the feature extraction stage. In contrast to 3DCNN, the prediction error only drives parameter learning of the fully connected layers and classifier. The input feature is fixed during the whole training process.
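The building blocks named in the caption (3D convolution, 3D max pooling, and a Softmax layer) can be illustrated with a small pure-Python sketch. It assumes a single-channel volume, a cubic kernel, no padding, and stride 1 for the convolution; it is a toy illustration of the operations, not the architecture from the figure, and all function names are hypothetical.

```python
import math


def conv3d(volume, kernel):
    """Valid 3D cross-correlation of a D x H x W volume with a k x k x k kernel."""
    k = len(kernel)
    d, h, w = len(volume), len(volume[0]), len(volume[0][0])
    out = []
    for z in range(d - k + 1):
        plane = []
        for y in range(h - k + 1):
            row = []
            for x in range(w - k + 1):
                acc = 0.0
                # Weighted sum over the k x k x k neighbourhood at (z, y, x).
                for dz in range(k):
                    for dy in range(k):
                        for dx in range(k):
                            acc += volume[z + dz][y + dy][x + dx] * kernel[dz][dy][dx]
                row.append(acc)
            plane.append(row)
        out.append(plane)
    return out


def max_pool3d(volume, size=2):
    """Non-overlapping 3D max pooling with a size x size x size window."""
    d, h, w = len(volume), len(volume[0]), len(volume[0][0])
    return [
        [
            [
                max(
                    volume[z + dz][y + dy][x + dx]
                    for dz in range(size)
                    for dy in range(size)
                    for dx in range(size)
                )
                for x in range(0, w - size + 1, size)
            ]
            for y in range(0, h - size + 1, size)
        ]
        for z in range(0, d - size + 1, size)
    ]


def softmax(scores):
    """Convert raw class scores into probabilities that sum to 1."""
    peak = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]
```

In a trained network the kernel values would be learned from the prediction error rather than fixed by hand, and many filters would run in parallel before the fully connected layers.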

LiDAR

- VoxelNet: End-to-End Learning for Point Cloud Based 3D Object Detection | Yin Zhou and Oncel Tuzel

- VoxNet: A 3D Convolutional Neural Network for real-time object recognition | Daniel Maturana and Sebastian Scherer

LiDAR involves firing rapid laser pulses at objects and measuring how much time they take to return to the sensor. This is similar to the "time of flight" technology for RGB-D cameras we described above, but LiDAR has significantly longer range, captures many more points, and is much more robust to interference from other light sources. Most 3D LiDAR sensors today have several (up to 64) beams aligned vertically, spinning rapidly to see in all directions around the sensor. These are the sensors used in most self-driving cars because of their accuracy, range, and robustness, but the problem with LiDAR sensors is that they're often large, heavy, and extremely expensive (the 64-beam sensor that most self-driving cars use costs $75,000!). As a result, many companies are currently trying to develop cheaper “solid state LiDAR” systems that can sense in 3D without having to spin. Beyond the pixel plane: sensing and learning in 3D | Mihir Garimella, Prathik Naidu - Stanford
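The time-of-flight principle reduces to a short calculation: the pulse travels to the object and back, so the range is half the round-trip time multiplied by the speed of light. The sketch below also converts one return into a 3D point, assuming the beam direction is given as an azimuth and elevation angle; the function names and the angle convention are illustrative assumptions, not a specific sensor's API.

```python
import math

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def range_from_round_trip(seconds):
    """Distance to the target given the pulse's round-trip time."""
    return SPEED_OF_LIGHT_M_PER_S * seconds / 2.0


def beam_return_to_point(seconds, azimuth_deg, elevation_deg):
    """Convert one return into (x, y, z) in the sensor frame.

    Assumed convention: azimuth is measured in the horizontal plane from the
    x axis, elevation is measured upwards from that plane.
    """
    r = range_from_round_trip(seconds)
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    return (
        r * math.cos(el) * math.cos(az),
        r * math.cos(el) * math.sin(az),
        r * math.sin(el),
    )
```

A round trip of one microsecond corresponds to a target roughly 150 m away, which gives a sense of the timing precision such sensors need.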

VoxelNet is an end-to-end 3D object detector specially designed for LiDAR data. It consists of three modules:

- feature learning network (subdivide the Point Cloud into many subvolumes/voxels, feature engineering + fully connected neural network),

- convolutional middle layer (3D convolution applied to the stacked voxel feature volumes, each subvolume/voxel is a feature vector)

- and region proposal networks.
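The first module's subdivision of the Point Cloud into voxels can be sketched as below. This is a simplified illustration: points are grouped by voxel index and each point is augmented with its offset from the voxel centroid as a hand-built feature. The learned fully connected layers, the convolutional middle layer, and the region proposal network are not shown, and the function names and `voxel_size` parameter are hypothetical.

```python
import math
from collections import defaultdict


def voxelize(points, voxel_size):
    """Group (x, y, z) points by the integer index of the voxel containing them."""
    voxels = defaultdict(list)
    for x, y, z in points:
        key = (
            math.floor(x / voxel_size),
            math.floor(y / voxel_size),
            math.floor(z / voxel_size),
        )
        voxels[key].append((x, y, z))
    return dict(voxels)


def voxel_features(voxels):
    """Augment every point with its offset from the centroid of its voxel."""
    features = {}
    for key, pts in voxels.items():
        n = len(pts)
        cx = sum(p[0] for p in pts) / n
        cy = sum(p[1] for p in pts) / n
        cz = sum(p[2] for p in pts) / n
        features[key] = [(x, y, z, x - cx, y - cy, z - cz) for x, y, z in pts]
    return features
```

Only occupied voxels appear in the dictionary, which matters for LiDAR data because most of the volume around the sensor is empty.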

VoxNet is a more generic model, able to handle different types of 3D data including LiDAR Point Cloud, CAD, and RGBD data. Qi et al. improved the performance of VoxNet by introducing auxiliary subvolume supervision to alleviate overfitting. On the Evaluation of Prohibited Item Classification and Detection in Volumetric 3D Computed Tomography Baggage Security Screening Imagery | Q. Wang, N. Bhowmik, and T. Breckon - Durham, UK

MVCNN

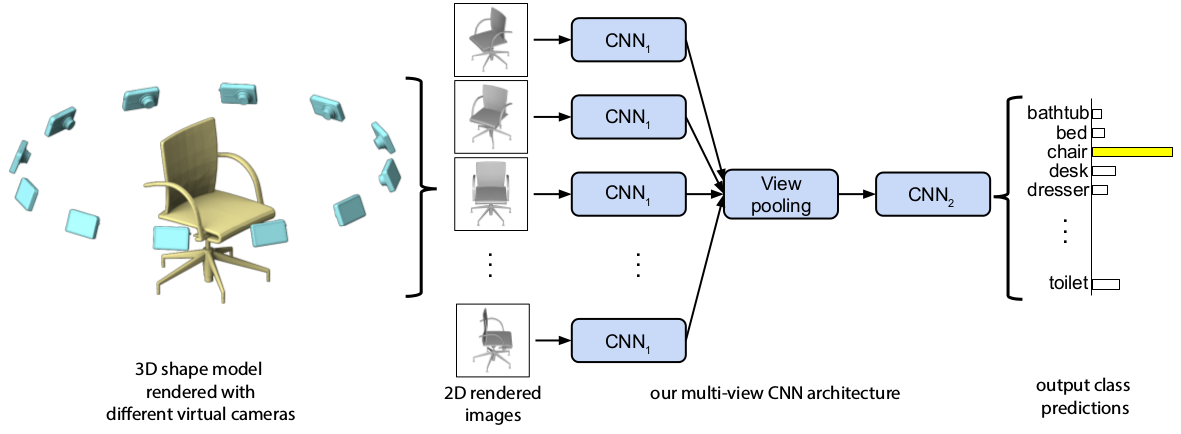

A longstanding question in computer vision concerns the representation of 3D shapes for recognition: should 3D shapes be represented with descriptors operating on their native 3D formats, such as voxel grid or polygon mesh, or can they be effectively represented with view-based descriptors? We address this question in the context of learning to recognize 3D shapes from a collection of their rendered views on 2D images. We first present a standard CNN architecture trained to recognize the shapes’ rendered views independently of each other, and show that a 3D shape can be recognized even from a single view at an accuracy far higher than using state-of-the-art 3D shape descriptors. Recognition rates further increase when multiple views of the shapes are provided. In addition, we present a novel CNN architecture that combines information from multiple views of a 3D shape into a single and compact shape descriptor offering even better recognition performance. The same architecture can be applied to accurately recognize human hand-drawn sketches of shapes. We conclude that a collection of 2D views can be highly informative for 3D shape recognition and is amenable to emerging CNN architectures and their derivatives. Multi-view Convolutional Neural Networks for 3D Shape Recognition | H. Su, S. Maji, E. Kalogerakis, and E. Learned-Miller ... and MVCNN with PyTorch
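Combining per-view features into one compact shape descriptor can be sketched as a pooling step across views. The example assumes element-wise max as the aggregation and assumes each rendered view has already been turned into a fixed-length feature vector by a 2D CNN; the function name `view_pool` is hypothetical.

```python
def view_pool(view_features):
    """Merge per-view feature vectors into one descriptor by element-wise max."""
    if not view_features:
        raise ValueError("at least one view is required")
    length = len(view_features[0])
    if any(len(f) != length for f in view_features):
        raise ValueError("all views must have the same feature length")
    # zip(*...) walks the vectors column by column, one feature index at a time.
    return [max(column) for column in zip(*view_features)]
```

Because the maximum does not depend on the order of its inputs, the resulting descriptor is the same regardless of the order in which the views are supplied.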

Quadtrees and Octrees

- O-CNN: Octree-based convolutional neural networks for 3D shape analysis | P. Wang, Y. Liu, Y. Guo, C. Sun, and X. Tong

- O-CNN | P. Wang, Y. Liu, Y. Guo, C. Sun, and X. Tong | GitHub repository contains the implementation of O-CNN and Adaptive O-CNN ...built upon the Caffe framework; it supports octree-based convolution, deconvolution, pooling, and unpooling.

- OctNet: Learning Deep 3D Representations at High Resolutions | G. Riegler, A. Ulusoy, and A. Geiger - a representation for deep learning with sparse 3D data. In contrast to existing models, our representation enables 3D convolutional networks which are both deep and high resolution. Towards this goal, we exploit the sparsity in the input data to hierarchically partition the space using a set of unbalanced octrees where each leaf node stores a pooled feature representation. This allows to focus memory allocation and computation to the relevant dense regions and enables deeper networks without compromising resolution. OctNet uses efficient space partitioning structures (i.e. octrees) to reduce memory and compute requirements of 3D convolutional neural networks, thereby enabling deep learning at high resolutions.
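The idea of partitioning space hierarchically and spending memory only on occupied regions can be sketched with a single, simple octree. This is an illustration under stated assumptions, not the O-CNN or OctNet data structure: it builds one tree rather than a set of them, stores the point centroid as a stand-in for the pooled feature in each leaf, and uses hypothetical names throughout.

```python
def build_octree(points, origin, size, max_depth, depth=0):
    """Recursively split a cube into octants, descending only where points exist.

    Returns None for an empty cell, a leaf dict holding a pooled value
    (here the centroid), or an internal node with eight children.
    """
    if not points:
        return None  # empty space costs nothing
    if depth == max_depth or len(points) == 1:
        n = len(points)
        centroid = tuple(sum(p[i] for p in points) / n for i in range(3))
        return {"leaf": True, "count": n, "centroid": centroid}
    half = size / 2.0
    buckets = [[] for _ in range(8)]
    for p in points:
        # Bit i of the octant index is set when the point lies in the upper half on axis i.
        idx = sum(1 << i for i in range(3) if p[i] >= origin[i] + half)
        buckets[idx].append(p)
    children = []
    for idx, bucket in enumerate(buckets):
        child_origin = tuple(
            origin[i] + (half if (idx >> i) & 1 else 0.0) for i in range(3)
        )
        children.append(build_octree(bucket, child_origin, half, max_depth, depth + 1))
    return {"leaf": False, "children": children}


def count_leaves(node):
    """Number of occupied leaf cells in the tree."""
    if node is None:
        return 0
    if node["leaf"]:
        return 1
    return sum(count_leaves(child) for child in node["children"])
```

The tree is unbalanced by construction: sparse regions terminate early, while dense regions are refined down to `max_depth`.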

3D Models from 2D Images

ChatGPT & Blender

3D Printing

YouTube ... Quora ...Google search ...Google News ...Bing News

Generative AI & 3D Printing

- Generative AI ... Conversational AI ... OpenAI's ChatGPT ... Perplexity ... Microsoft's Bing ... You ...Google's Bard ... Baidu's Ernie

- How Can ChatGPT Be Used for 3D Printing | Andrew Sink - 3DWithUs

- Blender ... free and open-source 3D creation suite that supports the entirety of the 3D pipeline—modeling, sculpting, rigging, 3D and 2D animation, simulation, rendering, compositing, motion tracking and video editing

- Generating 3d Models From Text With Nvidia’s Magic3D | Ada Shaikhnag - 3D Printing Industry ... combining 3D printing and generative AI

While ChatGPT isn’t quite ready to create a functional model of a complex engine, it is capable of making simple shapes and also creating programs that can be used to make 3D models.
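As an illustration of the kind of short program meant here, the following sketch writes a cube as an ASCII STL file, a format commonly accepted by 3D printing slicers. It is a hand-written example, not output from ChatGPT; the function name `cube_stl` and its parameters are hypothetical.

```python
def cube_stl(size=10.0, name="cube"):
    """Return an ASCII STL string for an axis-aligned cube with a corner at the origin."""
    s = float(size)
    v = [
        (0.0, 0.0, 0.0), (s, 0.0, 0.0), (s, s, 0.0), (0.0, s, 0.0),
        (0.0, 0.0, s), (s, 0.0, s), (s, s, s), (0.0, s, s),
    ]
    # Each face: outward normal and four corner indices, counter-clockwise seen from outside.
    faces = [
        ((0, 0, -1), (0, 3, 2, 1)),  # bottom
        ((0, 0, 1), (4, 5, 6, 7)),   # top
        ((0, -1, 0), (0, 1, 5, 4)),  # front
        ((0, 1, 0), (3, 7, 6, 2)),   # back
        ((-1, 0, 0), (0, 4, 7, 3)),  # left
        ((1, 0, 0), (1, 2, 6, 5)),   # right
    ]
    lines = [f"solid {name}"]
    for normal, (a, b, c, d) in faces:
        # Split each square face into two triangles that keep the same winding.
        for tri in ((a, b, c), (a, c, d)):
            lines.append("  facet normal {} {} {}".format(*normal))
            lines.append("    outer loop")
            for i in tri:
                lines.append("      vertex {} {} {}".format(*v[i]))
            lines.append("    endloop")
            lines.append("  endfacet")
    lines.append(f"endsolid {name}")
    return "\n".join(lines) + "\n"


def write_cube(path, size=10.0):
    """Write the cube to an .stl file at the given path."""
    with open(path, "w", encoding="ascii") as handle:
        handle.write(cube_stl(size))
```

Calling `write_cube("cube.stl", 20.0)` would produce a 20-unit cube; STL files carry no units, so the slicer decides how to interpret them (typically as millimetres).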