Difference between revisions of "Sequence to Sequence (Seq2Seq)"


| (47 intermediate revisions by the same user not shown) | |||

| Line 2: | Line 2: | ||

|title=PRIMO.ai | |title=PRIMO.ai | ||

|titlemode=append | |titlemode=append | ||

| − | |keywords=artificial, intelligence, machine, learning, models | + | |keywords=ChatGPT, artificial, intelligence, machine, learning, NLP, NLG, NLC, NLU, models, data, singularity, moonshot, Sentience, AGI, Emergence, Moonshot, Explainable, TensorFlow, Google, Nvidia, Microsoft, Azure, Amazon, AWS, Hugging Face, OpenAI, Tensorflow, OpenAI, Google, Nvidia, Microsoft, Azure, Amazon, AWS, Meta, LLM, metaverse, assistants, agents, digital twin, IoT, Transhumanism, Immersive Reality, Generative AI, Conversational AI, Perplexity, Bing, You, Bard, Ernie, prompt Engineering LangChain, Video/Image, Vision, End-to-End Speech, Synthesize Speech, Speech Recognition, Stanford, MIT |description=Helpful resources for your journey with artificial intelligence; videos, articles, techniques, courses, profiles, and tools |

| − | |description=Helpful resources for your journey with artificial intelligence; videos, articles, techniques, courses, profiles, and tools | + | |


}} | }} | ||

| − | [ | + | [https://www.youtube.com/results?search_query=sequence+Seq2seq+ai YouTube] |

| − | [ | + | [https://www.quora.com/search?q=sequence%20Seq2seq%20AI ... Quora] |

| + | [https://www.google.com/search?q=sequence+Seq2seq+ai ...Google search] | ||

| + | [https://news.google.com/search?q=sequence+Seq2seq+ai ...Google News] | ||

| + | [https://www.bing.com/news/search?q=sequence+Seq2seq+ai&qft=interval%3d%228%22 ...Bing News] | ||

| − | * [[Natural Language Processing (NLP)]] | + | * [[State Space Model (SSM)]] ... [[Mamba]] ... [[Sequence to Sequence (Seq2Seq)]] ... [[Recurrent Neural Network (RNN)]] ... [[(Deep) Convolutional Neural Network (DCNN/CNN)|Convolutional Neural Network (CNN)]] |

| + | * [[Large Language Model (LLM)]] ... [[Large Language Model (LLM)#Multimodal|Multimodal]] ... [[Foundation Models (FM)]] ... [[Generative Pre-trained Transformer (GPT)|Generative Pre-trained]] ... [[Transformer]] ... [[Attention]] ... [[Generative Adversarial Network (GAN)|GAN]] ... [[Bidirectional Encoder Representations from Transformers (BERT)|BERT]] | ||

| + | * [[Natural Language Processing (NLP)]] ... [[Natural Language Generation (NLG)|Generation (NLG)]] ... [[Natural Language Classification (NLC)|Classification (NLC)]] ... [[Natural Language Processing (NLP)#Natural Language Understanding (NLU)|Understanding (NLU)]] ... [[Language Translation|Translation]] ... [[Summarization]] ... [[Sentiment Analysis|Sentiment]] ... [[Natural Language Tools & Services|Tools]] | ||

* [http://github.com/NVIDIA/OpenSeq2Seq Open Seq2Seq | NVIDIA] | * [http://github.com/NVIDIA/OpenSeq2Seq Open Seq2Seq | NVIDIA] | ||

| − | * | + | * [http://jalammar.github.io/visualizing-neural-machine-translation-mechanics-of-seq2seq-models-with-attention/ Visualizing A Neural Machine Translation Model (Mechanics of Seq2seq Models With Attention) | Jay Alammar] |

* [[Autoencoder (AE) / Encoder-Decoder]] | * [[Autoencoder (AE) / Encoder-Decoder]] | ||

| − | * | + | * Embedding - projecting an input into another more convenient representation space; e.g. word represented by a vector |

| − | + | ** [[Embedding]] ... [[Fine-tuning]] ... [[Retrieval-Augmented Generation (RAG)|RAG]] ... [[Agents#AI-Powered Search|Search]] ... [[Clustering]] ... [[Recommendation]] ... [[Anomaly Detection]] ... [[Classification]] ... [[Dimensional Reduction]]. [[...find outliers]] | |

| − | |||

* [[NLP Keras model in browser with TensorFlow.js]] | * [[NLP Keras model in browser with TensorFlow.js]] | ||

| + | * [[Diagrams for Business Analysis|LOOKING FOR SEQUENCE DIAGRAMS]] | ||

* [http://towardsdatascience.com/nlp-sequence-to-sequence-networks-part-1-processing-text-data-d141a5643b72 NLP - Sequence to Sequence Networks - Part 1 - Processing text data | Mohammed Ma'amari - Towards Data Science] | * [http://towardsdatascience.com/nlp-sequence-to-sequence-networks-part-1-processing-text-data-d141a5643b72 NLP - Sequence to Sequence Networks - Part 1 - Processing text data | Mohammed Ma'amari - Towards Data Science] | ||

| − | * [ | + | * [https://towardsdatascience.com/understanding-encoder-decoder-sequence-to-sequence-model-679e04af4346 Understanding Encoder-Decoder Sequence to Sequence Model | Simeon Kostadinov - Towards Data Science] |

| − | * [[End-to-End Speech]] | + | * [[End-to-End Speech]] ... [[Synthesize Speech]] ... [[Speech Recognition]] ... [[Music]] |

| − | * [[Generative]] | + | * [[What is Artificial Intelligence (AI)? | Artificial Intelligence (AI)]] ... [[Generative AI]] ... [[Machine Learning (ML)]] ... [[Deep Learning]] ... [[Neural Network]] ... [[Reinforcement Learning (RL)|Reinforcement]] ... [[Learning Techniques]] |

| + | * [[Conversational AI]] ... [[ChatGPT]] | [[OpenAI]] ... [[Bing/Copilot]] | [[Microsoft]] ... [[Gemini]] | [[Google]] ... [[Claude]] | [[Anthropic]] ... [[Perplexity]] ... [[You]] ... [[phind]] ... [[Ernie]] | [[Baidu]] | ||

| + | ** [https://www.technologyreview.com/2023/02/08/1068068/chatgpt-is-everywhere-heres-where-it-came-from/ ChatGPT is everywhere. Here’s where it came from | Will Douglas Heaven - MIT Technology Review] | ||

| + | *** [[ChatGPT]] | [[OpenAI]] | ||

| + | |||

| + | Seq2Seq is a general-purpose [[Autoencoder (AE) / Encoder-Decoder|encoder-decoder]] that can be used for machine translation, text [[summarization]], [[Conversational AI|conversational modeling]], [[Video/Image|image captioning]], interpreting dialects of software code, and more. The encoder processes each item in the input sequence and compiles the information it captures into a vector (called the [[context]]). After processing the entire input sequence, the encoder sends the [[context]] over to the decoder, which begins producing the output sequence item by item. In the case of [[Language Translation|machine translation]], the [[context]] is a vector (basically an array of numbers). The encoder and decoder both tend to be [[Recurrent Neural Network (RNN)|recurrent neural networks (RNNs)]]. [http://jalammar.github.io/visualizing-neural-machine-translation-mechanics-of-seq2seq-models-with-attention/ Visualizing A Neural Machine Translation Model (Mechanics of Seq2seq Models With Attention) | Jay Alammar] | ||

| − | |||

| − | http://3.bp.blogspot.com/-3Pbj_dvt0Vo/V-qe-Nl6P5I/AAAAAAAABQc/z0_6WtVWtvARtMk0i9_AtLeyyGyV6AI4wCLcB/s1600/nmt-model-fast.gif | + | <img src="http://3.bp.blogspot.com/-3Pbj_dvt0Vo/V-qe-Nl6P5I/AAAAAAAABQc/z0_6WtVWtvARtMk0i9_AtLeyyGyV6AI4wCLcB/s1600/nmt-model-fast.gif" width="800"> |

[http://google.github.io/seq2seq/ Seq2seq | GitHub] | [http://google.github.io/seq2seq/ Seq2seq | GitHub] | ||

| Line 31: | Line 49: | ||

| − | http://cdn-images-1.medium.com/max/1080/1*yG2htcHJF9h0sohcZbBEkg.png | + | <img src="http://cdn-images-1.medium.com/max/1080/1*yG2htcHJF9h0sohcZbBEkg.png" width="800"> |

<youtube>G5RY_SUJih4</youtube> | <youtube>G5RY_SUJih4</youtube> | ||

| Line 41: | Line 59: | ||

<youtube>oF0Rboc4IJw</youtube> | <youtube>oF0Rboc4IJw</youtube> | ||

| − | = Retrieval Augmented Generation (RAG) | + | = <span id="Retrieval Augmented Generation (RAG)"></span>Retrieval Augmented Generation (RAG) = |

| − | + | ||

| + | * [[Meta|Facebook]] | ||

| + | * [http://ai.facebook.com/blog/retrieval-augmented-generation-streamlining-the-creation-of-intelligent-natural-language-processing-models/ Retrieval Augmented Generation: Streamlining the creation of intelligent natural language processing models] [[Meta|Facebook]] AI | ||

| + | * [http://syncedreview.com/2020/09/29/facebooks-flexible-rag-language-model-achieves-sota-results-on-open-domain-qa/ Facebook’s Flexible ‘RAG’ Language Model Achieves SOTA Results on Open-Domain QA | Synced] | ||

| − | + | Building a model that researches and [[context]]ualizes is more challenging, but it's essential for future advancements. We recently made substantial progress in this realm with our Retrieval Augmented Generation (RAG) architecture, an end-to-end differentiable model that combines an information retrieval component ([[Meta|Facebook]] AI’s dense-passage retrieval system) with a seq2seq generator (our Bidirectional and Auto-Regressive Transformers [BART] model). RAG can be fine-tuned on knowledge-intensive downstream tasks to achieve state-of-the-art results compared with even the largest pretrained seq2seq language models. And unlike these pretrained models, RAG’s internal knowledge can be easily altered or even supplemented on the fly, enabling researchers and engineers to control what RAG knows and doesn’t know without wasting time or compute power retraining the entire model. |

| + | <img src="http://i0.wp.com/syncedreview.com/wp-content/uploads/2020/09/image-146.png" width="800"> | ||

| + | |||

| + | {|<!-- T --> | ||

| + | | valign="top" | | ||

| + | {| class="wikitable" style="width: 550px;" | ||

| + | || | ||

<youtube>JGpmQvlYRdU</youtube> | <youtube>JGpmQvlYRdU</youtube> | ||

| + | <b>Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks, with Patrick Lewis, [[Meta|Facebook]] AI | ||

| + | </b><br>Patrick Lewis with [[Meta|Facebook]] AI Research and University College London presented on "Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks" in a natural language processing multi-meetup program on July 2, 2020. | ||

| + | |} | ||

| + | |<!-- M --> | ||

| + | | valign="top" | | ||

| + | {| class="wikitable" style="width: 550px;" | ||

| + | || | ||

<youtube>E2AcqHuqFuk</youtube> | <youtube>E2AcqHuqFuk</youtube> | ||

| + | <b>Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks | NLP journal club | ||

| + | </b><br>Nikola Nikolov Link: https://arxiv.org/pdf/2005.11401.pdf | ||

| + | |||

| + | Abstract: Large pre-trained language models have been shown to store factual knowledge in their parameters, and achieve state-of-the-art results when fine-tuned on downstream NLP tasks. However, their ability to access and precisely manipulate knowledge is still limited, and hence on knowledge-intensive tasks, their performance lags behind task-specific architectures. Additionally, providing provenance for their decisions and updating their world knowledge remain open research problems. Pre-trained models with a differentiable access mechanism to explicit non-parametric [[memory]] can overcome this issue, but have so far been only investigated for extractive downstream tasks. We explore a general-purpose [[fine-tuning]] recipe for retrieval-augmented generation (RAG) -- models which combine pre-trained parametric and non-parametric [[memory]] for language generation. We introduce RAG models where the parametric [[memory]] is a pre-trained seq2seq model and the non-parametric [[memory]] is a dense vector index of Wikipedia, accessed with a pre-trained neural retriever. We compare two RAG formulations, one which conditions on the same retrieved passages across the whole generated sequence, the other can use different passages per token. We fine-tune and evaluate our models on a wide range of knowledge-intensive NLP tasks and set the state-of-the-art on three open domain QA tasks, outperforming parametric seq2seq models and task-specific retrieve-and-extract architectures. For language generation tasks, we find that RAG models generate more specific, diverse and factual language than a state-of-the-art parametric-only seq2seq baseline. | ||

| + | |} | ||

| + | |}<!-- B --> | ||

Latest revision as of 10:26, 28 May 2025


- State Space Model (SSM) ... Mamba ... Sequence to Sequence (Seq2Seq) ... Recurrent Neural Network (RNN) ... Convolutional Neural Network (CNN)

- Large Language Model (LLM) ... Multimodal ... Foundation Models (FM) ... Generative Pre-trained ... Transformer ... Attention ... GAN ... BERT

- Natural Language Processing (NLP) ... Generation (NLG) ... Classification (NLC) ... Understanding (NLU) ... Translation ... Summarization ... Sentiment ... Tools

- Open Seq2Seq | NVIDIA

- Visualizing A Neural Machine Translation Model (Mechanics of Seq2seq Models With Attention) | Jay Alammar

- Autoencoder (AE) / Encoder-Decoder

- Embedding - projecting an input into another more convenient representation space; e.g. word represented by a vector

- Embedding ... Fine-tuning ... RAG ... Search ... Clustering ... Recommendation ... Anomaly Detection ... Classification ... Dimensional Reduction. ...find outliers

- NLP Keras model in browser with TensorFlow.js

- LOOKING FOR SEQUENCE DIAGRAMS

- NLP - Sequence to Sequence Networks - Part 1 - Processing text data | Mohammed Ma'amari - Towards Data Science

- Understanding Encoder-Decoder Sequence to Sequence Model | Simeon Kostadinov - Towards Data Science

- End-to-End Speech ... Synthesize Speech ... Speech Recognition ... Music

- Artificial Intelligence (AI) ... Generative AI ... Machine Learning (ML) ... Deep Learning ... Neural Network ... Reinforcement ... Learning Techniques

- Conversational AI ... ChatGPT | OpenAI ... Bing/Copilot | Microsoft ... Gemini | Google ... Claude | Anthropic ... Perplexity ... You ... phind ... Ernie | Baidu

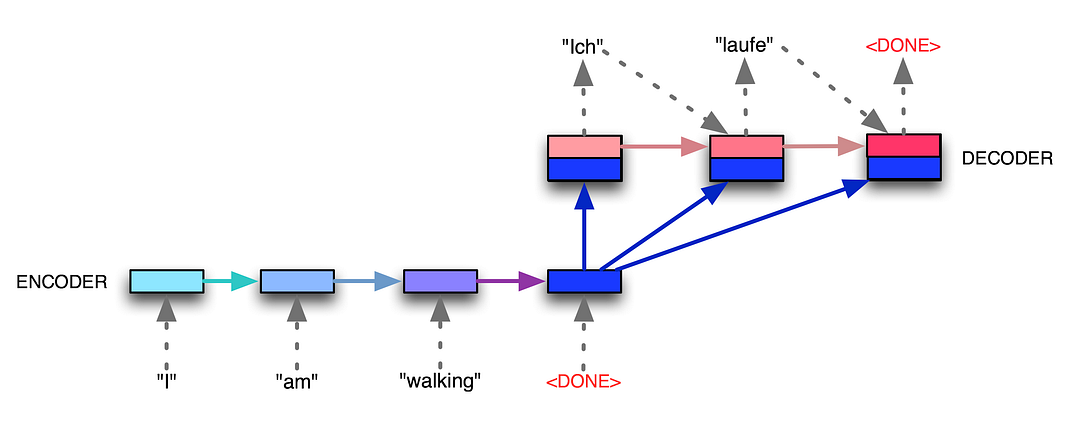

Seq2Seq is a general-purpose encoder-decoder that can be used for machine translation, text summarization, conversational modeling, image captioning, interpreting dialects of software code, and more. The encoder processes each item in the input sequence and compiles the information it captures into a vector (called the context). After processing the entire input sequence, the encoder sends the context over to the decoder, which begins producing the output sequence item by item. In the case of machine translation, the context is a vector (basically an array of numbers). The encoder and decoder both tend to be recurrent neural networks (RNNs). Visualizing A Neural Machine Translation Model (Mechanics of Seq2seq Models With Attention) | Jay Alammar
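
The encoder half of this description can be sketched in a few lines of plain Python. This is a minimal illustration, not the implementation behind any of the linked projects: the weights are random and untrained, and the names `rnn_step`, `encode`, `VOCAB`, `EMBED`, and `HIDDEN` are hypothetical. It only shows that inputs of different lengths are compiled into a context vector of the same fixed size.

```python
import math
import random

def make_matrix(rows, cols, rng):
    """Small random weight matrix (untrained, for illustration only)."""
    return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]

def rnn_step(state, x, w_state, w_input):
    """One vanilla RNN update: new_state = tanh(W_state @ state + W_input @ x)."""
    return [
        math.tanh(
            sum(ws * s for ws, s in zip(w_state[i], state))
            + sum(wx * xi for wx, xi in zip(w_input[i], x))
        )
        for i in range(len(state))
    ]

def encode(token_ids, embeddings, w_state, w_input):
    """Process every input item in order; the final state is the context."""
    state = [0.0] * len(w_state)
    for tok in token_ids:
        state = rnn_step(state, embeddings[tok], w_state, w_input)
    return state

rng = random.Random(0)
VOCAB, EMBED, HIDDEN = 10, 4, 6  # hypothetical sizes
embeddings = make_matrix(VOCAB, EMBED, rng)
w_state = make_matrix(HIDDEN, HIDDEN, rng)
w_input = make_matrix(HIDDEN, EMBED, rng)

short_context = encode([1, 2], embeddings, w_state, w_input)
long_context = encode([3, 1, 4, 1, 5, 9, 2, 6], embeddings, w_state, w_input)
print(len(short_context), len(long_context))
```

Both contexts have length `HIDDEN`, whether the input had two items or eight. A trained system would learn the weights and would typically use an LSTM or GRU cell instead of this plain tanh update.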

We essentially have two different recurrent neural networks tied together here — the encoder RNN (bottom left boxes) listens to the input tokens until it gets a special <DONE> token, and then the decoder RNN (top right boxes) takes over and starts generating tokens, also finishing with its own <DONE> token. The encoder RNN evolves its internal state (depicted by light blue changing to dark blue while the English sentence tokens come in), and then once the <DONE> token arrives, we take the final encoder state (the dark blue box) and pass it, unchanged and repeatedly, into the decoder RNN along with every single generated German token. The decoder RNN also has its own dynamic internal state, going from light red to dark red. Voila! Variable-length input, variable-length output, from a fixed-size architecture. seq2seq: the clown car of deep learning | Dev Nag - Medium
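
The decoding loop described above can be sketched as follows, again in plain Python with random, untrained weights. All names here (`decode`, `DONE`, the weight matrices, the stand-in `context` values) are assumptions made for illustration. The point is the control flow: the same context vector is fed in unchanged at every step, each generated token is fed back in, and generation stops at the `<DONE>` token or at a length cap.

```python
import math
import random

DONE = 0              # hypothetical id for the special <DONE> token
VOCAB, HIDDEN = 8, 5  # hypothetical sizes

def make_matrix(rows, cols, rng):
    """Small random weight matrix (untrained, for illustration only)."""
    return [[rng.uniform(-1.0, 1.0) for _ in range(cols)] for _ in range(rows)]

def matvec(matrix, vector):
    """Matrix-vector product on plain Python lists."""
    return [sum(w * v for w, v in zip(row, vector)) for row in matrix]

def decode(context, weights, max_len=20):
    """Greedy decoding: emit one token per step until <DONE> or max_len."""
    w_state, w_context, w_prev, w_out = weights
    state = [0.0] * HIDDEN
    prev_token = DONE  # assumption: <DONE> doubles as the start symbol
    output = []
    for _ in range(max_len):
        one_hot = [1.0 if i == prev_token else 0.0 for i in range(VOCAB)]
        # The context is passed in unchanged at every single step.
        parts = zip(
            matvec(w_state, state),
            matvec(w_context, context),
            matvec(w_prev, one_hot),
        )
        state = [math.tanh(a + b + c) for a, b, c in parts]
        scores = matvec(w_out, state)
        prev_token = max(range(VOCAB), key=lambda i: scores[i])
        output.append(prev_token)
        if prev_token == DONE:
            break
    return output

rng = random.Random(1)
weights = (
    make_matrix(HIDDEN, HIDDEN, rng),  # w_state: decoder state -> state
    make_matrix(HIDDEN, HIDDEN, rng),  # w_context: encoder context -> state
    make_matrix(HIDDEN, VOCAB, rng),   # w_prev: previous token -> state
    make_matrix(VOCAB, HIDDEN, rng),   # w_out: state -> token scores
)
context = [0.2, -0.4, 0.1, 0.7, -0.3]  # stand-in for the final encoder state
tokens = decode(context, weights)
print(tokens)
```

Because the weights are untrained, the printed token ids are meaningless. What carries over to a real model is that the output length is decided by the decoder itself, not fixed by the architecture.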

Retrieval Augmented Generation (RAG)

- Retrieval Augmented Generation: Streamlining the creation of intelligent natural language processing models Facebook AI

- Facebook’s Flexible ‘RAG’ Language Model Achieves SOTA Results on Open-Domain QA | Synced

Building a model that researches and contextualizes is more challenging, but it's essential for future advancements. We recently made substantial progress in this realm with our Retrieval Augmented Generation (RAG) architecture, an end-to-end differentiable model that combines an information retrieval component (Facebook AI’s dense-passage retrieval system) with a seq2seq generator (our Bidirectional and Auto-Regressive Transformers [BART] model). RAG can be fine-tuned on knowledge-intensive downstream tasks to achieve state-of-the-art results compared with even the largest pretrained seq2seq language models. And unlike these pretrained models, RAG’s internal knowledge can be easily altered or even supplemented on the fly, enabling researchers and engineers to control what RAG knows and doesn’t know without wasting time or compute power retraining the entire model.
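
The retrieve-then-generate flow, and the claim that knowledge can be changed without retraining, can be illustrated with a toy sketch. This is not Facebook AI's code: `embed` is a bag-of-words stand-in for the dense-passage retriever, `generate` is a placeholder for the BART generator, and every name and passage below is hypothetical.

```python
import math
import re
from collections import Counter

def embed(text):
    """Toy stand-in for a dense encoder: L2-normalised word counts."""
    counts = Counter(re.findall(r"[a-z0-9]+", text.lower()))
    norm = math.sqrt(sum(c * c for c in counts.values())) or 1.0
    return {word: c / norm for word, c in counts.items()}

def score(query_vec, passage_vec):
    """Inner product between two sparse vectors."""
    return sum(v * passage_vec.get(word, 0.0) for word, v in query_vec.items())

def retrieve(query, passages, k=1):
    """Return the k passages with the highest inner-product score."""
    q = embed(query)
    ranked = sorted(passages, key=lambda p: score(q, embed(p)), reverse=True)
    return ranked[:k]

def generate(query, retrieved):
    """Placeholder generator: a real RAG model would condition a seq2seq
    model on the query plus the retrieved passages."""
    return f"Q: {query} | evidence: {' / '.join(retrieved)}"

passages = [
    "The capital of France is Paris.",
    "Seq2seq models map an input sequence to an output sequence.",
]
query = "What is the capital of Australia?"

before = generate(query, retrieve(query, passages))

# Supplement the knowledge on the fly: edit the index, retrain nothing.
passages.append("The capital of Australia is Canberra.")
after = generate(query, retrieve(query, passages))

print(before)
print(after)
```

Before the new passage is added, the best available evidence is the unrelated sentence about France. Afterwards the retriever surfaces the Canberra passage, and neither function has changed. In the actual architecture the retriever and generator are trained end to end, which this sketch does not attempt.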
