Difference between revisions of "Neural Architecture"




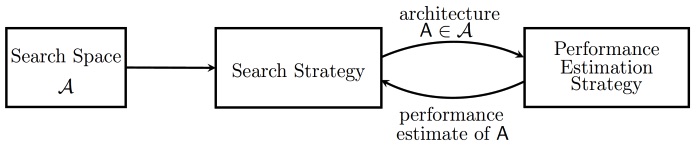


Latest revision as of 20:07, 8 September 2023

YouTube search... ...Google search

- Artificial Intelligence (AI) ... Machine Learning (ML) ... Deep Learning ... Neural Network ... Reinforcement ... Learning Techniques

- Hierarchical Temporal Memory (HTM)

- Codeless Options, Code Generators, Drag n' Drop

- Artificial General Intelligence (AGI) to Singularity ... Curious Reasoning ... Emergence ... Moonshots ... Explainable AI ... Automated Learning

- Auto Keras

- Symbiotic Intelligence ... Bio-inspired Computing ... Neuroscience ... Connecting Brains ... Nanobots ... Molecular ... Neuromorphic ... Evolutionary/Genetic

- Hyperparameters Optimization

- Model Search

- Google AutoML

- MIT’s AI can train neural networks faster than ever before | Christine Fisher - Engadget

Neural Architecture Search (NAS)


- Literature on Neural Architecture Search | AutoML.org

- Awesome NAS; a curated list

- Neural Architecture Search (NAS) with Reinforcement Learning | Wikipedia

- Neural Architecture Search (NAS) with Evolution | Wikipedia

- Multi-objective Neural architecture search | Wikipedia

- Neural Architecture Search for Deep Face Recognition | Ning Zhu

An alternative to manual design is “neural architecture search” (NAS), a series of machine learning techniques that can help discover optimal neural networks for a given problem. Neural architecture search is a big area of research and holds a lot of promise for future applications of deep learning.

- Need to find the best AI model for your problem? Try neural architecture search | Ben Dickson - TDW

NAS algorithms are efficient problem solvers ... What is neural architecture search (NAS)? | Ben Dickson - TechTalks

Various approaches to Neural Architecture Search (NAS) have designed networks that are on par with or even outperform hand-designed architectures. Methods for NAS can be categorized according to the search space, search strategy and performance estimation strategy used:

- The search space defines which types of artificial neural networks (ANNs) can be designed and optimized in principle.

- The search strategy defines which strategy is used to find optimal ANNs within the search space.

- Obtaining the performance of an ANN is costly because it requires training the ANN first. Therefore, performance estimation strategies are used to obtain less costly estimates of a model's performance. Neural Architecture Search | Wikipedia
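The three components above can be illustrated with a toy random-search loop. This is only a minimal sketch under stated assumptions: the `SEARCH_SPACE` dictionary, the function names, and especially the scoring formula in `estimate_performance` are hypothetical and invented for illustration. They do not come from any NAS library or from the sources cited here, and a real system would replace the scoring function with some form of (partial) training and validation.

```python
import random

# Search space: which architectures can be expressed at all.
# These dimensions and values are hypothetical, chosen only for illustration.
SEARCH_SPACE = {
    "num_layers": [1, 2, 3, 4],
    "units": [16, 32, 64, 128],
    "activation": ["relu", "tanh"],
}


def sample_architecture(rng):
    """Search strategy (plain random search): pick one option per dimension."""
    return {name: rng.choice(options) for name, options in SEARCH_SPACE.items()}


def estimate_performance(arch):
    """Performance estimation: a cheap stand-in score instead of full training.

    The formula is made up for this sketch. It rewards a total capacity
    near 128 units and gives a small bonus to ReLU.
    """
    capacity = arch["num_layers"] * arch["units"]
    bonus = 0.05 if arch["activation"] == "relu" else 0.0
    return 1.0 - abs(capacity - 128) / 512 + bonus


def random_search(num_trials, seed=0):
    """Sample `num_trials` architectures and keep the best-scoring one."""
    rng = random.Random(seed)
    best_arch, best_score = None, float("-inf")
    for _ in range(num_trials):
        arch = sample_architecture(rng)
        score = estimate_performance(arch)
        if score > best_score:
            best_arch, best_score = arch, score
    return best_arch, best_score
```

Calling `random_search(50)` returns the best architecture dictionary found and its score. Replacing `sample_architecture` with a reinforcement-learning controller or an evolutionary mutation step changes only the search strategy, while the search space and the performance estimator stay the same.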

Differentiable Neural Computer (DNC)

Neural Operator


- Geology: Mining, Oil & Gas

- Neural Operator: Learning Maps Between Function Spaces | N. Kovachki, Z. Li, B. Liu, K. Azizzadenesheli, K. Bhattacharya, A. Stuart, Animashree (Anima) Anandkumar

- Neural Operator – Solving PDEs; Partial Differential Equations | Animashree (Anima) Anandkumar, Andrew Stuart, & Kaushik Bhattacharya

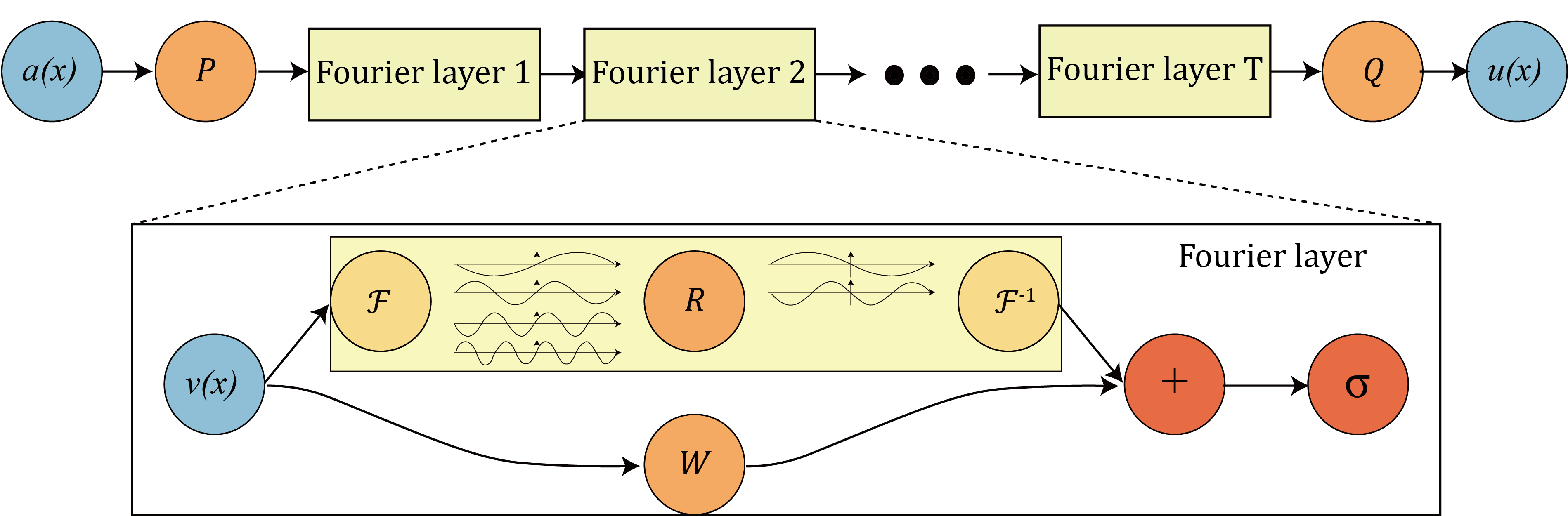

Neural operators generalize neural networks to learn operators that map between infinite-dimensional function spaces, and they are universal approximators in function space. The Fourier neural operator model has shown state-of-the-art performance with a 1000x speedup in learning the turbulent Navier-Stokes equations, as well as promising applications in weather forecasting and CO2 migration, as shown in the figure above. Neural Operator Machine learning for scientific computing | Zongyi Li
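The core step usually associated with a Fourier neural operator layer is a multiplication in Fourier space that keeps only the lowest-frequency modes. The following is a rough sketch of that one step for a real 1-D signal, written with a naive discrete Fourier transform from the standard library so it stays self-contained. The function name `spectral_conv_1d` and its parameters are hypothetical, the `weights` would be learned in a real model, and the pointwise linear term and nonlinearity of a full layer are omitted. It is not the authors' implementation.

```python
import cmath
import math


def spectral_conv_1d(signal, weights):
    """Multiply the lowest Fourier modes of a real 1-D signal by `weights`.

    signal  : list of floats sampled on a uniform periodic grid
    weights : list of complex multipliers, one per retained mode
              (mode 0 first); all higher modes are discarded
    """
    n = len(signal)
    modes = len(weights)
    if modes - 1 >= n / 2:
        raise ValueError("keep fewer modes than half the grid size")

    # Forward DFT, evaluated only for the retained low frequencies.
    coeffs = []
    for k in range(modes):
        c = sum(signal[j] * cmath.exp(-2j * math.pi * k * j / n) for j in range(n))
        coeffs.append(c * weights[k])

    # Inverse transform for a real signal: negative frequencies are the
    # complex conjugates of the positive ones, hence the factor of 2.
    out = []
    for j in range(n):
        value = coeffs[0].real
        for k in range(1, modes):
            value += 2.0 * (coeffs[k] * cmath.exp(2j * math.pi * k * j / n)).real
        out.append(value / n)
    return out
```

With all weights set to 1 the function acts as a low-pass filter: a component at frequency 1 passes through unchanged while a component at frequency 6 is removed when only four modes are kept. Because the weights are indexed by frequency rather than by grid point, the same weights can be applied to signals sampled at different resolutions.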