Difference between revisions of "History of Artificial Intelligence (AI)"

Revision as of 17:10, 7 July 2023


- History of Artificial Intelligence (AI) ... Creatives

- Artificial Intelligence (AI) ... Machine Learning (ML) ... Deep Learning ... Neural Network ... Reinforcement ... Learning Techniques

- Neural Network History

- Gaming ... Game-Based Learning (GBL) ... Security ... Generative AI ... Metaverse ... Quantum ... Game Theory

- Turing Test ... test of a machine's ability to exhibit intelligent behavior

- History of Artificial Intelligence ... Timeline ... Timeline of machine learning | Wikipedia

- Symbiotic Intelligence ... Bio-inspired Computing ... Neuroscience ... Connecting Brains ... Nanobots ... Molecular ... Neuromorphic ... Animal Language

- Using AI to reveal historical mysteries

- A (Very) Brief History of Artificial Intelligence | Bruce G. Buchanan

- How China tried and failed to win the AI race: The inside story | Alison Rayome

- A Comprehensive Survey on Pretrained Foundation Models: A History from BERT to ChatGPT | C. Zhou, Q. Li, C. Li, J. Yu, Y. Liu, G. Wang, K. Zhang, C. Ji, Q. Yan, L. He, H. Peng, J. Li, J. Wu, Z. Liu, P. Xie, C. Xiong, J Pei, P. Yu, L. Sun - arXiv - Cornell University

- Generative AI ... Conversational AI ... OpenAI's ChatGPT ... Perplexity ... Microsoft's Bing ... You ... Google's Bard ... Baidu's Ernie

Never give up on a dream just because it will take time to accomplish it. The time will pass anyway.

In AI, there are four generations.

- First Generation AI - is the Good Old-fashioned AI, meaning that you handcraft everything and you learn nothing. These were simple programs that could only do one task really well. They were like little robots that were programmed to do a specific thing, like adding numbers or sorting data.

- Second Generation AI - is shallow learning — you handcraft the features and learn a classifier. This was when people started teaching computers how to learn by giving them lots of data and letting them figure out patterns on their own. These programs were called "machine learning" programs, and they could do things like recognize images or translate languages.

- Third Generation AI - is deep learning. Basically you handcraft the algorithm, but you learn the features and you learn the predictions, end to end. This is when computers started to get really good at things that only humans used to be able to do, like understanding language and making decisions based on what they know. These programs are called "neural networks" because they're loosely modeled after the way our brains work.

- Fourth Generation AI - is “learning-to-learn,” the most advanced kind of AI we have so far. These programs aim to learn from experience and get better at new tasks over time, just like we do. This direction is often associated with "artificial general intelligence," the goal of building systems that approach human ability at thinking and learning, although current systems do not truly understand things like emotions and creativity.


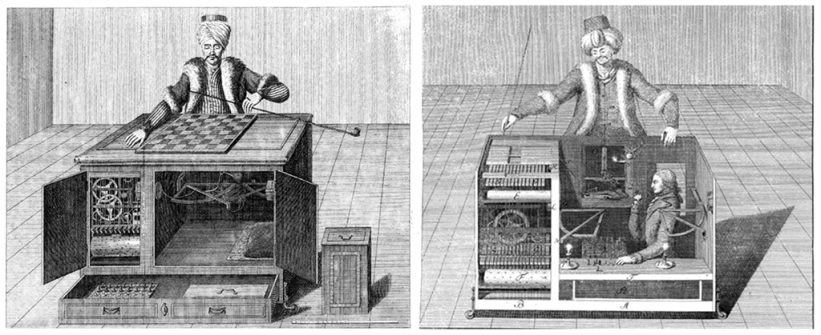

The Turk


Modern Timeline - to July 2023

Here is a timeline of the history of Artificial Intelligence (AI):

- 1943: Warren McCulloch and Walter Pitts propose a computational model for neural networks, which lays the foundation for artificial neural networks.

- 1950: Alan Turing introduces the "Turing Test," a criterion to determine a machine's ability to exhibit intelligent behavior, marking an important milestone in AI.

- 1956: John McCarthy, Marvin Minsky, Nathaniel Rochester, and Claude Shannon organize the Dartmouth Conference, widely considered the birth of AI as a field of study. McCarthy coined the term “artificial intelligence” in the 1955 proposal for this conference, the first academic meeting on the subject, held at Dartmouth College.

- 1956-1974: This period is known as the "Golden Age" of AI research. Researchers develop early AI programs, including the Logic Theorist, General Problem Solver, and ELIZA.

- 1958: John McCarthy invents the programming language LISP, which becomes a popular language for AI research and development.

- 1964: Ray Solomonoff publishes his theory of algorithmic probability, a fundamental concept in machine learning and AI.

- 1964-1966: Joseph Weizenbaum creates ELIZA, a computer program that simulates conversation; it becomes one of the first examples of natural language processing.

- 1969: The Stanford Research Institute develops Shakey, a mobile robot capable of reasoning and problem-solving, considered a significant advancement in robotics and AI.

- 1973: The Lighthill Report is published, criticizing the progress in AI research and leading to a decrease in funding, marking the beginning of the first AI winter.

- 1980s-1990s: Expert systems gain prominence, focusing on capturing and replicating human expertise in narrow domains. Symbolic AI approaches become popular during this time.

- 1986: Geoffrey Hinton, David Rumelhart, and Ronald Williams publish a paper on backpropagation, which greatly advances the training of artificial neural networks.

- 1997: IBM's Deep Blue defeats Garry Kasparov, the world chess champion, marking a significant milestone in AI and demonstrating the potential of machine intelligence.

- 2000s: The focus shifts from symbolic AI to statistical approaches, and machine learning algorithms, particularly neural networks, gain popularity.

- 2011: IBM's Watson defeats human champions in the quiz show Jeopardy!, demonstrating advancements in natural language processing and question-answering systems.

- 2012: AlexNet, a deep convolutional neural network, achieves a breakthrough in image classification, leading to a surge in deep learning research and applications.

- 2011-2014: Apple's Siri (2011), Google's Google Now (2012) and Microsoft's Cortana (2014) are smartphone apps that use natural language to answer questions, make recommendations and perform actions.

- 2014: The attention mechanism is developed, which later leads to the Transformer architecture.

- 2015: Google releases TensorFlow, an open-source software library for machine learning.

- 2016: DeepMind's AlphaGo defeats the world champion Go player, Lee Sedol, showcasing the power of deep reinforcement learning in complex games.

- 2017: The term "Deepfake" emerges, referring to the use of AI to create realistic but fake audio and video content, raising concerns about misinformation and privacy.

- 2018: OpenAI introduces GPT (Generative Pre-trained Transformer), a language model that generates human-like text and significantly advances natural language processing.

- 2018: The General Data Protection Regulation (GDPR) takes effect in the European Union, regulating the processing of personal data, including automated decision-making, with direct implications for data privacy in AI systems.

- 2019 March: Brain-computer interface: A team of researchers at the University of California, San Francisco (UCSF) reportedly implanted a brain-computer interface (BCI) in a human patient. The BCI allowed the patient to control a cursor on a computer screen simply by thinking about it. This was not the first human BCI; implanted systems such as BrainGate had been tested in people since the mid-2000s.

- 2019: OpenAI released GPT-2, a large-scale language model that can generate coherent and diverse text on a variety of topics.

- 2020 February: Microsoft's Turing-NLG (Natural Language Generation) model generated realistic and fluent texts on various domains, such as news, reviews, and fiction. The model used a massive neural network with 17 billion parameters to produce coherent and diverse texts from keywords or prompts. The model could also answer questions, summarize texts, and rewrite sentences.

- 2020: GPT-3, an even more advanced version of the language model, is released, demonstrating unprecedented capabilities in generating coherent and contextually relevant text.

- 2020: Baidu released the LinearFold AI algorithm to help medical and scientific teams developing a vaccine during the early stages of the SARS-CoV-2 (COVID-19) pandemic.

- 2020 November: DeepMind's AlphaFold 2 achieved a breakthrough in protein structure prediction, outperforming all other entrants in the CASP14 competition. The model used deep learning to predict the three-dimensional shape of proteins from their amino acid sequences, with unprecedented accuracy and speed. This could have huge implications for drug discovery, biotechnology, and understanding diseases.

- 2021 January: OpenAI released DALL-E, a generative model that can create images from text descriptions, such as "an armchair in the shape of an avocado".

- 2021 May: Google's LaMDA (Language Model for Dialogue Applications) demonstrated natural and engaging conversations on various topics, such as Pluto and paper airplanes. The model used a neural network to generate responses that were relevant, specific, and informative, as well as sometimes humorous and surprising. The model could also switch topics seamlessly.

- 2022 April: OpenAI released DALL-E 2, a successor to DALL-E that creates more realistic, higher-resolution images from text descriptions. The model demonstrated remarkable creativity and diversity in its outputs, as well as the ability to edit existing images and generate variations of them.

- 2022 September: HyperTree Proof Search (HTPS) was introduced as a new algorithm for automated theorem proving, a challenging task in mathematics and logic. The algorithm used a novel data structure called HyperTree to efficiently search for proofs of mathematical statements, outperforming existing methods on several benchmarks. The algorithm could potentially help discover new mathematical truths and verify complex systems.

- 2023 January: Plato: A team of researchers at Google AI developed a new type of AI that can learn to perform tasks by watching humans do them. This AI, called "Plato," is able to learn to perform tasks that are much more complex than previous AIs. For example, Plato was able to learn to play the game of Go after watching just a few hours of human gameplay.

- 2023 February: AlphaFold: DeepMind's AlphaFold, an AI system that predicts protein structures from amino acid sequences, continued to be applied to biomedical research. Its predicted structures for several SARS-CoV-2 proteins could help scientists to develop new treatments for COVID-19.

- 2023 March: DALL-E 2: OpenAI's DALL-E 2 generates realistic and creative images from text descriptions. It can also edit existing images and produce variations of them.

- 2023 March: Bard: Google opened early access to Bard, its conversational AI chatbot, which was initially built on the LaMDA language model.

- 2023 May: Enlitic: Enlitic, a company that applies deep learning to medical imaging, develops AI that can help detect and classify diseases, such as cancer, from medical images.

- 2023 June: Isaac: Nvidia continued to develop Isaac, its robotics platform for simulating, training, and controlling robots, with the aim of letting robot arms perform complex tasks in real time.