Difference between revisions of "Bidirectional Encoder Representations from Transformers (BERT)"

m |

m |

||

| Line 10: | Line 10: | ||

* [[Large Language Model (LLM)]] ... [[Natural Language Processing (NLP)]] ...[[Natural Language Generation (NLG)|Generation]] ... [[Natural Language Classification (NLC)|Classification]] ... [[Natural Language Processing (NLP)#Natural Language Understanding (NLU)|Understanding]] ... [[Language Translation|Translation]] ... [[Natural Language Tools & Services|Tools & Services]] | * [[Large Language Model (LLM)]] ... [[Natural Language Processing (NLP)]] ...[[Natural Language Generation (NLG)|Generation]] ... [[Natural Language Classification (NLC)|Classification]] ... [[Natural Language Processing (NLP)#Natural Language Understanding (NLU)|Understanding]] ... [[Language Translation|Translation]] ... [[Natural Language Tools & Services|Tools & Services]] | ||

* [[Assistants]] ... [[Agents]] ... [[Negotiation]] ... [[Hugging_Face#HuggingGPT|HuggingGPT]] ... [[LangChain]] | * [[Assistants]] ... [[Agents]] ... [[Negotiation]] ... [[Hugging_Face#HuggingGPT|HuggingGPT]] ... [[LangChain]] | ||

| − | * [[Attention]] Mechanism ...[[Transformer]] | + | * [[Attention]] Mechanism ...[[Transformer]] ...[[Generative Pre-trained Transformer (GPT)]] ... [[Generative Adversarial Network (GAN)|GAN]] ... [[Bidirectional Encoder Representations from Transformers (BERT)|BERT]] |

* [[SMART - Multi-Task Deep Neural Networks (MT-DNN)]] | * [[SMART - Multi-Task Deep Neural Networks (MT-DNN)]] | ||

* [[Deep Distributed Q Network Partial Observability]] | * [[Deep Distributed Q Network Partial Observability]] | ||

Revision as of 13:36, 3 May 2023

Youtube search... ...Google search

- Large Language Model (LLM) ... Natural Language Processing (NLP) ...Generation ... Classification ... Understanding ... Translation ... Tools & Services

- Assistants ... Agents ... Negotiation ... HuggingGPT ... LangChain

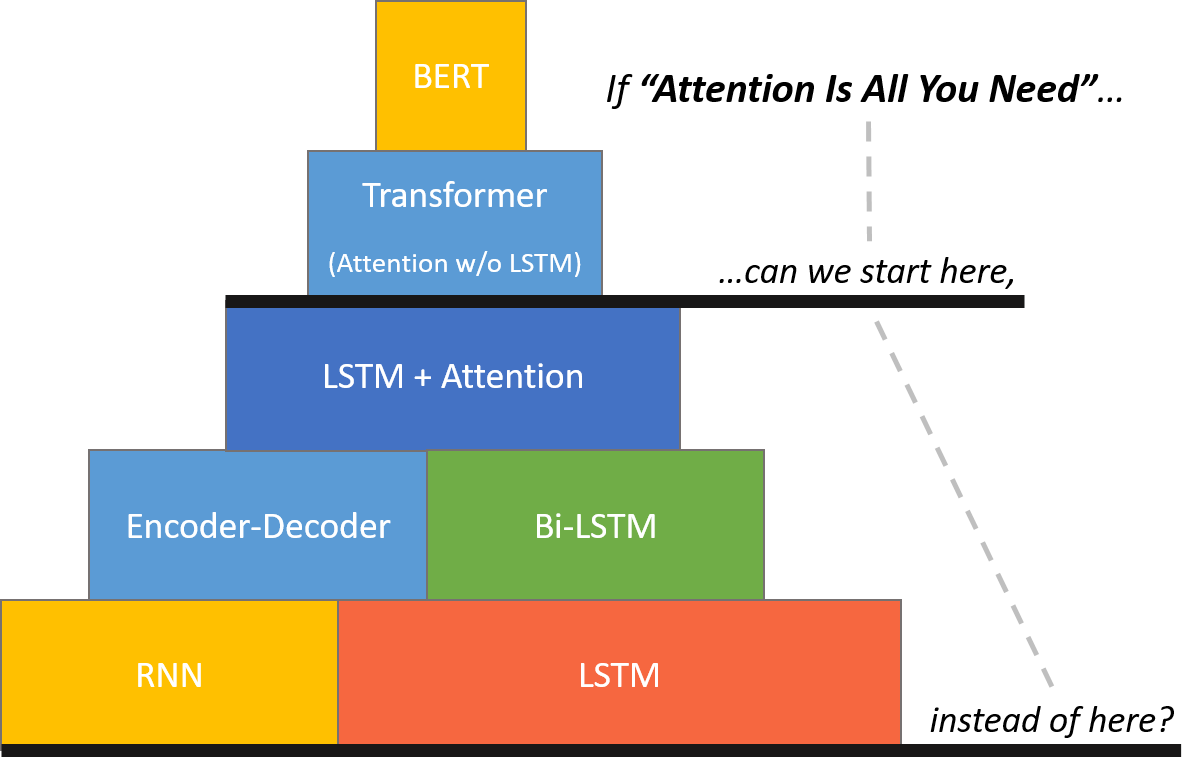

- Attention Mechanism ...Transformer ...Generative Pre-trained Transformer (GPT) ... GAN ... BERT

- SMART - Multi-Task Deep Neural Networks (MT-DNN)

- Deep Distributed Q Network Partial Observability

- TaBERT

- Google is improving 10 percent of searches by understanding language context - Say hello to BERT | Dieter Bohn - The Verge ...the old Google search algorithm treated that sentence as a “Bag-of-Words (BoW)”

- Google AI’s ALBERT claims top spot in multiple NLP performance benchmarks | Khari Johnson - VentureBeat

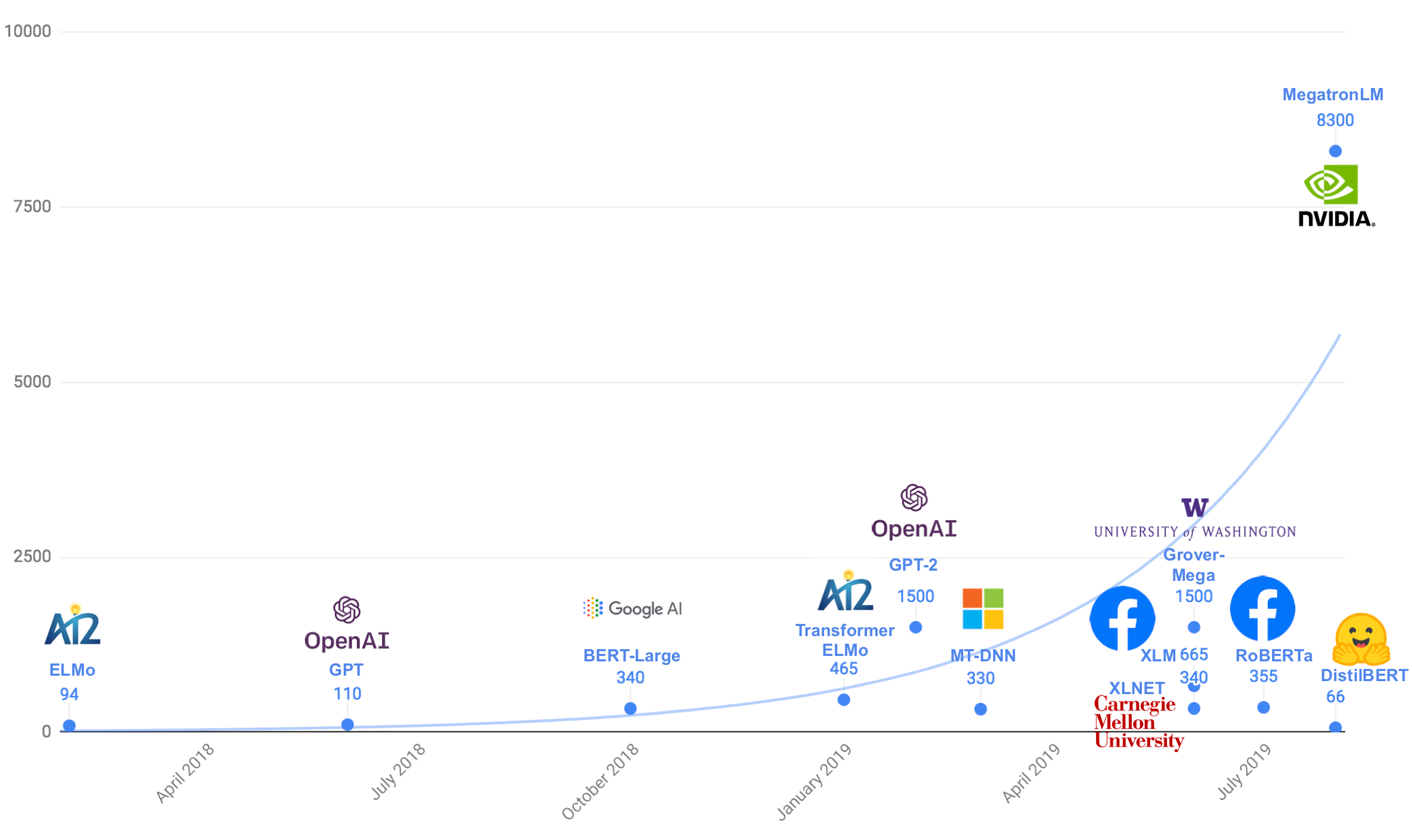

- RoBERTa:

- RoBERTa: A Robustly Optimized BERT Pretraining Approach | Y. Li, M. Ott, N. Goyal, J. Du, M. Joshi, D. Chen, O. Levy, M. Lewis, L. Zettlemoyer, and V. Stoyanov

- RoBERTa: A Robustly Optimized BERT Pretraining Approach | GitHub - iterates on BERT's pretraining procedure, including training the model longer, with bigger batches over more data; removing the next sentence prediction objective; training on longer sequences; and dynamically changing the masking pattern applied to the training data.

- Facebook AI’s RoBERTa improves Google’s BERT pretraining methods | Khari Johnson - VentureBeat

- Google's BERT - built on ideas from ULMFiT, ELMo, and OpenAI

- Attention Mechanism/Transformer Model

- Generative AI ... Conversational AI ... OpenAI's ChatGPT ... Perplexity ... Microsoft's Bing ... You ...Google's Bard ... Baidu's Ernie

- Transformer-XL

- Microsoft makes Google’s BERT NLP model better | Khari Johnson - VentureBeat

- Watch me Build a Finance Startup | Siraj Raval

- Smaller, faster, cheaper, lighter: Introducing DistilBERT, a distilled version of BERT | Victor Sanh - Medium

- TinyBERT: Distilling BERT for Natural Language Understanding | X. Jiao, Y. Yin, L. Shang, X. Jiang, X. Chen, L. Li, F. Wang, and Q. Liu researchers at Huawei produces a model called TinyBERT that is 7.5 times smaller and nearly 10 times faster than the original. It also reaches nearly the same language understanding performance as the original.

- Understanding BERT: Is it a Game Changer in NLP? | Bharat S Raj - Towards Data Science

- Allen Institute for Artificial Intelligence, or AI2’s Aristo AI system finally passes an eighth-grade science test | Alan Boyle - GeekWire

- 7 Leading Language Models for NLP in 2020 | Mariya Yao - TOPBOTS

- BERT Inner Workings | George Mihaila - TOPBOTS

BERT Research | Chris McCormick

- BERT Research | Chris McCormick

- ChrisMcCormickAI online education