Difference between revisions of "XLNet"

| Line 19: | Line 19: | ||

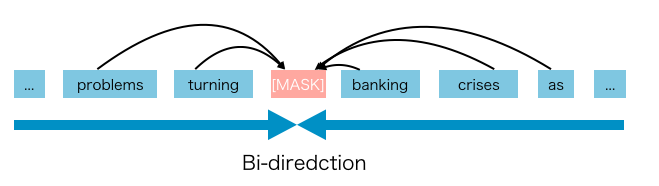

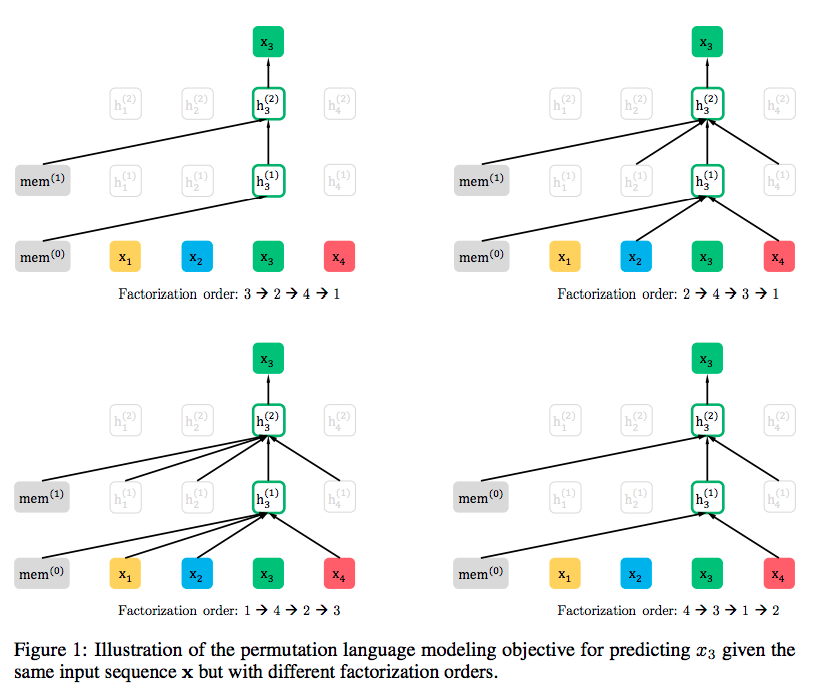

With the capability of modeling bidirectional contexts, denoising autoencoding based pretraining like [[Bidirectional Encoder Representations from Transformers (BERT)]] achieves better performance than pretraining approaches based on autoregressive language modeling. However, relying on corrupting the input with masks, [[Bidirectional Encoder Representations from Transformers (BERT)]] neglects dependency between the masked positions and suffers from a pretrain-finetune discrepancy. In light of these pros and cons, we propose XLNet, a generalized autoregressive pretraining method that (1) enables learning bidirectional contexts by maximizing the expected likelihood over all permutations of the factorization order and (2) overcomes the limitations of [[Bidirectional Encoder Representations from Transformers (BERT)]] thanks to its autoregressive formulation. Furthermore, XLNet integrates ideas from [[Transformer-XL]], the state-of-the-art autoregressive model, into pretraining. Empirically, XLNet outperforms [[Bidirectional Encoder Representations from Transformers (BERT)]] on 20 tasks, often by a large margin, and achieves state-of-the-art results on 18 tasks including question answering, natural language inference, sentiment analysis, and document ranking. [http://arxiv.org/abs/1906.08237 : Generalized Autoregressive Pretraining for Language Understanding | Z. Yang, Z. Dai, Y Yang, J. Carbonell, R. Salakhutdinov, and Q Le] | With the capability of modeling bidirectional contexts, denoising autoencoding based pretraining like [[Bidirectional Encoder Representations from Transformers (BERT)]] achieves better performance than pretraining approaches based on autoregressive language modeling. However, relying on corrupting the input with masks, [[Bidirectional Encoder Representations from Transformers (BERT)]] neglects dependency between the masked positions and suffers from a pretrain-finetune discrepancy. In light of these pros and cons, we propose XLNet, a generalized autoregressive pretraining method that (1) enables learning bidirectional contexts by maximizing the expected likelihood over all permutations of the factorization order and (2) overcomes the limitations of [[Bidirectional Encoder Representations from Transformers (BERT)]] thanks to its autoregressive formulation. Furthermore, XLNet integrates ideas from [[Transformer-XL]], the state-of-the-art autoregressive model, into pretraining. Empirically, XLNet outperforms [[Bidirectional Encoder Representations from Transformers (BERT)]] on 20 tasks, often by a large margin, and achieves state-of-the-art results on 18 tasks including question answering, natural language inference, sentiment analysis, and document ranking. [http://arxiv.org/abs/1906.08237 : Generalized Autoregressive Pretraining for Language Understanding | Z. Yang, Z. Dai, Y Yang, J. Carbonell, R. Salakhutdinov, and Q Le] | ||

| + | |||

| + | http://cdn-images-1.medium.com/max/1600/1*bmSZYhV6XlzRFcStRs1iDw.png | ||

http://cdn-images-1.medium.com/max/1600/1*RGdAU7tXXKqckbDjhoIWmQ.png | http://cdn-images-1.medium.com/max/1600/1*RGdAU7tXXKqckbDjhoIWmQ.png | ||

Revision as of 05:37, 10 July 2019

Youtube search... | ...Google search

- What is XLNet and why It outperforms BERT | BrambleXu - Towards Data Science

- Paper Dissected: “XLNet: Generalized Autoregressive Pretraining for Language Understanding” Explained | Machine Learning Explained

- Natural Language Processing (NLP)

- XLNet combines the best of:

- Autoregressive; such as Bidirectional Long Short-Term Memory (BI-LSTM), but XLNet uses Self-Attention instead of Long Short-Term Memory (LSTM) and 'permutations' on both sides of the bidirectional scan

- Autoencoder (AE) / Encoder-Decoder such as autoencoding denoiser of Bidirectional Encoder Representations from Transformers (BERT) by integrating methods from Transformer-XL

XLNet is a new unsupervised language representation learning method based on a novel generalized permutation language modeling objective. Additionally, XLNet employs Transformer-XL as the backbone model, exhibiting excellent performance for language tasks involving long context. Overall, XLNet achieves state-of-the-art (SOTA) results on various downstream language tasks including question answering, natural language inference, sentiment analysis, and document ranking. XLNet | zihangdai - GitHub

With the capability of modeling bidirectional contexts, denoising autoencoding based pretraining like Bidirectional Encoder Representations from Transformers (BERT) achieves better performance than pretraining approaches based on autoregressive language modeling. However, relying on corrupting the input with masks, Bidirectional Encoder Representations from Transformers (BERT) neglects dependency between the masked positions and suffers from a pretrain-finetune discrepancy. In light of these pros and cons, we propose XLNet, a generalized autoregressive pretraining method that (1) enables learning bidirectional contexts by maximizing the expected likelihood over all permutations of the factorization order and (2) overcomes the limitations of Bidirectional Encoder Representations from Transformers (BERT) thanks to its autoregressive formulation. Furthermore, XLNet integrates ideas from Transformer-XL, the state-of-the-art autoregressive model, into pretraining. Empirically, XLNet outperforms Bidirectional Encoder Representations from Transformers (BERT) on 20 tasks, often by a large margin, and achieves state-of-the-art results on 18 tasks including question answering, natural language inference, sentiment analysis, and document ranking. : Generalized Autoregressive Pretraining for Language Understanding | Z. Yang, Z. Dai, Y Yang, J. Carbonell, R. Salakhutdinov, and Q Le