Difference between revisions of "Evaluation - Measures"

Revision as of 12:17, 22 September 2018

Error Metric

Predictive modeling works on a constructive feedback principle: you build a model, get feedback from metrics, make improvements, and continue until you achieve a desirable accuracy. Evaluation metrics explain the performance of a model. An important aspect of evaluation metrics is their capability to discriminate among model results. 7 Important Model Evaluation Error Metrics Everyone should know | Tavish Srivastava

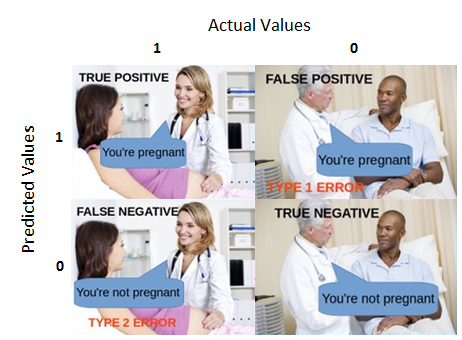

Confusion Matrix

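A confusion matrix is a performance measurement for machine learning classification. For a binary problem it tabulates predicted against actual classes, giving four counts: true positives (TP), false positives (FP), false negatives (FN) and true negatives (TN). Understanding Confusion Matrix | Sarang Narkhede - Medium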

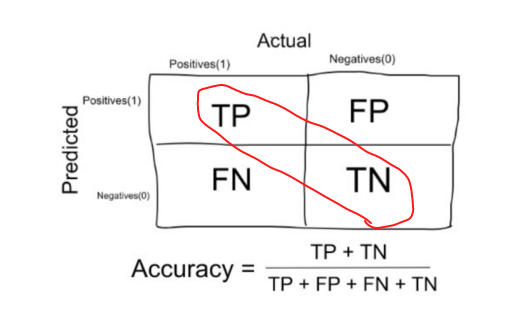
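The four cells of a binary confusion matrix can be counted directly from paired labels. The following is a minimal sketch, assuming labels are encoded as 1 (positive) and 0 (negative); the function name `confusion_counts` and the sample data are hypothetical and not taken from the cited article.

```python
def confusion_counts(y_true, y_pred):
    """Return (TP, FP, FN, TN) for binary labels where 1 means positive."""
    tp = fp = fn = tn = 0
    for actual, predicted in zip(y_true, y_pred):
        if predicted == 1 and actual == 1:
            tp += 1  # predicted positive, actually positive
        elif predicted == 1 and actual == 0:
            fp += 1  # predicted positive, actually negative
        elif predicted == 0 and actual == 1:
            fn += 1  # predicted negative, actually positive
        else:
            tn += 1  # predicted negative, actually negative
    return tp, fp, fn, tn


# Hypothetical labels for eight cases.
y_true = [1, 1, 1, 0, 0, 0, 0, 1]
y_pred = [1, 0, 1, 0, 1, 0, 0, 1]

tp, fp, fn, tn = confusion_counts(y_true, y_pred)
print(f"TP={tp} FP={fp} FN={fn} TN={tn}")
```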

Accuracy

The number of correct predictions made by the model divided by the total number of predictions made: Accuracy = (TP + TN) / (TP + TN + FP + FN).
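As a small illustration, accuracy can be computed from the four confusion matrix counts. This is a sketch only; the function name `accuracy` and the example counts are assumptions made for illustration.

```python
def accuracy(tp, fp, fn, tn):
    """Fraction of all predictions that were correct."""
    total = tp + fp + fn + tn
    if total == 0:
        raise ValueError("accuracy is undefined when there are no predictions")
    return (tp + tn) / total


# Hypothetical counts: 3 TP, 1 FP, 1 FN, 3 TN, so 6 of 8 predictions are correct.
print(accuracy(tp=3, fp=1, fn=1, tn=3))
```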

Precision & Recall

Precision (also called positive predictive value) is the fraction of relevant instances among the retrieved instances, while recall (also known as sensitivity) is the fraction of all relevant instances that were retrieved. Both precision and recall are therefore based on an understanding and measure of relevance. Precision and recall | Wikipedia

- Precision: the proportion of patients we diagnosed as having cancer who actually had cancer. The predicted positives (people predicted as cancerous) are TP and FP, and those among them who actually have cancer are TP, so Precision = TP / (TP + FP).

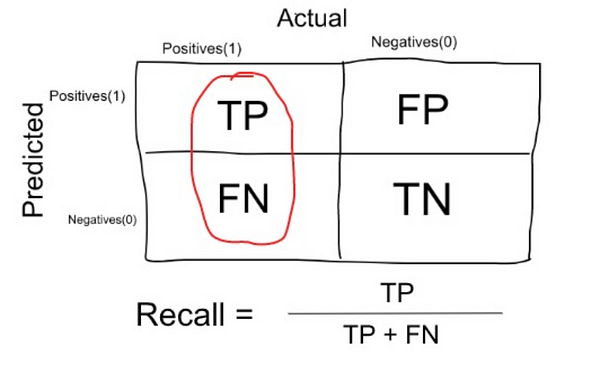

- Recall or Sensitivity: the proportion of patients who actually had cancer that the algorithm diagnosed as having cancer. The actual positives (people having cancer) are TP and FN, and those among them diagnosed by the model as having cancer are TP, so Recall = TP / (TP + FN). (Note: FN is included because the person actually had cancer even though the model predicted otherwise.)
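The two definitions above can be sketched in a few lines. The function names and the patient counts are hypothetical, and returning 0.0 when a denominator is zero is a convention chosen for this sketch, not something stated in the sources.

```python
def precision(tp, fp):
    """TP / (TP + FP): of the cases flagged as cancer, the share that truly were."""
    predicted_positive = tp + fp
    # Assumed convention: report 0.0 when nothing was flagged as positive.
    return tp / predicted_positive if predicted_positive else 0.0


def recall(tp, fn):
    """TP / (TP + FN): of the true cancer cases, the share the model caught."""
    actual_positive = tp + fn
    # Assumed convention: report 0.0 when there are no actual positives.
    return tp / actual_positive if actual_positive else 0.0


# Hypothetical screening result: 30 correct cancer diagnoses,
# 10 false alarms, 20 missed cancer cases.
print(precision(tp=30, fp=10))  # 30 / 40
print(recall(tp=30, fn=20))     # 30 / 50
```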

When to use Precision and When to use Recall?

Recall tells us about a classifier’s performance with respect to false negatives (how many actual positives did we miss), while precision tells us about its performance with respect to false positives (how many of the cases we flagged were wrong).

- Precision is about being precise. Even if we flag only one case as cancer, as long as that case really is cancer, we are 100% precise.

- Recall is not so much about capturing cases correctly as about capturing all cases that have “cancer” with the answer “cancer”. So if we simply label every case as “cancer”, we have 100% recall.
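The two extreme cases in the list above can be checked numerically. This is a minimal sketch on made-up data: ten hypothetical patients, three of whom have cancer, scored against a "cautious" classifier that flags a single true case and a classifier that flags everyone. All names here are illustrative assumptions.

```python
def precision_recall(y_true, y_pred):
    """Return (precision, recall) for binary labels where 1 means cancer."""
    tp = sum(1 for a, p in zip(y_true, y_pred) if a == 1 and p == 1)
    fp = sum(1 for a, p in zip(y_true, y_pred) if a == 0 and p == 1)
    fn = sum(1 for a, p in zip(y_true, y_pred) if a == 1 and p == 0)
    # Assumed convention: 0.0 when a denominator would be zero.
    prec = tp / (tp + fp) if (tp + fp) else 0.0
    rec = tp / (tp + fn) if (tp + fn) else 0.0
    return prec, rec


# Hypothetical ground truth: 3 of 10 patients have cancer.
y_true = [1, 1, 1, 0, 0, 0, 0, 0, 0, 0]

# Flags exactly one patient, and that patient does have cancer.
cautious = [1, 0, 0, 0, 0, 0, 0, 0, 0, 0]

# Labels every patient as "cancer".
flag_all = [1] * 10

print("cautious:", precision_recall(y_true, cautious))  # perfect precision, low recall
print("flag all:", precision_recall(y_true, flag_all))  # perfect recall, low precision
```

Neither extreme is a useful classifier on its own, which is why the two measures are usually read together.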

So if we want to focus more on:

- minimising False Negatives, we want Recall to be as close to 100% as possible without Precision becoming too low

- minimising False Positives, we want Precision to be as close to 100% as possible.
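One common way this trade-off shows up in practice is through a decision threshold. The sketch below assumes the model outputs a score between 0 and 1 and that a case is labelled positive when its score is at or above the threshold; the scores, labels, and the function name `precision_recall_at` are hypothetical.

```python
def precision_recall_at(threshold, scores, y_true):
    """Label score >= threshold as positive, then return (precision, recall)."""
    y_pred = [1 if s >= threshold else 0 for s in scores]
    tp = sum(1 for a, p in zip(y_true, y_pred) if a == 1 and p == 1)
    fp = sum(1 for a, p in zip(y_true, y_pred) if a == 0 and p == 1)
    fn = sum(1 for a, p in zip(y_true, y_pred) if a == 1 and p == 0)
    # Assumed convention: 0.0 when a denominator would be zero.
    prec = tp / (tp + fp) if (tp + fp) else 0.0
    rec = tp / (tp + fn) if (tp + fn) else 0.0
    return prec, rec


# Hypothetical model scores and true labels for eight patients.
scores = [0.95, 0.80, 0.70, 0.55, 0.40, 0.30, 0.20, 0.10]
y_true = [1, 1, 0, 1, 0, 1, 0, 0]

# A high threshold favours precision; a low threshold favours recall.
for threshold in (0.75, 0.50, 0.25):
    prec, rec = precision_recall_at(threshold, scores, y_true)
    print(f"threshold={threshold:.2f} precision={prec:.2f} recall={rec:.2f}")
```

On this toy data, lowering the threshold removes false negatives at the cost of more false positives, which matches the two goals listed above.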

F1 Score (F-Measure)

Receiver Operator Curves (ROC) and Area Under the Curve (AUC)

Example Use: Tradeoffs

'Sensitivity' & 'Specificity':

'True Positive Rate' & 'False Positive Rate':