Difference between revisions of "Manifold Hypothesis"

Revision as of 02:04, 11 July 2023

- Backpropagation ... FFNN ... Forward-Forward ... Activation Functions ... Softmax ... Loss ... Boosting ... Gradient Descent ... Hyperparameter ... Manifold Hypothesis ... PCA

- Dimensional Reduction

- Objective vs. Cost vs. Loss vs. Error Function

- Manifold Wikipedia

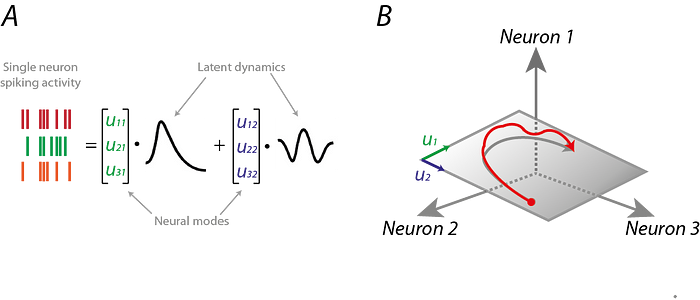

- Manifolds and Neural Activity: An Introduction | Kevin Luxem - Towards Data Science

The Manifold Hypothesis states that real-world high-dimensional data (images, neural activity) lie on low-dimensional manifolds embedded within the high-dimensional space. ... Manifolds are topological spaces that look locally like Euclidean spaces.
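The phrase "look locally like Euclidean spaces" can be stated a little more precisely. The following is the standard textbook formulation, not taken from the sources quoted here; the symbols $\mathcal{M}$, $d$, $D$, $U$ and $\varphi$ are notation introduced for this sketch only.

$$
\mathcal{M} \subset \mathbb{R}^{D}, \qquad \text{for every } x \in \mathcal{M} \text{ there is an open neighbourhood } U \ni x \text{ in } \mathcal{M} \text{ and a homeomorphism } \varphi : U \to \varphi(U) \subseteq \mathbb{R}^{d}, \qquad d \ll D .
$$

Here $D$ is the number of observed variables (for example, pixels in an image) and $d$ is the intrinsic dimension of the data. The hypothesis is the empirical claim that $d$ is much smaller than $D$ for real-world data.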

The Manifold Hypothesis explains (heuristically) why machine learning techniques are able to find useful features and produce accurate predictions from datasets that have a potentially large number of dimensions (variables). Because the data set of interest actually lives in a space of low dimension, a given machine learning model only needs to learn to focus on a few key features of the dataset to make decisions. However, these key features may turn out to be complicated functions of the original variables. Many of the algorithms behind machine learning techniques focus on ways to determine these (embedding) functions. What is the Manifold Hypothesis? | DeepAI
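The idea that data with many variables can live in a space of low dimension can be illustrated with a small synthetic experiment. The sketch below is a minimal illustration, not a method from the cited sources: it assumes the simplest possible case, a flat (linear) 2-dimensional manifold embedded in 50 dimensions with a little noise, and uses a singular value decomposition (the core of PCA) to show that almost all variance sits in two directions. The function names `make_embedded_data` and `explained_variance_ratio`, and all parameter values, are hypothetical choices for this example.

```python
import numpy as np


def make_embedded_data(n_samples=500, latent_dim=2, ambient_dim=50,
                       noise=0.01, seed=0):
    """Sample low-dimensional latent points and embed them in a
    high-dimensional space through a random linear map plus small noise.
    (Assumption: a linear embedding, the simplest kind of manifold.)"""
    rng = np.random.default_rng(seed)
    Z = rng.normal(size=(n_samples, latent_dim))      # hidden "key features"
    A = rng.normal(size=(latent_dim, ambient_dim))    # embedding map
    X = Z @ A + noise * rng.normal(size=(n_samples, ambient_dim))
    return X


def explained_variance_ratio(X):
    """Fraction of total variance along each principal direction,
    sorted from largest to smallest."""
    Xc = X - X.mean(axis=0)                           # center the data
    s = np.linalg.svd(Xc, compute_uv=False)           # singular values, descending
    var = s ** 2
    return var / var.sum()


X = make_embedded_data()
ratio = explained_variance_ratio(X)
print("observed variables:", X.shape[1])
print("variance captured by the first 2 directions:", ratio[:2].sum())
```

Although each sample has 50 observed variables, two directions account for nearly all of the variance, which is the linear analogue of the hypothesis. When the key features are complicated (nonlinear) functions of the original variables, a linear method like this would generally need more than the intrinsic number of directions, which is one motivation for nonlinear dimensional reduction techniques.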
