Difference between revisions of "Reinforcement Learning (RL) from Human Feedback (RLHF)"


| Line 14: | Line 14: | ||

<img src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/blog/rlhf/rlhf.png" width="1000"> | <img src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/blog/rlhf/rlhf.png" width="1000"> | ||

| + | |||

| + | {|<!-- T --> | ||

| + | | valign="top" | | ||

| + | {| class="wikitable" style="width: 550px;" | ||

| + | || | ||

| + | <youtube>2MBJOuVq380</youtube> | ||

| + | <b>Reinforcement Learning from Human Feedback: From Zero to [[ChatGPT]] | ||

| + | </b><br>In this talk, we will cover the basics of Reinforcement Learning from Human Feedback (RLHF) and how this technology is being used to enable state-of-the-art ML tools like [[ChatGPT]]. Most of the talk will be an overview of the interconnected ML models and cover the basics of Natural Language Processing and Reinforcement Learning (RL) that one needs to understand how Reinforcement Learning (RL) from Human Feedback (RLHF) is used on large language models. It will conclude with open questions in RLHF. | ||

| + | |||

| + | * [https://huggingface.co/blog/rlhf RLHF Blogpost] | ||

| + | * [https://hf.co/deep-rl-course The Deep RL Course] | ||

| + | * [https://docs.google.com/presentation/d/1eI9PqRJTCFOIVihkig1voRM4MHDpLpCicX9lX1J2fqk/edit#slide=id.g12c29d7e5c3_0_0 Slides from this talk] | ||

| + | * [https://twitter.com/natolambert Nathan Twitter] | ||

| + | * [https://twitter.com/thomassimonini Thomas Twitter] | ||

| + | |||

| + | Nathan Lambert is a Research Scientist at Hugging Face. He received his PhD from the University of California, Berkeley, working at the intersection of machine learning and robotics. He was advised by Professor Kristofer Pister in the Berkeley Autonomous Microsystems Lab and Roberto Calandra at Meta AI Research. He was lucky to intern at Facebook AI and DeepMind during his PhD. Nathan was awarded the UC Berkeley EECS Demetri Angelakos Memorial Achievement Award for Altruism for his efforts to better community norms. | ||

| + | |} | ||

| + | |<!-- M --> | ||

| + | | valign="top" | | ||

| + | {| class="wikitable" style="width: 550px;" | ||

| + | || | ||

| + | <youtube>Fw5ybNwwSbg</youtube> | ||

| + | <b>I challenged ChatGPT to code and hack (Are we doomed?) | ||

| + | </b><br>Are we doomed? Will AI like ChatGPT replace us? I put it to the test and challenged it to write C code, Python hacking scripts, | ||

| + | |} | ||

| + | |}<!-- B --> | ||

Revision as of 08:50, 29 January 2023


- Reinforcement Learning (RL)

- ChatGPT

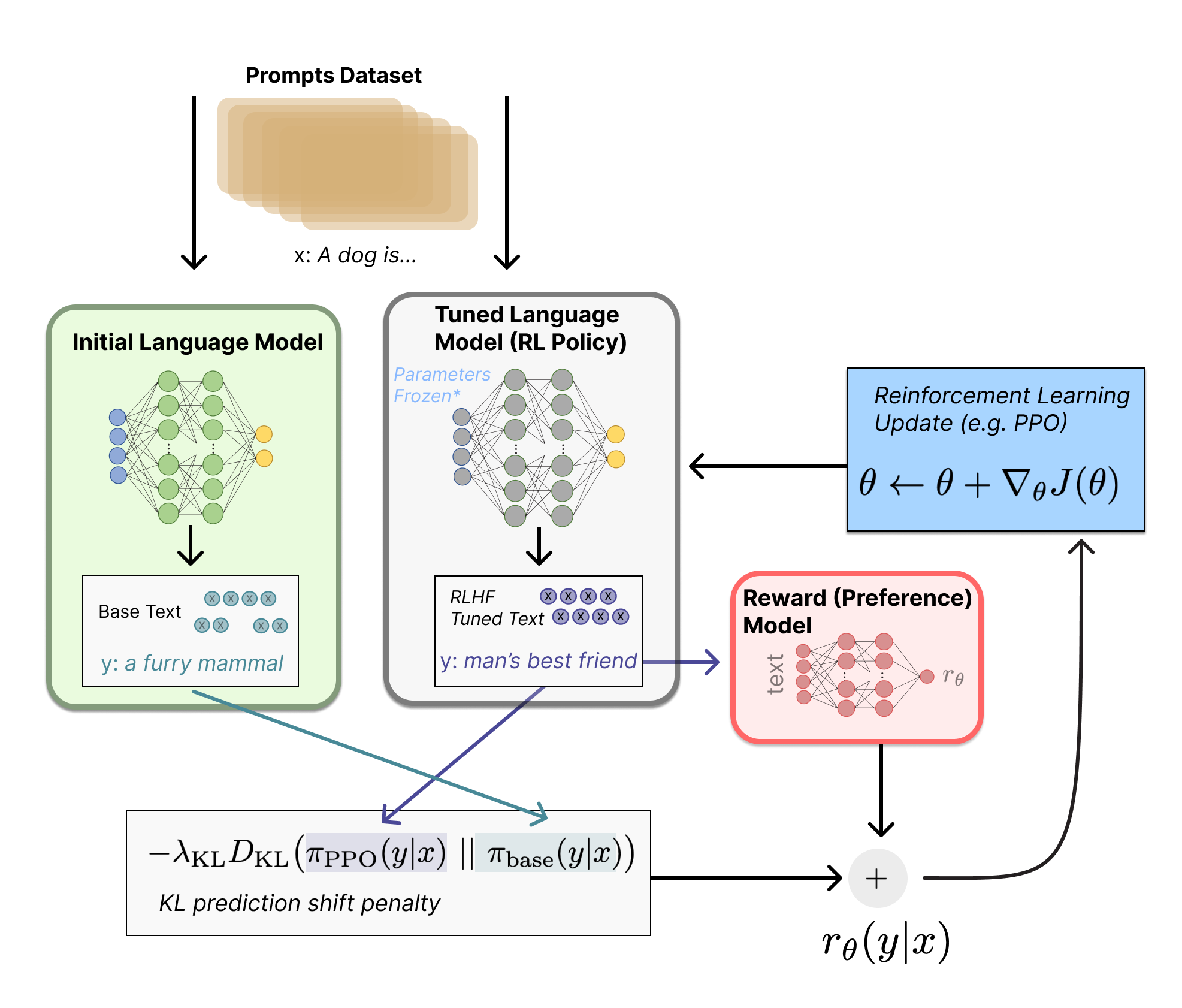

- Illustrating Reinforcement Learning from Human Feedback (RLHF) | N. Lambert, L. Castricato, L. von Werra, and A. Havrilla - Hugging Face
