Sequence to Sequence (Seq2Seq)

Revision as of 20:32, 30 June 2019

- Open Seq2Seq | NVIDIA

- Recurrent Neural Network (RNN)

- Autoencoder (AE) / Encoder-Decoder

- Attention Models

- Natural Language Processing (NLP)

- Assistants

- Attention Mechanism/Transformer Model

- NLP Keras model in browser with TensorFlow.js

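- Understanding Encoder-Decoder Sequence to Sequence Model | Simeon Kostadinov - Towards Data Science (http://towardsdatascience.com/understanding-encoder-decoder-sequence-to-sequence-model-679e04af4346)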

- Visualizing A Neural Machine Translation Model (Mechanics of Seq2seq Models With Attention) | Jay Alammar

Seq2Seq is a general-purpose encoder-decoder architecture that can be used for machine translation, text summarization, conversational modeling, image captioning, interpreting dialects of software code, and more.

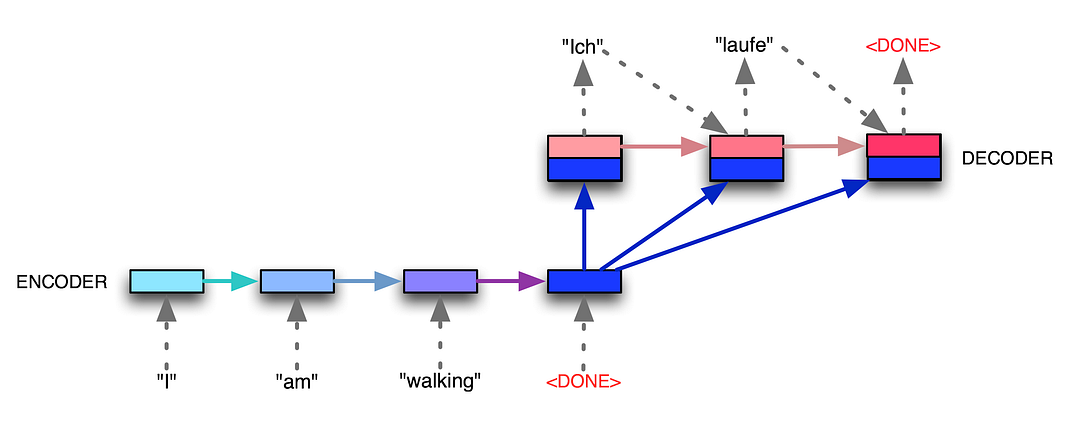

We essentially have two different recurrent neural networks tied together here: the encoder RNN (bottom left boxes) listens to the input tokens until it gets a special <DONE> token, and then the decoder RNN (top right boxes) takes over and starts generating tokens, also finishing with its own <DONE> token. The encoder RNN evolves its internal state (depicted by light blue changing to dark blue while the English sentence tokens come in), and then once the <DONE> token arrives, we take the final encoder state (the dark blue box) and pass it, unchanged and repeatedly, into the decoder RNN along with every single generated German token. The decoder RNN also has its own dynamic internal state, going from light red to dark red. Voila! Variable-length input, variable-length output, from a fixed-size architecture.

seq2seq: the clown car of deep learning | Dev Nag - Medium
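The data flow described in the passage above can be sketched in a few lines of plain Python. This is a minimal illustration, not code from the quoted article: the simple tanh recurrence, the greedy argmax decoding, the sizes, and every name (`encode`, `decode`, `W_ctx`, and so on) are assumptions made for the example, and the weights are random rather than trained.

```python
import math
import random

random.seed(0)

VOCAB = 6    # hypothetical vocabulary size
DONE = 0     # token id standing in for the <DONE> marker
HIDDEN = 4   # size of the fixed-length state vectors

def rand_matrix(rows, cols):
    """Random weights; a real model would learn these by training."""
    return [[random.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]

def matvec(m, v):
    """Matrix-vector product on nested lists."""
    return [sum(w * x for w, x in zip(row, v)) for row in m]

def step(*terms):
    """tanh of the element-wise sum of the given vectors."""
    return [math.tanh(sum(vals)) for vals in zip(*terms)]

E_in = rand_matrix(VOCAB, HIDDEN)     # encoder token embeddings
W_enc = rand_matrix(HIDDEN, HIDDEN)   # encoder recurrence
E_out = rand_matrix(VOCAB, HIDDEN)    # decoder token embeddings
W_dec = rand_matrix(HIDDEN, HIDDEN)   # decoder recurrence
W_ctx = rand_matrix(HIDDEN, HIDDEN)   # projects the final encoder state
W_vocab = rand_matrix(VOCAB, HIDDEN)  # decoder state -> token scores

def encode(tokens):
    """Consume input tokens until <DONE>; return the final encoder state."""
    h = [0.0] * HIDDEN
    for t in tokens:
        h = step(E_in[t], matvec(W_enc, h))
        if t == DONE:
            break
    return h

def decode(context, max_len=10):
    """Generate tokens until <DONE> (or max_len), feeding the same
    unchanged encoder state into every decoder step."""
    s = [0.0] * HIDDEN
    prev = DONE  # assumption: <DONE> doubles as the start-of-output token
    ctx_term = matvec(W_ctx, context)  # identical at every step
    out = []
    for _ in range(max_len):
        s = step(E_out[prev], matvec(W_dec, s), ctx_term)
        scores = matvec(W_vocab, s)
        prev = max(range(VOCAB), key=scores.__getitem__)  # greedy choice
        out.append(prev)
        if prev == DONE:
            break
    return out

context = encode([3, 1, 4, DONE])
generated = decode(context)
```

Because the weights are untrained, the generated token ids carry no meaning. The point is only the shape of the computation: an input of any length is compressed into one fixed-size `context` vector, and the decoder reuses that vector at every step while its own state `s` evolves.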