Difference between revisions of "Evaluation - Measures"

Revision as of 12:07, 22 September 2018


Error Metric

Predictive modeling works on a constructive feedback principle: you build a model, get feedback from metrics, make improvements, and continue until you achieve a desirable accuracy. Evaluation metrics explain the performance of a model. An important aspect of evaluation metrics is their capability to discriminate among model results. 7 Important Model Evaluation Error Metrics Everyone should know | Tavish Srivastava

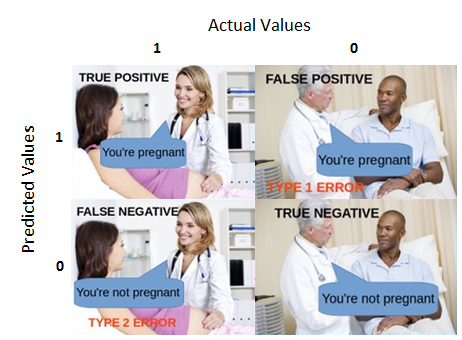

Confusion Matrix

A confusion matrix is a performance measurement for machine learning classification: a table that counts predictions by actual class versus predicted class. Understanding Confusion Matrix | Sarang Narkhede - Medium
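As a minimal sketch of how the four cells of a binary confusion matrix can be counted, the following plain-Python example tallies true positives, false positives, false negatives, and true negatives. The function name `confusion_counts`, the `positive` parameter, and the sample labels are illustrative assumptions, not taken from the cited article.

```python
def confusion_counts(y_true, y_pred, positive=1):
    """Count the four confusion-matrix cells for a binary classifier.

    `positive` names the label treated as the positive class (assumed 1 here).
    """
    tp = fp = fn = tn = 0
    for actual, predicted in zip(y_true, y_pred):
        if predicted == positive:
            if actual == positive:
                tp += 1  # predicted positive, actually positive
            else:
                fp += 1  # predicted positive, actually negative
        else:
            if actual == positive:
                fn += 1  # predicted negative, actually positive
            else:
                tn += 1  # predicted negative, actually negative
    return {"tp": tp, "fp": fp, "fn": fn, "tn": tn}


# Hypothetical labels, for illustration only.
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
y_pred = [1, 0, 0, 1, 0, 1, 1, 0]
counts = confusion_counts(y_true, y_pred)
print(counts)
```

The metrics in the following sections can all be derived from these four counts.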

Precision & Recall

Precision (also called positive predictive value) is the fraction of relevant instances among the retrieved instances, while recall (also known as sensitivity) is the fraction of relevant instances that have been retrieved over the total number of relevant instances. Both precision and recall are therefore based on an understanding and measure of relevance. Precision and recall | Wikipedia
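In confusion-matrix terms, precision is TP / (TP + FP) and recall is TP / (TP + FN). The sketch below computes both from raw counts; the function names and the sample counts are hypothetical, and returning 0.0 when the denominator is zero is one common convention assumed here, not a universal rule.

```python
def precision(tp, fp):
    """Fraction of predicted positives that are actually positive."""
    denominator = tp + fp
    # Assumed convention: report 0.0 when nothing was predicted positive.
    return tp / denominator if denominator else 0.0


def recall(tp, fn):
    """Fraction of actual positives that were predicted positive."""
    denominator = tp + fn
    # Assumed convention: report 0.0 when there are no actual positives.
    return tp / denominator if denominator else 0.0


# Hypothetical counts: 8 true positives, 2 false positives, 8 false negatives.
p = precision(tp=8, fp=2)
r = recall(tp=8, fn=8)
print(p, r)
```

With these counts the classifier is right about most of what it flags (high precision) but misses half of the actual positives (lower recall).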

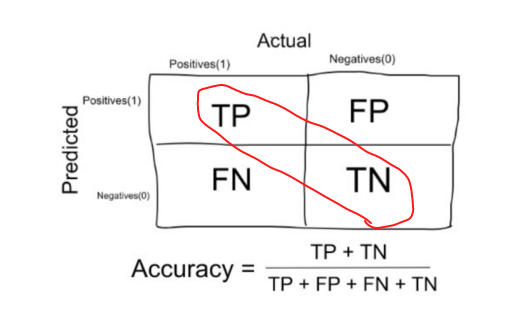

Accuracy

The number of correct predictions made by the model divided by the total number of predictions made.
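Equivalently, accuracy is (TP + TN) / (TP + TN + FP + FN). A minimal sketch, assuming labels are given as two equal-length Python lists (the function name and sample data are illustrative):

```python
def accuracy(y_true, y_pred):
    """Share of predictions that match the actual labels."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    if not y_true:
        raise ValueError("accuracy is undefined for empty input")
    correct = sum(1 for actual, predicted in zip(y_true, y_pred) if actual == predicted)
    return correct / len(y_true)


# Hypothetical labels, for illustration only.
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
y_pred = [1, 0, 0, 1, 0, 1, 1, 0]
score = accuracy(y_true, y_pred)
print(score)
```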

F1 Score (F-Measure)
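The F1 score is conventionally defined as the harmonic mean of precision and recall, F1 = 2PR / (P + R). The sketch below assumes precision and recall have already been computed; the function name and the sample values are hypothetical.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall."""
    total = precision + recall
    # Assumed convention: report 0.0 when both inputs are zero.
    return 2 * precision * recall / total if total else 0.0


# Hypothetical inputs: precision 0.8, recall 0.5.
f1 = f1_score(0.8, 0.5)
print(round(f1, 4))
```

Because it is a harmonic mean, the score is pulled toward the lower of the two inputs, so a model cannot score well by maximizing only one of them.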

Receiver Operating Characteristic (ROC) Curves and Area Under the Curve (AUC)

Example Use: Tradeoffs

'Sensitivity' & 'Specificity':

'True Positive Rate' & 'False Positive Rate':
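The terms above relate as follows: sensitivity is the true positive rate, TP / (TP + FN); specificity is TN / (TN + FP); and the false positive rate is FP / (FP + TN), which equals 1 - specificity. A ROC curve plots the true positive rate against the false positive rate as the decision threshold varies, and AUC is the area under that curve. The sketch below is a minimal illustration under stated assumptions: scores at or above the threshold are predicted positive, both classes are present in the data, and the function names and sample scores are hypothetical.

```python
def rates_at_threshold(y_true, scores, threshold):
    """Return (TPR, FPR) when scores >= threshold are predicted positive.

    Assumes y_true contains at least one 1 and at least one 0.
    """
    tp = fp = fn = tn = 0
    for actual, score in zip(y_true, scores):
        predicted = 1 if score >= threshold else 0
        if predicted == 1 and actual == 1:
            tp += 1
        elif predicted == 1 and actual == 0:
            fp += 1
        elif predicted == 0 and actual == 1:
            fn += 1
        else:
            tn += 1
    tpr = tp / (tp + fn)  # sensitivity
    fpr = fp / (fp + tn)  # 1 - specificity
    return tpr, fpr


def roc_points(y_true, scores):
    """Sweep thresholds from high to low and collect (FPR, TPR) points."""
    points = [(0.0, 0.0)]  # threshold above every score: nothing predicted positive
    for threshold in sorted(set(scores), reverse=True):
        tpr, fpr = rates_at_threshold(y_true, scores, threshold)
        points.append((fpr, tpr))
    return points


def auc(points):
    """Area under the ROC points using the trapezoidal rule."""
    area = 0.0
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        area += (x1 - x0) * (y0 + y1) / 2
    return area


# Hypothetical labels and classifier scores, for illustration only.
y_true = [0, 0, 1, 1]
scores = [0.1, 0.4, 0.35, 0.8]

tpr, fpr = rates_at_threshold(y_true, scores, threshold=0.4)
sensitivity, specificity = tpr, 1 - fpr
curve = roc_points(y_true, scores)
area = auc(curve)
print(sensitivity, specificity, area)
```

Lowering the threshold raises sensitivity at the cost of specificity, which is the tradeoff the ROC curve makes visible.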