Difference between revisions of "End-to-End Speech"

m |

m |

||

| Line 8: | Line 8: | ||

[http://www.google.com/search?q=Translatotron+End-to-End+neural+networks+deep+machine+learning+ML ...Google search] | [http://www.google.com/search?q=Translatotron+End-to-End+neural+networks+deep+machine+learning+ML ...Google search] | ||

| + | * [[End-to-End Speech]] ... [[Synthesize Speech]] ... [[Speech Recognition]] | ||

* [[Autoencoder (AE) / Encoder-Decoder]] | * [[Autoencoder (AE) / Encoder-Decoder]] | ||

* [[Sequence to Sequence (Seq2Seq)]] | * [[Sequence to Sequence (Seq2Seq)]] | ||

* [[Natural Language Processing (NLP)]] | * [[Natural Language Processing (NLP)]] | ||

| − | |||

* [[Assistants]] ... [[Hybrid Assistants]] ... [[Agents]] ... [[Negotiation]] | * [[Assistants]] ... [[Hybrid Assistants]] ... [[Agents]] ... [[Negotiation]] | ||

* [[Language Translation]] | * [[Language Translation]] | ||

Revision as of 06:53, 13 March 2023

YouTube search... ...Google search

- End-to-End Speech ... Synthesize Speech ... Speech Recognition

- Autoencoder (AE) / Encoder-Decoder

- Sequence to Sequence (Seq2Seq)

- Natural Language Processing (NLP)

- Assistants ... Hybrid Assistants ... Agents ... Negotiation

- Language Translation

Translatotron

YouTube search... ...Google search

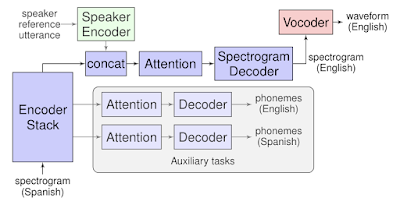

Translatotron is the first end-to-end model that can directly translate speech from one language into speech in another language. It is also able to retain the source speaker’s voice in the translated speech. We hope that this work can serve as a starting point for future research on end-to-end speech-to-speech translation systems. ... demonstrating that a single sequence-to-sequence model can directly translate speech from one language into speech in another language, without relying on an intermediate text representation in either language, as is required in cascaded systems.... Translatotron is based on a sequence-to-sequence network which takes source spectrograms as input and generates spectrograms of the translated content in the target language. It also makes use of two other separately trained components: a neural vocoder that converts output spectrograms to time-domain waveforms, and, optionally, a speaker encoder that can be used to maintain the character of the source speaker’s voice in the synthesized translated speech. During training, the sequence-to-sequence model uses a multitask objective to predict source and target transcripts at the same time as generating target spectrograms. However, no transcripts or other intermediate text representations are used during inference. Translatotron | Ye Jia and Ron Weiss - Google AI