Difference between revisions of "Reinforcement Learning (RL) from Human Feedback (RLHF)"


Line 16:

* [https://medium.com/@sthanikamsanthosh1994/reinforcement-learning-from-human-feedback-rlhf-532e014fb4ae Reinforcement Learning from Human Feedback(RLHF)-ChatGPT | Sthanikam Santhosh - Medium]

* [https://www.deepmind.com/blog/learning-through-human-feedback Learning through human feedback |] [[Google]] DeepMind

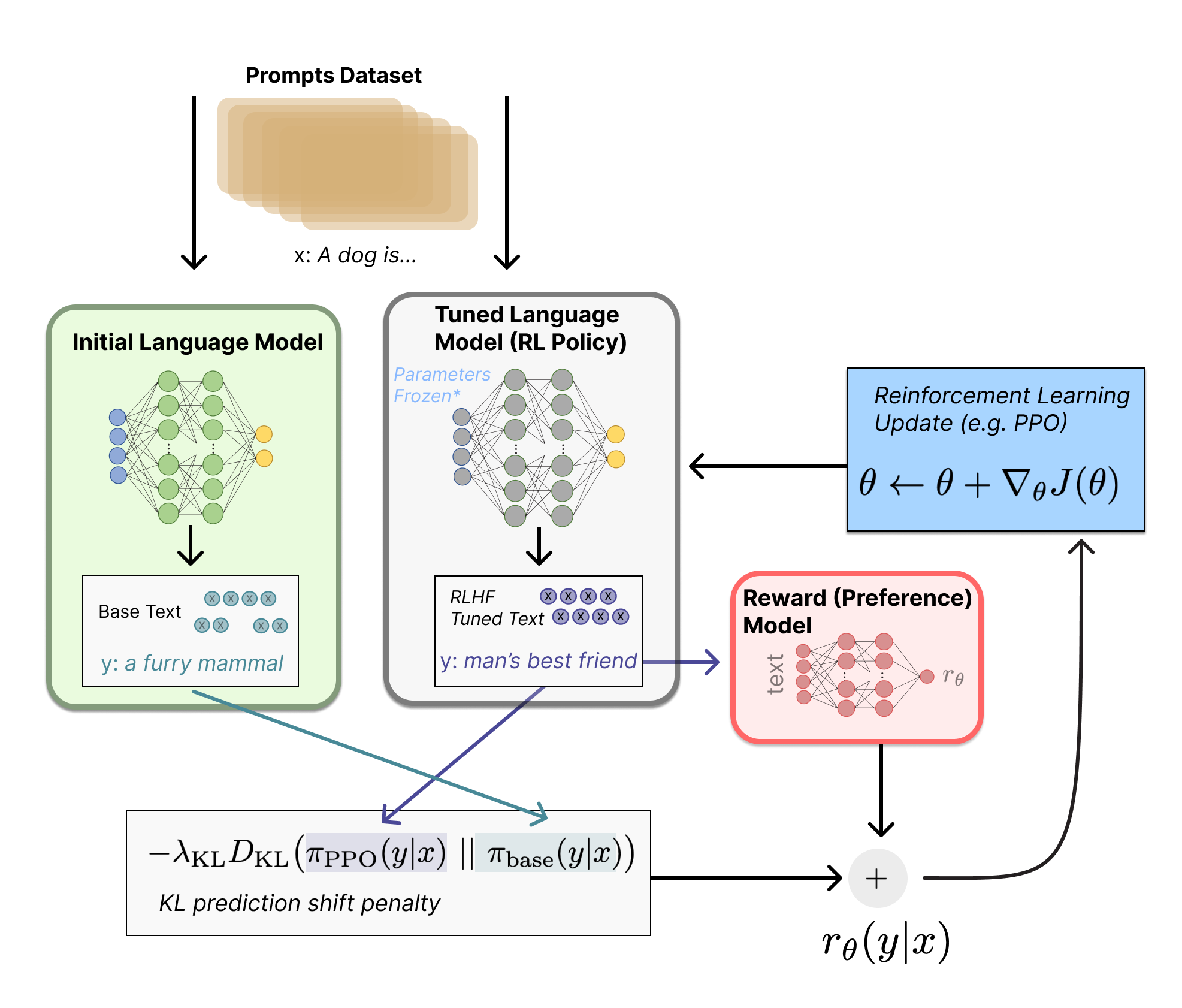

− * [https://pub.towardsai.net/paper-review-summarization-using-reinforcement-learning-from-human-feedback-e000a66404ff Paper Review: Summarization using Reinforcement Learning From Human Feedback | - Towards AI] ... AI Alignment, Reinforcement Learning from Human Feedback, [

+ * [https://pub.towardsai.net/paper-review-summarization-using-reinforcement-learning-from-human-feedback-e000a66404ff Paper Review: Summarization using Reinforcement Learning From Human Feedback | - Towards AI] ... AI Alignment, Reinforcement Learning from Human Feedback, [[ Proximal Policy Optimization (PPO)]]

Revision as of 13:46, 29 January 2023

YouTube search... ...Google search

- ChatGPT

- Reinforcement Learning (RL)

- Generative Pre-trained Transformer (GPT)

- Introduction to Reinforcement Learning with Human Feedback | Edwin Chen - Surge

- What is Reinforcement Learning with Human Feedback (RLHF)? | Michael Spencer

- Compendium of problems with RLHF | Raphael S - LessWrong

- Reinforcement Learning from Human Feedback(RLHF)-ChatGPT | Sthanikam Santhosh - Medium

- Learning through human feedback | Google DeepMind

- Paper Review: Summarization using Reinforcement Learning From Human Feedback | - Towards AI ... AI Alignment, Reinforcement Learning from Human Feedback, Proximal Policy Optimization (PPO)

Reinforcement Learning from Human Feedback (RLHF) - a simplified explanation | Joao Lages
