Difference between revisions of "Neural Architecture"

Revision as of 21:34, 6 March 2022

- Hierarchical Temporal Memory (HTM)

- MIT’s AI can train neural networks faster than ever before | Christine Fisher - Engadget

- Other codeless options, Code Generators, Drag n' Drop

- Automated Learning

- Auto Keras

- Evolutionary Computation / Genetic Algorithms

- Hyperparameters Optimization

- Model Search

Neural Architecture Search (NAS)

- Literature on Neural Architecture Search | AutoML.org

- Awesome NAS; a curated list

- Neural Architecture Search (NAS) with Reinforcement Learning | Wikipedia

- Neural Architecture Search (NAS) with Evolution | Wikipedia

- Multi-objective Neural architecture search | Wikipedia

- Neural Architecture Search for Deep Face Recognition | Ning Zhu

- Need to find the best AI model for your problem? Try neural architecture search | Ben Dickson - TDW

NAS algorithms are efficient problem solvers

An alternative to manual design is “neural architecture search” (NAS), a series of machine learning techniques that can help discover optimal neural networks for a given problem. Neural architecture search is a big area of research and holds a lot of promise for future applications of deep learning.

Search spaces for deep learning

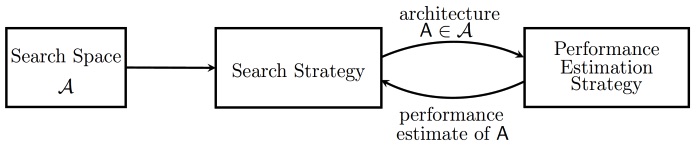

Various approaches to Neural Architecture Search (NAS) have designed networks that are on par with, or even outperform, hand-designed architectures. Methods for NAS can be categorized according to the search space, search strategy, and performance estimation strategy used:

- The search space defines which type of ANN can be designed and optimized in principle.

- The search strategy defines which strategy is used to find optimal ANNs within the search space.

- Obtaining the performance of an ANN is costly, as this requires training the ANN first. Therefore, performance estimation strategies are used to obtain less costly estimates of a model's performance.

Neural Architecture Search | Wikipedia
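The three components above can be sketched in a few lines of Python. This is a minimal illustration rather than any particular NAS method: the search space (`SEARCH_SPACE`), the use of plain random search as the search strategy, and the scoring function `estimate_performance` are all assumptions made for this example. In particular, the score is a made-up formula standing in for a real performance estimate, since no network is actually trained here.

```python
import random

# Search space (hypothetical): which architectures can be expressed at all.
SEARCH_SPACE = {
    "num_layers": [1, 2, 3, 4],
    "units": [16, 32, 64, 128],
    "activation": ["relu", "tanh"],
}


def sample_architecture(rng):
    """Search strategy (random search): pick one option per dimension."""
    return {name: rng.choice(options) for name, options in SEARCH_SPACE.items()}


def estimate_performance(arch):
    """Performance estimation stand-in.

    A real system would train the network (or use a cheaper estimate such as
    training for fewer epochs). This toy formula is invented for illustration:
    it simply prefers a total capacity near 128 units and slightly favors ReLU.
    """
    capacity = arch["num_layers"] * arch["units"]
    score = 1.0 - abs(capacity - 128) / 512
    if arch["activation"] == "relu":
        score += 0.05
    return score


def random_search(num_trials, seed=0):
    """Evaluate num_trials sampled architectures and keep the best one."""
    rng = random.Random(seed)
    best_arch, best_score = None, float("-inf")
    for _ in range(num_trials):
        arch = sample_architecture(rng)
        score = estimate_performance(arch)
        if score > best_score:
            best_arch, best_score = arch, score
    return best_arch, best_score


if __name__ == "__main__":
    arch, score = random_search(num_trials=50)
    print("best architecture:", arch)
    print("estimated score:", round(score, 3))
```

Swapping `sample_architecture` for an evolutionary or reinforcement-learning-based proposer changes the search strategy without touching the other two parts, which is why the three-way split is a convenient way to compare NAS methods.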

Differentiable Neural Computer (DNC)