Deep Reinforcement Learning (DRL)


Revision as of 07:26, 27 May 2018

- Gym | OpenAI

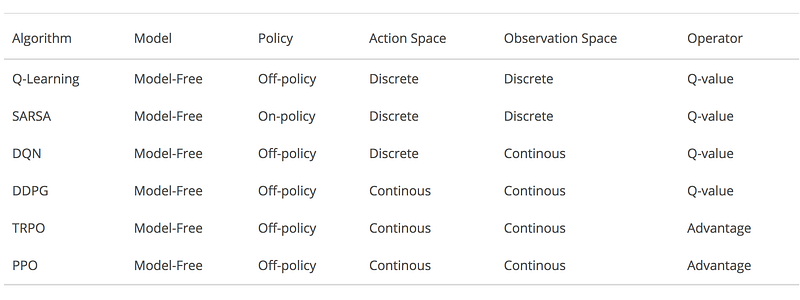

- Introduction to Various Reinforcement Learning Algorithms. Part I (Q-Learning, SARSA, DQN, DDPG) | Steeve Huang

- Introduction to Various Reinforcement Learning Algorithms. Part II (TRPO, PPO) | Steeve Huang

- Guide (http://deeplearning4j.org/deepreinforcementlearning.html)

Learning; MDP, SARSA

- Markov Decision Process (MDP)

- Deep Q Learning (DQN)

- Neural Coreference

- State-Action-Reward-State-Action (SARSA)

Policy Gradient Methods

- Deep Deterministic Policy Gradient (DDPG)

- Trust Region Policy Optimization (TRPO)

- Proximal Policy Optimization (PPO)

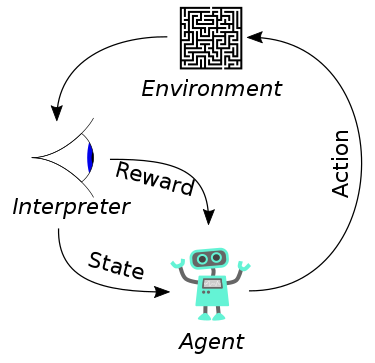

Reinforcement learning algorithms are goal-oriented: they learn how to attain a complex objective (goal) or to maximize along a particular dimension over many steps, for example by maximizing the points won in a game over many moves. Reinforcement learning addresses the difficult problem of correlating immediate actions with the delayed returns they produce. Like humans, these algorithms sometimes have to wait a while to see the fruit of their decisions. They operate in a delayed-return environment, where it can be difficult to tell which action led to which outcome over many time steps.
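One common way to connect an early action to a reward that arrives later is the discounted return, where each step is credited with its own reward plus a discounted share of everything that follows. The sketch below is illustrative only: the function name `discounted_returns`, the discount factor `gamma`, and the toy reward sequence are assumptions made for this example and do not come from any particular library.

```python
def discounted_returns(rewards, gamma=0.99):
    """Compute G_t = r_t + gamma * r_{t+1} + gamma^2 * r_{t+2} + ... for each step t.

    `rewards` is the list of rewards from one episode, in time order.
    `gamma` (assumed name) is the discount factor, between 0 and 1.
    """
    returns = [0.0] * len(rewards)
    running = 0.0
    # Walk backwards so each step can reuse the return of the step after it.
    for t in reversed(range(len(rewards))):
        running = rewards[t] + gamma * running
        returns[t] = running
    return returns


# Toy episode (hypothetical): the only reward arrives on the final move.
rewards = [0.0, 0.0, 0.0, 1.0]
print(discounted_returns(rewards, gamma=0.5))  # [0.125, 0.25, 0.5, 1.0]
```

In this toy episode the first three moves earn nothing immediately, yet each still receives a nonzero return because it precedes the final reward. The earlier the move, the smaller its share.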