Difference between revisions of "Reinforcement Learning (RL) from Human Feedback (RLHF)"


* [[Reinforcement Learning (RL)]]

* [[Generative Pre-trained Transformer (GPT)]]

* [https://www.surgehq.ai/blog/introduction-to-reinforcement-learning-with-human-feedback-rlhf-series-part-1 Introduction to Reinforcement Learning with Human Feedback | Edwin Chen - Surge]

* [https://aisupremacy.substack.com/p/what-is-reinforcement-learning-with What is Reinforcement Learning with Human Feedback (RLHF)? | Michael Spencer]


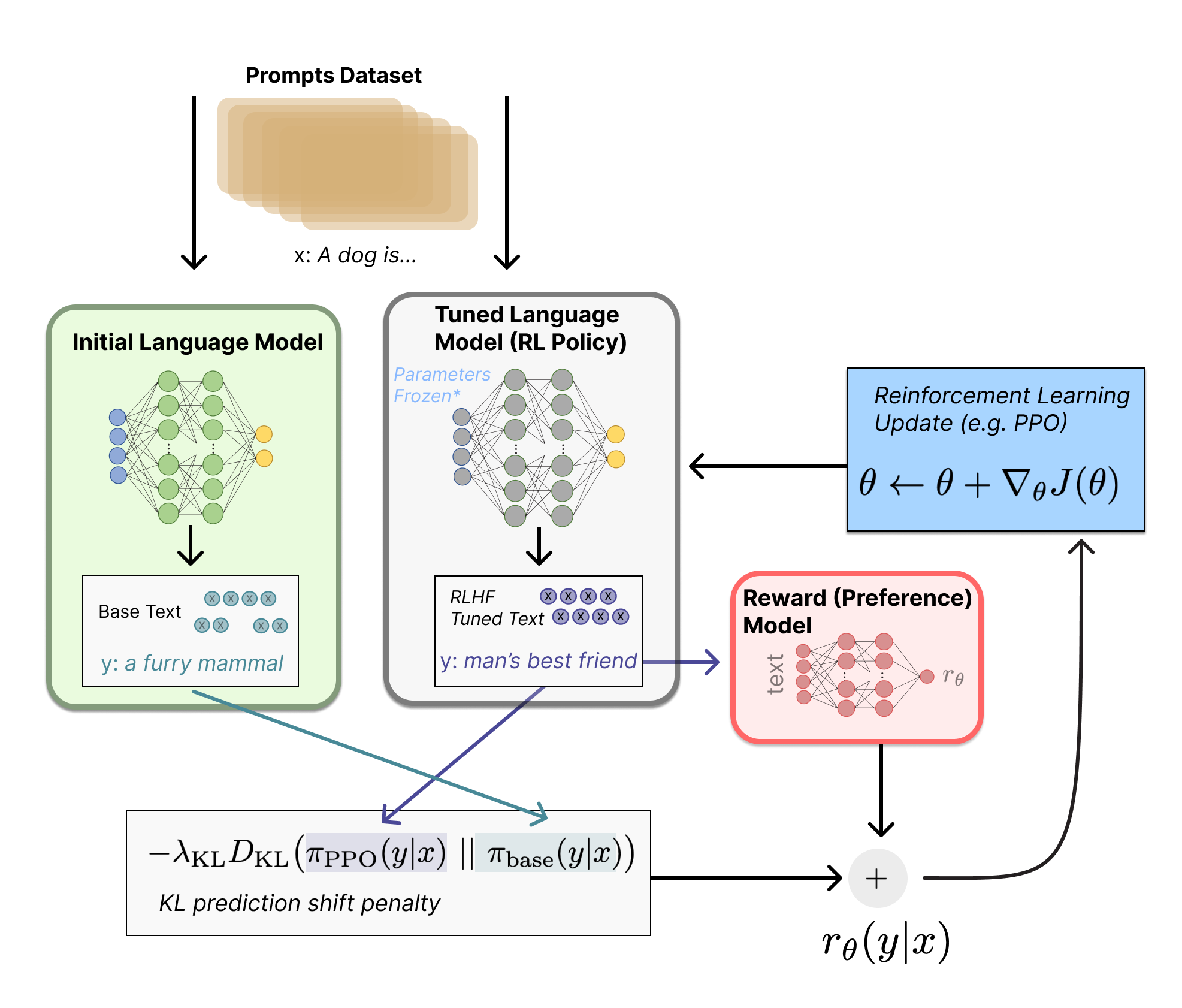

<img src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/blog/rlhf/rlhf.png" width="800"> | <img src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/blog/rlhf/rlhf.png" width="800"> | ||

| − | + | [https://huggingface.co/blog/rlhf Illustrating Reinforcement Learning from Human Feedback (RLHF) | N. Lambert, L. Castricato, L. von Werra, and A. Havrilla - Hugging Face] | |

{|<!-- T -->

Revision as of 12:25, 29 January 2023


- ChatGPT

- Reinforcement Learning (RL)

- Generative Pre-trained Transformer (GPT)

- Introduction to Reinforcement Learning with Human Feedback | Edwin Chen - Surge

- What is Reinforcement Learning with Human Feedback (RLHF)? | Michael Spencer

- Compendium of problems with RLHF | Raphael S - LessWrong

- Reinforcement Learning from Human Feedback(RLHF)-ChatGPT | Sthanikam Santhosh - Medium

- Learning through human feedback | Google DeepMind

- Paper Review: Summarization using Reinforcement Learning From Human Feedback | - Towards AI ... AI Alignment, Reinforcement Learning from Human Feedback, Proximal Policy Optimization (PPO)
