Difference between revisions of "Reinforcement Learning (RL) from Human Feedback (RLHF)"


| Line 35: | Line 35: | ||

{| class="wikitable" style="width: 550px;" | {| class="wikitable" style="width: 550px;" | ||

|| | || | ||

| − | <youtube> | + | <youtube>wA8rjKueB3Q</youtube> |

| − | <b> | + | <b>How ChatGPT works - From Transformers to Reinforcement Learning with Human Feedback (RLHF) |

| − | </b><br> | + | </b><br>ChatGPT has recently been released by OpenAI, and it is fundamentally a next-token (next-word) prediction model: given a prompt, it predicts the next token(s). Trained on a massive internet corpus, it becomes powerful enough to perform many tasks zero-shot, such as summarization, code completion, and question answering. |

| + | |||

| + | Amidst the hype around ChatGPT, it can be easy to assume that the model can reason and think for itself. Here, we try to demystify how the model works, starting with a basic introduction to Transformers and then showing how the model's output can be improved using Reinforcement Learning with Human Feedback (RLHF). | ||

| + | |||

| + | Slides and code here: https://github.com/tanchongmin/Tensor... | ||

| + | |||

| + | Transformer Introduction here: https://www.youtube.com/watch?v=iBamM... | ||

| + | |||

| + | References: | ||

| + | Original Transformer Paper (Attention is all you need): https://arxiv.org/pdf/1706.03762.pdf | ||

| + | GPT-3 Paper (Language Models are Few-Shot Learners): https://arxiv.org/pdf/2005.14165.pdf | ||

| + | DialoGPT Paper (conversational AI by Microsoft): https://arxiv.org/pdf/1911.00536.pdf | ||

| + | InstructGPT Paper (with RLHF): https://arxiv.org/pdf/2203.02155.pdf | ||

| + | |||

| + | Illustrated Transformer: https://jalammar.github.io/illustrate... | ||

| + | Illustrated GPT-2: https://jalammar.github.io/illustrate... | ||

| + | |||

| + | * 0:00 Introduction | ||

| + | * 3:09 Embedding Space | ||

| + | * 15:35 Overall Transformer Architecture | ||

| + | * 36:06 Transformer (Details) | ||

| + | * 49:28 GPT Architecture | ||

| + | * 56:38 GPT Training and Loss Function | ||

| + | * 1:05:25 Live Demo of GPT Next Token Generation and Attention Visualisation | ||

| + | * 1:16:55 Conversational AI | ||

| + | * 1:19:00 Reinforcement Learning from Human Feedback (RLHF) | ||

| + | * 1:45:15 Discussion | ||

| + | |||

| + | [[User:BPeat|BPeat]] ([[User talk:BPeat|talk]]) 08:24, 29 January 2023 (EST) | ||

| + | |||

| + | AI and ML enthusiast. I like to think about the essence behind AI breakthroughs and explain it in a simple and relatable way. I am also an avid game creator. | ||

| + | |||

| + | Online AI blog: https://delvingintotech.wordpress.com/. | ||

| + | LinkedIn: https://www.linkedin.com/in/chong-min... | ||

| + | Twitch: https://www.twitch.tv/johncm99 | ||

| + | Twitter: https://twitter.com/johntanchongmin | ||

| + | Try out my games here: https://simmer.io/@chongmin | ||

|} | |} | ||

|}<!-- B --> | |}<!-- B --> | ||

Revision as of 09:24, 29 January 2023


- Reinforcement Learning (RL)

- ChatGPT

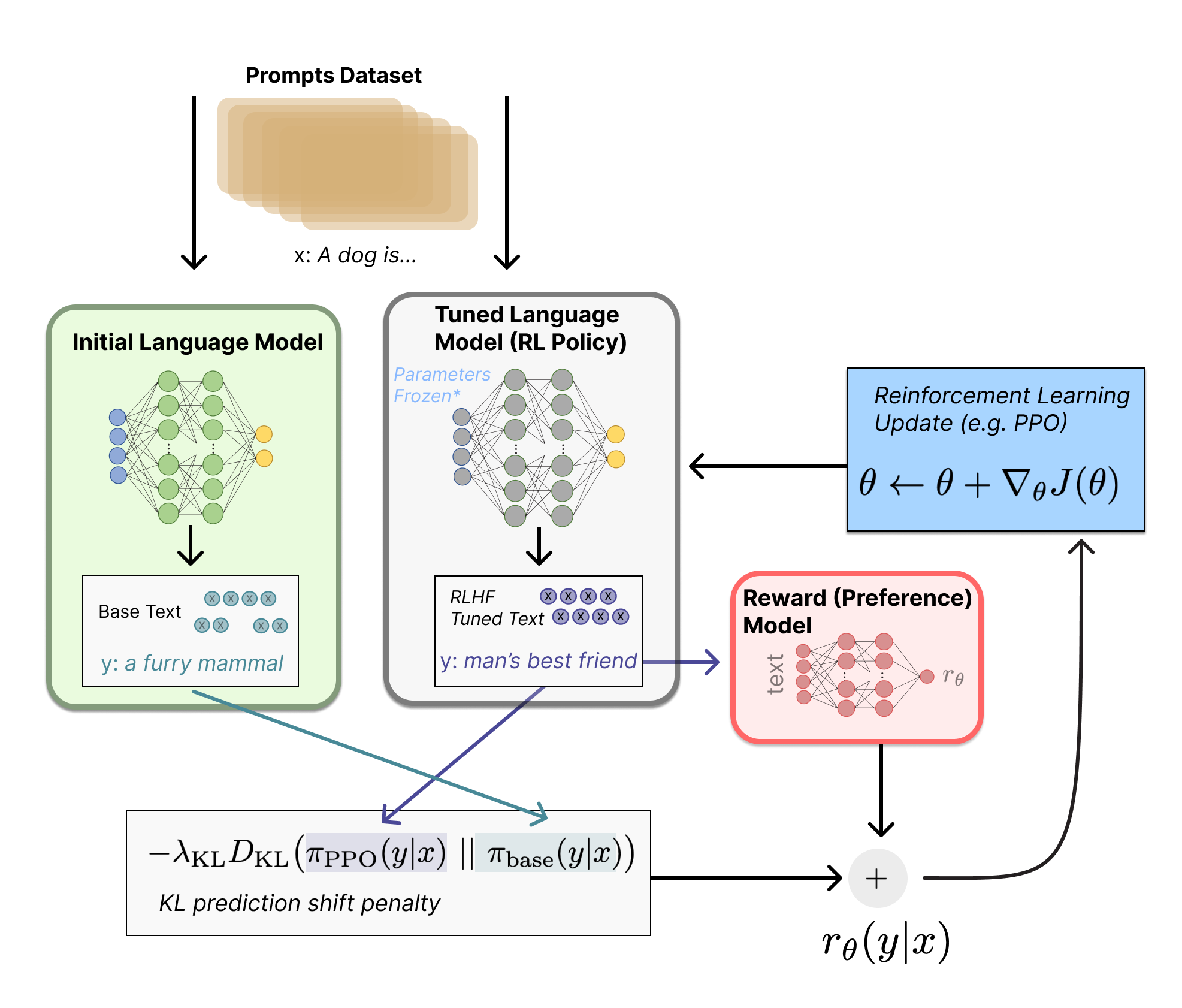

- Illustrating Reinforcement Learning from Human Feedback (RLHF) | N. Lambert, L. Castricato, L. von Werra, and A. Havrilla - Hugging Face
