Difference between revisions of "Generative Pre-trained Transformer (GPT)"

m (→ChatGPT) |

m |

||

| Line 14: | Line 14: | ||

* [[Natural Language Generation (NLG)]] | * [[Natural Language Generation (NLG)]] | ||

* [[Natural Language Tools & Services]] | * [[Natural Language Tools & Services]] | ||

| + | * [[Attention]] | ||

* [[Generated Image]] | * [[Generated Image]] | ||

* [https://openai.com/blog/gpt-2-6-month-follow-up/ OpenAI Blog] | [[OpenAI]] | * [https://openai.com/blog/gpt-2-6-month-follow-up/ OpenAI Blog] | [[OpenAI]] | ||

| Line 88: | Line 89: | ||

|} | |} | ||

|}<!-- B --> | |}<!-- B --> | ||

| − | |||

| − | |||

| − | |||

{|<!-- T --> | {|<!-- T --> | ||

| valign="top" | | | valign="top" | | ||

| Line 122: | Line 120: | ||

|} | |} | ||

|}<!-- B --> | |}<!-- B --> | ||

| + | |||

| + | |||

| + | |||

| + | |||

| + | |||

| + | = Let's build GPT: from scratch, in code, spelled out | Andrej Karpathy = | ||

| + | |||

| + | |||

| + | {|<!-- T --> | ||

| + | | valign="top" | | ||

| + | {| class="wikitable" style="width: 550px;" | ||

| + | || | ||

| + | <youtube>kCc8FmEb1nY</youtube> | ||

| + | <b>Let's build GPT: from scratch, in code, spelled out. | ||

| + | </b><br>We build a Generatively Pretrained Transformer (GPT), following the paper "Attention is All You Need" and OpenAI's GPT-2 / GPT-3. We talk about connections to ChatGPT, which has taken the world by storm. We watch GitHub Copilot, itself a GPT, help us write a GPT (meta :D!) . I recommend people watch the earlier makemore videos to get comfortable with the autoregressive language modeling framework and basics of tensors and PyTorch nn, which we take for granted in this video. | ||

| + | |||

| + | Links: | ||

| + | - Google colab for the video: https://colab.research.google.com/dri... | ||

| + | - GitHub repo for the video: https://github.com/karpathy/ng-video-... | ||

| + | - Playlist of the whole Zero to Hero series so far: https://www.youtube.com/watch?v=VMj-3... | ||

| + | - nanoGPT repo: https://github.com/karpathy/nanoGPT | ||

| + | - my website: https://karpathy.ai | ||

| + | - my twitter: https://twitter.com/karpathy | ||

| + | - our Discord channel: https://discord.gg/3zy8kqD9Cp | ||

| + | |||

| + | Supplementary links: | ||

| + | - Attention is All You Need paper: https://arxiv.org/abs/1706.03762 | ||

| + | - OpenAI GPT-3 paper: https://arxiv.org/abs/2005.14165 | ||

| + | - OpenAI ChatGPT blog post: https://openai.com/blog/chatgpt/ | ||

| + | - The GPU I'm training the model on is from Lambda GPU Cloud, I think the best and easiest way to spin up an on-demand GPU instance in the cloud that you can ssh to: https://lambdalabs.com . If you prefer to work in notebooks, I think the easiest path today is Google Colab. | ||

| + | |||

| + | Suggested exercises: | ||

| + | - EX1: The n-dimensional tensor mastery challenge: Combine the `Head` and `MultiHeadAttention` into one class that processes all the heads in parallel, treating the heads as another batch dimension (answer is in nanoGPT). | ||

| + | - EX2: Train the GPT on your own dataset of choice! What other data could be fun to blabber on about? (A fun advanced suggestion if you like: train a GPT to do addition of two numbers, i.e. a+b=c. You may find it helpful to predict the digits of c in reverse order, as the typical addition algorithm (that you're hoping it learns) would proceed right to left too. You may want to modify the data loader to simply serve random problems and skip the generation of train.bin, val.bin. You may want to mask out the loss at the input positions of a+b that just specify the problem using y=-1 in the targets (see CrossEntropyLoss ignore_index). Does your Transformer learn to add? Once you have this, swole doge project: build a calculator clone in GPT, for all of +-*/. Not an easy problem. You may need Chain of Thought traces.) | ||

| + | - EX3: Find a dataset that is very large, so large that you can't see a gap between train and val loss. Pretrain the transformer on this data, then initialize with that model and finetune it on tiny shakespeare with a smaller number of steps and lower learning rate. Can you obtain a lower validation loss by the use of pretraining? | ||

| + | - EX4: Read some transformer papers and implement one additional feature or change that people seem to use. Does it improve the performance of your GPT? | ||

| + | |||

| + | Chapters: | ||

| + | 00:00:00 intro: ChatGPT, Transformers, nanoGPT, Shakespeare | ||

| + | baseline language modeling, code setup | ||

| + | 00:07:52 reading and exploring the data | ||

| + | 00:09:28 tokenization, train/val split | ||

| + | 00:14:27 data loader: batches of chunks of data | ||

| + | 00:22:11 simplest baseline: bigram language model, loss, generation | ||

| + | 00:34:53 training the bigram model | ||

| + | 00:38:00 port our code to a script | ||

| + | Building the "self-attention" | ||

| + | 00:42:13 version 1: averaging past context with for loops, the weakest form of aggregation | ||

| + | 00:47:11 the trick in self-attention: matrix multiply as weighted aggregation | ||

| + | 00:51:54 version 2: using matrix multiply | ||

| + | 00:54:42 version 3: adding softmax | ||

| + | 00:58:26 minor code cleanup | ||

| + | 01:00:18 positional encoding | ||

| + | 01:02:00 THE CRUX OF THE VIDEO: version 4: self-attention | ||

| + | 01:11:38 note 1: attention as communication | ||

| + | 01:12:46 note 2: attention has no notion of space, operates over sets | ||

| + | 01:13:40 note 3: there is no communication across batch dimension | ||

| + | 01:14:14 note 4: encoder blocks vs. decoder blocks | ||

| + | 01:15:39 note 5: attention vs. self-attention vs. cross-attention | ||

| + | 01:16:56 note 6: "scaled" self-attention. why divide by sqrt(head_size) | ||

| + | Building the Transformer | ||

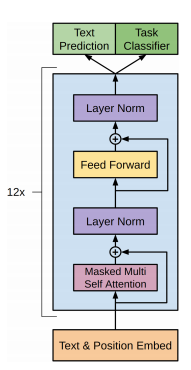

| + | 01:19:11 inserting a single self-attention block to our network | ||

| + | 01:21:59 multi-headed self-attention | ||

| + | 01:24:25 feedforward layers of transformer block | ||

| + | 01:26:48 residual connections | ||

| + | 01:32:51 layernorm (and its relationship to our previous batchnorm) | ||

| + | 01:37:49 scaling up the model! creating a few variables. adding dropout | ||

| + | Notes on Transformer | ||

| + | 01:42:39 encoder vs. decoder vs. both (?) Transformers | ||

| + | 01:46:22 super quick walkthrough of nanoGPT, batched multi-headed self-attention | ||

| + | 01:48:53 back to ChatGPT, GPT-3, pretraining vs. finetuning, RLHF | ||

| + | 01:54:32 conclusions | ||

| + | |||

| + | Corrections: | ||

| + | 00:57:00 Oops "tokens from the future cannot communicate", not "past". Sorry! :) | ||

| + | 01:20:05 Oops I should be using the head_size for the normalization, not C | ||

| + | |||

| + | |<!-- M --> | ||

| + | | valign="top" | | ||

| + | {| class="wikitable" style="width: 550px;" | ||

| + | || | ||

| + | <youtube>9uw3F6rndnA</youtube> | ||

| + | <b>Transformers: The best idea in AI | Andrej Karpathy and Lex Fridman | ||

| + | </b><br>Lex Fridman Podcast full episode: https://www.youtube.com/watch?v=cdiD-... | ||

| + | Please support this podcast by checking out our sponsors: | ||

| + | - Eight Sleep: https://www.eightsleep.com/lex to get special savings | ||

| + | - BetterHelp: https://betterhelp.com/lex to get 10% off | ||

| + | - Fundrise: https://fundrise.com/lex | ||

| + | - Athletic Greens: https://athleticgreens.com/lex to get 1 month of fish oil | ||

| + | |||

| + | GUEST BIO: | ||

| + | Andrej Karpathy is a legendary AI researcher, engineer, and educator. He's the former director of AI at Tesla, a founding member of OpenAI, and an educator at Stanford. | ||

| + | |||

| + | PODCAST INFO: | ||

| + | Podcast website: https://lexfridman.com/podcast | ||

| + | Apple Podcasts: https://apple.co/2lwqZIr | ||

| + | Spotify: https://spoti.fi/2nEwCF8 | ||

| + | RSS: https://lexfridman.com/feed/podcast/ | ||

| + | Full episodes playlist: https://www.youtube.com/playlist?list... | ||

| + | Clips playlist: https://www.youtube.com/playlist?list... | ||

| + | |||

| + | SOCIAL: | ||

| + | - Twitter: https://twitter.com/lexfridman | ||

| + | - LinkedIn: https://www.linkedin.com/in/lexfridman | ||

| + | - Facebook: https://www.facebook.com/lexfridman | ||

| + | - Instagram: https://www.instagram.com/lexfridman | ||

| + | - Medium: https://medium.com/@lexfridman | ||

| + | - Reddit: https://reddit.com/r/lexfridman | ||

| + | - Support on Patreon: https://www.patreon.com/lexfridman | ||

| + | |||

| + | |} | ||

| + | |}<!-- B --> | ||

| + | |||

| + | |||

| + | |||

= Generative Pre-trained Transformer (GPT-3) = | = Generative Pre-trained Transformer (GPT-3) = | ||

Revision as of 09:01, 28 January 2023

YouTube search... ...Google search

- Case Studies

- Text Transfer Learning

- Natural Language Generation (NLG)

- Natural Language Tools & Services

- Attention

- Generated Image

- OpenAI Blog | OpenAI

- Language Models are Unsupervised Multitask Learners | Alec Radford, Jeffrey Wu, Rewon Child, David Luan, Dario Amodei, and Ilya Sutskever

- Neural Monkey | Jindřich Libovický, Jindřich Helcl, Tomáš Musil ... Byte Pair Encoding (BPE) enables open-vocabulary NMT model translation by encoding rare and unknown words as sequences of subword units.

- Attention Mechanism/Transformer Model

- Bidirectional Encoder Representations from Transformers (BERT)

- ELMo

- SynthPub

- Language Models are Unsupervised Multitask Learners - GitHub

- Microsoft Releases DialogGPT AI Conversation Model | Anthony Alford - InfoQ - trained on over 147M dialogs

- minGPT | Andrej Karpathy - GitHub

- SambaNova Systems ... Dataflow-as-a-Service GPT

- Facebook-owner Meta opens access to AI large language model | Elizabeth Culliford - Reuters ... Facebook 175-billion-parameter language model - Open Pretrained Transformer (OPT-175B)
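The Neural Monkey entry in the list above describes Byte Pair Encoding (BPE) as encoding rare and unknown words as sequences of subword units. A minimal sketch of that idea follows, assuming a toy word list and a plain most-frequent-pair merge rule; the function names (`learn_bpe`, `merge_pair`, `encode`), the `</w>` end-of-word marker, and the tie-breaking rule are illustrative choices, not taken from Neural Monkey or any GPT tokenizer.

```python
from collections import Counter


def merge_pair(symbols, pair):
    """Replace every adjacent occurrence of `pair` in `symbols` with one merged symbol."""
    out, i = [], 0
    while i < len(symbols):
        if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
            out.append(symbols[i] + symbols[i + 1])
            i += 2
        else:
            out.append(symbols[i])
            i += 1
    return tuple(out)


def learn_bpe(words, num_merges):
    """Learn up to `num_merges` merge rules from a list of words (toy version)."""
    # Each word starts as single characters plus a hypothetical end-of-word marker.
    vocab = Counter(tuple(w) + ("</w>",) for w in words)
    merges = []
    for _ in range(num_merges):
        # Count adjacent symbol pairs, weighted by word frequency.
        pairs = Counter()
        for symbols, freq in vocab.items():
            for a, b in zip(symbols, symbols[1:]):
                pairs[(a, b)] += freq
        if not pairs:
            break
        # Most frequent pair wins; ties are broken by the pair itself (an assumption).
        best = max(pairs, key=lambda p: (pairs[p], p))
        merges.append(best)
        new_vocab = Counter()
        for symbols, freq in vocab.items():
            new_vocab[merge_pair(symbols, best)] += freq
        vocab = new_vocab
    return merges


def encode(word, merges):
    """Split a word (seen or unseen) into subword units by replaying the merges in order."""
    symbols = tuple(word) + ("</w>",)
    for pair in merges:
        symbols = merge_pair(symbols, pair)
    return list(symbols)


corpus = ["low"] * 5 + ["lower"] * 2 + ["newest"] * 6 + ["widest"] * 3
merges = learn_bpe(corpus, num_merges=10)
print(encode("newest", merges))  # a frequent word collapses into a few units
print(encode("lowest", merges))  # an unseen word is still covered by subword units
```

Because every word can fall back to single characters, an unseen word such as "lowest" is still representable, which is the open-vocabulary property the entry refers to.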


ChatGPT


- ChatGPT | OpenAI

- Cybersecurity

- OpenAI invites everyone to test ChatGPT, a new AI-powered chatbot, with amusing results | Benj Edwards - Ars Technica ... ChatGPT aims to produce accurate and harmless talk, but it's a work in progress.

- How to use ChatGPT for language generation in Python in 2023? | John Williams - Its ChatGPT ... Examples ... How to earn money

ChatGPT generates human-like text, which lets it perform a wide range of natural language processing (NLP) tasks: powering chatbots, automated writing, language translation, text summarization, and generating computer programs. OpenAI states that ChatGPT is a significant iterative step toward providing a safe AI model for everyone. ChatGPT interacts in a conversational way. The dialogue format makes it possible for ChatGPT to answer follow-up questions, admit its mistakes, challenge incorrect premises, and reject inappropriate requests. ChatGPT is a sibling model to InstructGPT, which is trained to follow an instruction in a prompt and provide a detailed response.


Let's build GPT: from scratch, in code, spelled out | Andrej Karpathy
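The video's chapter list and corrections name three ingredients of its self-attention step: weighted aggregation over context, the rule that tokens from the future cannot communicate, and scaling by sqrt(head_size). A minimal single-head sketch of those three ideas follows, in plain Python instead of the PyTorch used in the video. The function names are hypothetical, and the queries, keys, and values are passed in directly, whereas the video computes them with learned linear projections.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def causal_self_attention(queries, keys, values):
    """Single-head scaled dot-product attention with a causal mask.

    Each argument is a list of T vectors. Position t attends only to
    positions 0..t, so no information flows in from the future.
    """
    T = len(queries)
    head_size = len(queries[0])
    scale = 1.0 / math.sqrt(head_size)  # normalize by head_size, per the video's correction
    outputs, all_weights = [], []
    for t in range(T):
        # Scaled dot products against the current and earlier positions only.
        scores = [
            scale * sum(q * k for q, k in zip(queries[t], keys[s]))
            for s in range(t + 1)
        ]
        weights = softmax(scores)
        # Weighted aggregation of the visible value vectors.
        out = [
            sum(w * values[s][d] for s, w in enumerate(weights))
            for d in range(len(values[0]))
        ]
        outputs.append(out)
        # Pad with zeros so each row shows the masked (future) positions explicitly.
        all_weights.append(weights + [0.0] * (T - t - 1))
    return outputs, all_weights


x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
out, att = causal_self_attention(x, x, x)
for row in att:
    print([round(w, 3) for w in row])
```

The printed weight matrix is lower triangular: the first token can attend only to itself, and each later row spreads its weight over the tokens seen so far.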

Generative Pre-trained Transformer (GPT-3)

Try...

GPT Impact to Development

Generative Pre-trained Transformer (GPT-2)

Coding Train Late Night 2

r/SubSimulator ... Subreddit populated entirely by AI personifications of other subreddits; all posts and comments are generated automatically, resulting in coherent and realistic simulated content.

GetBadNews
