Neural Architecture

Revision as of 21:35, 6 March 2022


- Hierarchical Temporal Memory (HTM)

- MIT’s AI can train neural networks faster than ever before | Christine Fisher - Engadget

- Other codeless options, Code Generators, Drag n' Drop

- Automated Learning

- Auto Keras

- Evolutionary Computation / Genetic Algorithms

- Hyperparameters Optimization

- Model Search

Neural Architecture Search (NAS)


- Literature on Neural Architecture Search | AutoML.org

- Awesome NAS; a curated list

- Neural Architecture Search (NAS) with Reinforcement Learning | Wikipedia

- Neural Architecture Search (NAS) with Evolution | Wikipedia

- Multi-objective Neural architecture search | Wikipedia

- Neural Architecture Search for Deep Face Recognition | Ning Zhu

An alternative to manual design is “neural architecture search” (NAS), a family of machine learning techniques for automatically discovering well-performing neural network architectures for a given problem. Neural architecture search is an active area of research and a promising direction for future applications of deep learning.

- Need to find the best AI model for your problem? Try neural architecture search | Ben Dickson - TDW

NAS algorithms are efficient problem solvers.

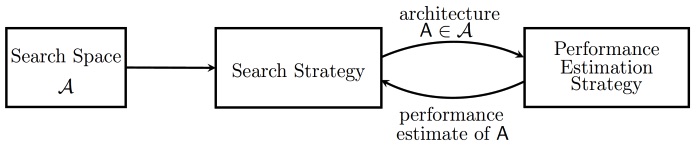

Various approaches to Neural Architecture Search (NAS) have designed networks that are on par with, or even outperform, hand-designed architectures. Methods for NAS can be categorized according to the search space, search strategy and performance estimation strategy they use:

- The search space defines which types of artificial neural network (ANN) can, in principle, be designed and optimized.

- The search strategy defines how the search space is explored to find well-performing ANNs.

- Obtaining the performance of an ANN is costly because the ANN must be trained first. Performance estimation strategies are therefore used to obtain cheaper estimates of a model's performance. Neural Architecture Search | Wikipedia
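The three components above can be kept as separate, interchangeable pieces of code. The following is a minimal Python sketch under stated assumptions. The search space is hypothetical: the depth, width and activation of a small fully connected network. The names `SEARCH_SPACE`, `proxy_score`, `random_search` and `regularized_evolution` are illustrative, not from any NAS library. `proxy_score` is a synthetic stand-in for performance estimation, used so the sketch runs without a training framework. In a real system it would do something like train the candidate briefly on a data subset and return a validation metric. Two search strategies are shown. One is plain random search. The other is a simplified form of regularized ("aging") evolution, in which tournament selection picks a parent and the oldest individual is retired each cycle.

```python
import random
from collections import deque
from dataclasses import dataclass, replace

# Search space (hypothetical): every architecture this sketch can express.
# Real NAS search spaces (cells, skip connections, operation types) are far larger.
SEARCH_SPACE = {
    "num_layers": [1, 2, 3, 4],
    "units": [16, 32, 64, 128],
    "activation": ["relu", "tanh"],
}


@dataclass(frozen=True)
class Architecture:
    num_layers: int
    units: int
    activation: str


def sample_architecture(rng):
    """Draw one architecture uniformly at random from the search space."""
    return Architecture(
        num_layers=rng.choice(SEARCH_SPACE["num_layers"]),
        units=rng.choice(SEARCH_SPACE["units"]),
        activation=rng.choice(SEARCH_SPACE["activation"]),
    )


def count_params(arch, n_inputs=10, n_outputs=1):
    """Weights plus biases of a fully connected network with this architecture."""
    sizes = [n_inputs] + [arch.units] * arch.num_layers + [n_outputs]
    return sum(a * b + b for a, b in zip(sizes, sizes[1:]))


# Performance estimation (HYPOTHETICAL stand-in). A real system would train the
# candidate cheaply (few epochs, data subset, ...) and return a validation metric;
# this synthetic score only keeps the sketch runnable.
def proxy_score(arch):
    capacity = arch.num_layers * arch.units
    fit = 1.0 - abs(capacity - 128) / 512  # pretend ~128 hidden units in total is ideal
    bonus = 0.05 if arch.activation == "relu" else 0.0
    return fit + bonus - 1e-6 * count_params(arch)  # mild penalty on model size


# Search strategy A: plain random search.
def random_search(n_trials, seed=0):
    rng = random.Random(seed)
    best_arch, best_score = None, float("-inf")
    for _ in range(n_trials):
        arch = sample_architecture(rng)
        score = proxy_score(arch)
        if score > best_score:
            best_arch, best_score = arch, score
    return best_arch, best_score


def mutate(arch, rng):
    """Return a copy of `arch` with exactly one attribute changed."""
    field = rng.choice(list(SEARCH_SPACE))
    options = [v for v in SEARCH_SPACE[field] if v != getattr(arch, field)]
    return replace(arch, **{field: rng.choice(options)})


# Search strategy B: simplified regularized ("aging") evolution.
def regularized_evolution(cycles, population_size, sample_size, seed=0):
    rng = random.Random(seed)
    population = deque()  # oldest individual on the left
    history = []          # every (architecture, score) pair ever evaluated
    while len(population) < population_size:
        arch = sample_architecture(rng)
        entry = (arch, proxy_score(arch))
        population.append(entry)
        history.append(entry)
    for _ in range(cycles):
        # Tournament selection: the best of a random sample becomes the parent.
        candidates = rng.sample(list(population), sample_size)
        parent = max(candidates, key=lambda e: e[1])[0]
        child = mutate(parent, rng)
        entry = (child, proxy_score(child))
        population.append(entry)
        history.append(entry)
        population.popleft()  # retire the oldest individual, not the worst
    return max(history, key=lambda e: e[1])


if __name__ == "__main__":
    print(random_search(n_trials=20, seed=0))
    print(regularized_evolution(cycles=50, population_size=10, sample_size=3, seed=0))
```

Because the three pieces are separate, swapping random search for the evolutionary strategy leaves the search space and the estimator untouched. This toy space has only 4 × 4 × 2 = 32 candidates, so it could simply be enumerated. Real NAS search spaces are far too large for that, which is why the search strategy and cheap performance estimates matter.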

Differentiable Neural Computer (DNC)