Difference between revisions of "Decision Forest Regression"


[http://www.youtube.com/results?search_query=Decision+Forest+Regression YouTube search...]

[http://www.google.com/search?q=Decision+Forest+Regression+machine+learning+ML+artificial+intelligence ...Google search]

* [[AI Solver]]

Revision as of 14:31, 2 February 2019


Decision trees are non-parametric models that perform a sequence of simple tests for each instance, traversing a binary tree data structure until a leaf node (decision) is reached. Decision trees have these advantages:

- They are efficient in both computation and memory usage during training and prediction.

- They can represent non-linear decision boundaries.

- They perform integrated feature selection and classification and are resilient in the presence of noisy features.
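The traversal described above can be sketched in a few lines of Python. This is only an illustration: the `Node` class, its field names, the `<=` test, and the toy tree are all hypothetical and are not taken from any particular library.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass
class Node:
    """One node of a binary decision tree (hypothetical layout for illustration)."""
    feature: int = 0                  # index of the feature tested at this node
    threshold: float = 0.0            # go left if x[feature] <= threshold
    left: Optional["Node"] = None
    right: Optional["Node"] = None
    value: Optional[float] = None     # set only on leaf nodes (the decision)


def predict(node: Node, x: Sequence[float]) -> float:
    """Apply one simple test per level until a leaf is reached."""
    while node.value is None:
        node = node.left if x[node.feature] <= node.threshold else node.right
    return node.value


# A toy tree: test feature 0 first, then feature 1 on the right branch.
tree = Node(
    feature=0, threshold=2.0,
    left=Node(value=1.5),
    right=Node(feature=1, threshold=0.5,
               left=Node(value=3.0),
               right=Node(value=7.0)),
)

print(predict(tree, [1.0, 9.0]))
print(predict(tree, [3.0, 0.9]))
```

Each prediction touches at most one node per level, so its cost grows with the depth of the tree rather than with the total number of nodes.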

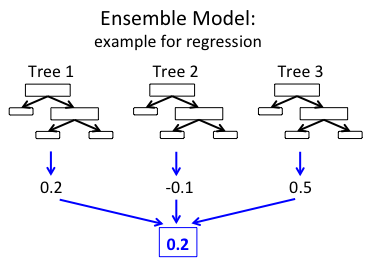

This regression model consists of an ensemble of decision trees. Each tree in a regression decision forest outputs a Gaussian distribution as its prediction. The forest then aggregates over the ensemble to find the single Gaussian distribution closest to the combined distribution of all trees in the model.
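One way to picture the aggregation step is moment matching. The text does not say how "closest" is measured or how trees are weighted, so the sketch below assumes equal weights and picks the Gaussian whose mean and variance match those of the mixture of per-tree Gaussians. The function name `aggregate_gaussians` and the sample numbers are hypothetical.

```python
from typing import Sequence, Tuple


def aggregate_gaussians(preds: Sequence[Tuple[float, float]]) -> Tuple[float, float]:
    """Collapse per-tree (mean, variance) pairs into one Gaussian.

    Assumption: trees are weighted equally and "closest" means matching the
    first two moments of the equal-weight mixture.
    """
    n = len(preds)
    if n == 0:
        raise ValueError("need at least one tree prediction")
    mean = sum(m for m, _ in preds) / n
    # Second moment of the mixture: average of (variance + mean^2) per tree.
    second_moment = sum(v + m * m for m, v in preds) / n
    variance = second_moment - mean * mean
    return mean, variance


# Hypothetical predictions from three trees as (mean, variance) pairs.
tree_predictions = [(2.0, 0.5), (3.0, 0.5), (4.0, 0.5)]
mean, variance = aggregate_gaussians(tree_predictions)
print(mean, variance)
```

Under this assumption the combined variance exceeds the average per-tree variance whenever the trees disagree on the mean, so disagreement between trees shows up as extra predictive uncertainty.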