Difference between revisions of "Bayes"

Revision as of 17:35, 11 October 2020

- AI Solver

- Capabilities

- Statistics for Intelligence

- Ensemble Learning

- Feature Engineering and Selection: A Practical Approach for Predictive Models - 12.1 Naive Bayes Models | Max Kuhn and Kjell Johnson

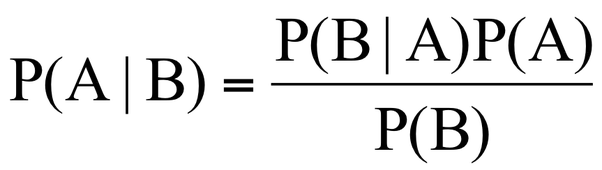

Naive Bayes is based on Bayes' Theorem in probability. It additionally assumes that the features are conditionally independent of each other given the class; this is a modeling assumption of the classifier rather than a requirement of Bayes' Theorem itself, and the method is often applied even when the assumption holds only approximately. For example, to predict a flower type from its petal length and width, Naive Bayes treats the two measurements as independent within each flower type. 10 Machine Learning Algorithms You need to Know | Sidath Asir @ Medium

Bayes' Theorem

Bayes' Theorem describes the probability of an event, based on prior knowledge of conditions that might be related to the event. Bayes' Theorem | Wikipedia
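As a rough illustration, the theorem can be written as P(A | B) = P(B | A) P(A) / P(B). The sketch below applies it to made-up numbers; the function name `posterior` and all three input probabilities are assumptions chosen for the example, not values from any source cited here.

```python
def posterior(prior: float, likelihood: float, false_positive_rate: float) -> float:
    """Return P(A | B) given P(A), P(B | A) and P(B | not A)."""
    # Total probability of observing B, whether or not A holds.
    evidence = likelihood * prior + false_positive_rate * (1.0 - prior)
    # Bayes' Theorem: P(A | B) = P(B | A) * P(A) / P(B)
    return likelihood * prior / evidence


# Hypothetical inputs: A has a 1% base rate, B is observed 90% of the
# time when A holds and 5% of the time when it does not.
p = posterior(prior=0.01, likelihood=0.90, false_positive_rate=0.05)
print(f"P(A | B) = {p:.3f}")  # prints "P(A | B) = 0.154"
```

Even with a 90% detection rate, the low prior keeps the posterior near 15%, which is the "prior knowledge" effect the definition refers to.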

Bayesian Statistics

Bayesian Hypothesis Testing

Naive Bayes

- How to Develop a Naive Bayes Classifier from Scratch in Python | Jason Brownlee - Machine Learning Mastery

- A Beginner's Guide to Bayes' Theorem, Naive Bayes Classifiers and Bayesian Networks | Chris Nicholson - A.I. Wiki pathmind

A Naive Bayes classifier assumes that the presence of a particular feature in a class is unrelated to the presence of any other feature. For example, a fruit may be considered to be an apple if it is red, round, and about 3 inches in diameter. Even if these features depend on each other or upon the existence of the other features, the classifier treats each of these properties as contributing independently to the probability that the fruit is an apple, which is why it is known as ‘Naive’.
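The independence assumption can be sketched in a few lines of Python. This is a minimal count-based classifier with Laplace smoothing, not the method of any article linked above; the function names `train` and `predict`, the smoothing parameter `alpha`, and the tiny fruit dataset are all hypothetical.

```python
import math
from collections import Counter, defaultdict


def train(rows, labels, alpha=1.0):
    """Collect the counts needed for categorical Naive Bayes."""
    class_counts = Counter(labels)
    # feature_counts[label][feature_index][value] -> number of occurrences
    feature_counts = defaultdict(lambda: defaultdict(Counter))
    values = defaultdict(set)  # distinct values seen for each feature index
    for row, label in zip(rows, labels):
        for i, value in enumerate(row):
            feature_counts[label][i][value] += 1
            values[i].add(value)
    return class_counts, feature_counts, values, alpha


def predict(model, row):
    """Return the class with the highest (log) posterior score."""
    class_counts, feature_counts, values, alpha = model
    total = sum(class_counts.values())
    scores = {}
    for label, n in class_counts.items():
        # log P(class) plus one independent log P(feature_i | class) term
        # per feature: this sum is the "naive" independence assumption.
        score = math.log(n / total)
        for i, value in enumerate(row):
            count = feature_counts[label][i][value]
            score += math.log((count + alpha) / (n + alpha * len(values[i])))
        scores[label] = score
    return max(scores, key=scores.get)


# Hypothetical training data: (color, shape, size) -> fruit
rows = [
    ("red", "round", "medium"),
    ("red", "round", "medium"),
    ("green", "round", "medium"),
    ("yellow", "long", "medium"),
    ("yellow", "long", "large"),
    ("green", "long", "medium"),
]
labels = ["apple", "apple", "apple", "banana", "banana", "banana"]

model = train(rows, labels)
print(predict(model, ("red", "round", "medium")))
```

Each feature contributes its own factor to the score regardless of the others, so a red, round fruit is scored as an apple without the model ever representing how color and shape relate.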

Two-Class Bayes Point Machine

This algorithm efficiently approximates the theoretically optimal Bayesian average of linear classifiers (in terms of generalization performance) by choosing one "average" classifier, the Bayes Point. Because the Bayes Point Machine is a Bayesian classification model, it is not prone to overfitting to the training data. - Microsoft

Bayesian Linear Regression

- Regression Analysis

- Bayesian Linear Regression | Microsoft

- 10 types of regressions. Which one to use? | Vincent Granville

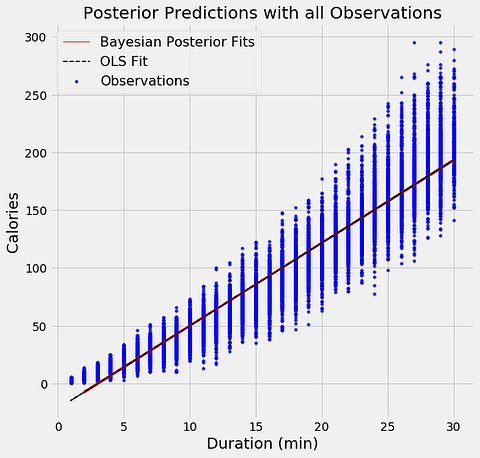

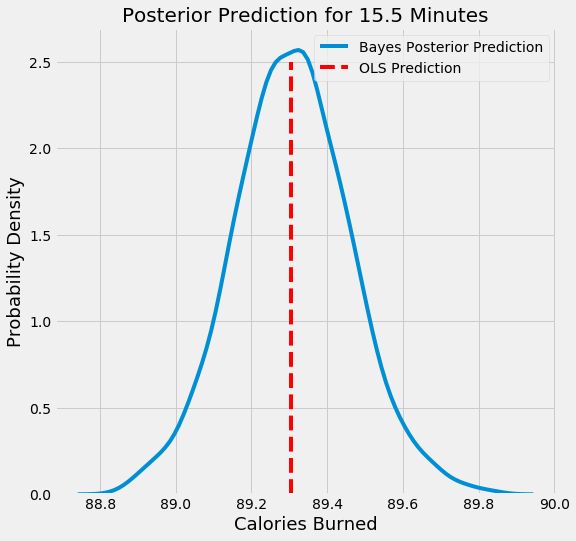

The aim of Bayesian Linear Regression is not to find the single “best” value of the model parameters, but rather to determine the posterior distribution for the model parameters. Not only is the response generated from a probability distribution, but the model parameters are assumed to come from a distribution as well. Introduction to Bayesian Linear Regression | Towards Data Science

In the Bayesian viewpoint, we formulate linear regression using probability distributions rather than point estimates. The response, y, is not estimated as a single value, but is assumed to be drawn from a probability distribution. The output, y, is generated from a normal (Gaussian) distribution characterized by a mean and variance. The mean for linear regression is the transpose of the weight matrix multiplied by the predictor matrix. The variance is the square of the standard deviation σ (multiplied by the identity matrix because this is a multi-dimensional formulation of the model).
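Written out, the description above corresponds to the following model. The symbols β (weights) and X (predictors) are notation assumed here for illustration; σ is the standard deviation named in the text and I is the identity matrix. The second line is Bayes' Theorem applied to the weights, giving the posterior distribution that Bayesian Linear Regression aims to determine.

```latex
y \sim \mathcal{N}\left(\beta^{T} X,\; \sigma^{2} I\right)

P(\beta \mid y, X) = \frac{P(y \mid \beta, X)\, P(\beta \mid X)}{P(y \mid X)}
```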

Bayesian methods have a highly desirable quality: they avoid overfitting. They do this by making some assumptions beforehand about the likely distribution of the answer. Another byproduct of this approach is that they have very few parameters. Machine Learning has Bayesian algorithms for both classification (Two-class Bayes' point machine) and regression (Bayesian linear regression). Note that these assume that the data can be split or fit with a straight line. - Dinesh Chandrasekar
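The effect of assumptions made beforehand can be sketched for a straight-line fit. The example below is a minimal illustration, not the implementation used by any product mentioned above: it assumes a zero-mean Gaussian prior on the intercept and slope, Gaussian noise with known variance, and the standard closed-form posterior for that case. The function name `posterior_line`, the defaults `noise_var` and `prior_var`, and the data points are hypothetical.

```python
def posterior_line(xs, ys, noise_var=1.0, prior_var=10.0):
    """Posterior mean and covariance of (intercept, slope) for y = b0 + b1*x + noise.

    Assumes an independent zero-mean Gaussian prior with variance prior_var
    on each weight and Gaussian noise with known variance noise_var.
    """
    n = len(xs)
    sx = sum(xs)
    sxx = sum(x * x for x in xs)
    sy = sum(ys)
    sxy = sum(x * y for x, y in zip(xs, ys))

    # Posterior precision = X^T X / noise_var + I / prior_var (a 2x2 matrix).
    a = n / noise_var + 1.0 / prior_var
    b = sx / noise_var
    d = sxx / noise_var + 1.0 / prior_var
    det = a * d - b * b

    # Invert the 2x2 precision matrix to obtain the posterior covariance.
    cov = [[d / det, -b / det], [-b / det, a / det]]

    # Posterior mean = covariance times (X^T y / noise_var).
    r0, r1 = sy / noise_var, sxy / noise_var
    mean = (cov[0][0] * r0 + cov[0][1] * r1,
            cov[1][0] * r0 + cov[1][1] * r1)
    return mean, cov


# Hypothetical data lying roughly on the line y = 1 + 2x.
xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.1, 2.9, 5.2, 6.8]

wide_mean, wide_cov = posterior_line(xs, ys, prior_var=1e9)     # nearly flat prior
tight_mean, tight_cov = posterior_line(xs, ys, prior_var=0.01)  # strong prior at zero
print("weak prior:  ", wide_mean)
print("strong prior:", tight_mean)
```

With a nearly flat prior the posterior mean approaches the ordinary least-squares fit, while a tight prior pulls both weights toward zero. The result is a distribution (mean and covariance) over the parameters rather than a single best value.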

Bayesian Deep Learning (BDL)

BDL provides a deep learning framework which can also model uncertainty. BDL can achieve state-of-the-art results, while also understanding uncertainty. Deep Learning Is Not Good Enough, We Need Bayesian Deep Learning for Safe AI | Alex Kendall

Bayesian Parameter Estimation