Difference between revisions of "Decision Forest Regression"



Revision as of 22:39, 31 May 2018

Decision trees are non-parametric models that perform a sequence of simple tests for each instance, traversing a binary tree data structure until a leaf node (decision) is reached. Decision trees have these advantages:

- They are efficient in both computation and memory usage during training and prediction.

- They can represent non-linear decision boundaries.

- They perform integrated feature selection and classification and are resilient in the presence of noisy features.
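The traversal described above can be sketched in a few lines of Python. This is only an illustration: the `Node` class, its field names, the `<=` threshold test, and the example tree are assumptions made for this sketch, not the data structure of any particular library.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass
class Node:
    """Hypothetical binary tree node: internal nodes test one feature, leaves hold a value."""
    feature: int = 0                  # index of the feature tested at this node
    threshold: float = 0.0            # go left when x[feature] <= threshold
    left: Optional["Node"] = None
    right: Optional["Node"] = None
    value: Optional[float] = None     # set only on leaf nodes


def predict(node: Node, x: Sequence[float]) -> float:
    """Apply one simple test per level until a leaf (decision) is reached."""
    while node.value is None:
        node = node.left if x[node.feature] <= node.threshold else node.right
    return node.value


# A small hand-built tree (illustrative values only).
tree = Node(
    feature=0,
    threshold=5.0,
    left=Node(value=1.0),
    right=Node(feature=1, threshold=2.0, left=Node(value=2.0), right=Node(value=3.0)),
)
```

Each prediction costs one comparison per level of the tree, which is where the efficiency at prediction time comes from.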

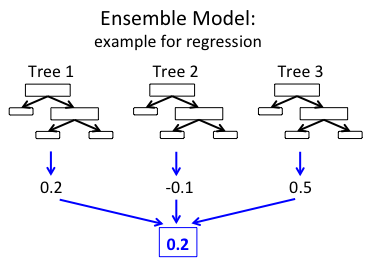

This regression model consists of an ensemble of decision trees. Each tree in a regression decision forest outputs a Gaussian distribution as a prediction. An aggregation is performed over the ensemble of trees to find a Gaussian distribution closest to the combined distribution for all trees in the model.
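One way to read the aggregation step is as moment matching: if the combined distribution is taken to be an equally weighted mixture of the per-tree Gaussians, the single Gaussian closest to it in the Kullback-Leibler sense is the one with the same mean and variance as the mixture. The sketch below follows that reading. The equal weights, the choice of closeness measure, and the function name `aggregate_gaussians` are assumptions for illustration; the source does not say how the model actually performs the aggregation.

```python
from typing import Sequence, Tuple


def aggregate_gaussians(
    means: Sequence[float], variances: Sequence[float]
) -> Tuple[float, float]:
    """Moment-match one Gaussian to an equally weighted mixture of per-tree Gaussians.

    Assumption: every tree gets the same weight in the mixture.
    Returns the (mean, variance) of the matched Gaussian.
    """
    n = len(means)
    if n == 0 or n != len(variances):
        raise ValueError("means and variances must be non-empty and of equal length")
    # Mixture mean: the average of the tree means.
    mean = sum(means) / n
    # Mixture second moment: the average of (variance + mean^2) over the trees.
    second_moment = sum(v + m * m for m, v in zip(means, variances)) / n
    # Mixture variance: second moment minus squared mean.
    return mean, second_moment - mean * mean


# Two trees predicting N(1, 1) and N(3, 1).
mean, variance = aggregate_gaussians([1.0, 3.0], [1.0, 1.0])
```

Under this reading, the aggregated variance reflects both the uncertainty within each tree and the disagreement between trees: in the usage example the two trees each report variance 1, and the combined variance is larger because their means differ.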