Difference between revisions of "Generative Pre-trained Transformer (GPT)"

m (BPeat moved page Generative Pre-trained Transformer to Generative Pre-trained Transformer (GPT) without leaving a redirect)


Revision as of 11:29, 19 July 2020


- Case Studies

- Text Transfer Learning

- Natural Language Generation (NLG)

- Generated Image

- OpenAI Blog | OpenAI

- Language Models are Unsupervised Multitask Learners | Alec Radford, Jeffrey Wu, Rewon Child, David Luan, Dario Amodei, and Ilya Sutskever

- (117M parameter) version of GPT-2 | GitHub

- GPT-2: It learned on the Internet | Janelle Shane

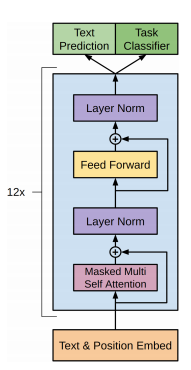

- Attention Mechanism/Transformer Model

- Neural Monkey | Jindřich Libovický, Jindřich Helcl, Tomáš Musil - Byte Pair Encoding (BPE) enables open-vocabulary neural machine translation (NMT) by encoding rare and unknown words as sequences of subword units.

- Too powerful NLP model (GPT-2): What is Generative Pre-Training | Edward Ma

- Bidirectional Encoder Representations from Transformers (BERT)

- ELMo

- Language Models are Unsupervised Multitask Learners - GitHub

- OpenAI Creates Platform for Generating Fake News. Wonderful | Nick Kolakowski - Dice

- GPT-2: A nascent transfer learning method that could eliminate supervised learning in some NLP tasks | Ajit Rajasekharan - Medium

- How to Get Started with OpenAI's GPT-2 for Text Generation | Amal Nair - Analytics India Magazine

- Microsoft Releases DialogGPT AI Conversation Model | Anthony Alford - InfoQ - trained on over 147M dialogs

"...a text-generating bot based on a model with 1.5 billion parameters. ...Ultimately, OpenAI's researchers kept the full thing to themselves, only releasing a pared-down 117 million parameter version of the model (which we have dubbed "GPT-2 Junior") as a safer demonstration of what the full GPT-2 model could do." - Twenty minutes into the future with OpenAI’s Deep Fake Text AI | Sean Gallagher

- Try GPT-2...Talk to Transformer - completes your text. | Adam D King, Hugging Face and OpenAI
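The Byte Pair Encoding idea mentioned in the Neural Monkey entry above (rare and unknown words encoded as sequences of subword units) can be illustrated with a minimal sketch. This is a toy character-level version, not the tokenizer shipped with GPT-2 or Neural Monkey; GPT-2 itself uses a byte-level variant. The function names `learn_bpe`, `merge_pair`, and `encode`, the `</w>` end-of-word marker, and the tiny word-count table are all assumptions made for illustration.

```python
from collections import Counter


def merge_pair(symbols, pair):
    """Replace every adjacent occurrence of `pair` in `symbols` with one merged symbol."""
    merged = []
    i = 0
    while i < len(symbols):
        if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
            merged.append(symbols[i] + symbols[i + 1])
            i += 2
        else:
            merged.append(symbols[i])
            i += 1
    return tuple(merged)


def learn_bpe(word_counts, num_merges):
    """Learn up to `num_merges` merge rules from a {word: frequency} table."""
    # Start from single characters plus an end-of-word marker (hypothetical choice).
    vocab = {tuple(word) + ("</w>",): count for word, count in word_counts.items()}
    merges = []
    for _ in range(num_merges):
        # Count how often each adjacent symbol pair occurs, weighted by word frequency.
        pair_counts = Counter()
        for symbols, count in vocab.items():
            for pair in zip(symbols, symbols[1:]):
                pair_counts[pair] += count
        if not pair_counts:
            break  # every word is already a single symbol
        best = max(pair_counts, key=pair_counts.get)
        merges.append(best)
        vocab = {merge_pair(symbols, best): count for symbols, count in vocab.items()}
    return merges


def encode(word, merges):
    """Split a word into subword units by replaying the learned merges in order."""
    symbols = tuple(word) + ("</w>",)
    for pair in merges:
        symbols = merge_pair(symbols, pair)
    return list(symbols)


if __name__ == "__main__":
    # Made-up toy corpus statistics, for illustration only.
    counts = {"low": 5, "lower": 2, "newest": 6, "widest": 3}
    rules = learn_bpe(counts, num_merges=10)
    # "lowest" never appears in the table, yet it still splits into known subwords.
    print(encode("lowest", rules))
```

Because every word starts as individual characters, an unseen word can always be represented; the learned merges only decide how coarse the pieces are.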


GPT-3

GPT-2

r/SubSimulator

Subreddit populated entirely by AI personifications of other subreddits -- all posts and comments are generated automatically using:

results in coherent and realistic simulated content.

GetBadNews

- Get Bad News game - Can you beat my score? Play the fake news game! Drop all pretense of ethics and choose the path that builds your persona as an unscrupulous media magnate. Your task is to get as many followers as you can while