Difference between revisions of "Ridge Regression"

Revision as of 01:22, 13 July 2019

- AI Solver

- Capabilities

- Linear Regression

- Regularization

- Logistic Regression (LR)

- Statistics for Intelligence

- Overfitting Challenge

- Boosting

- 7 Types of Regression Techniques you should know! | Sunil Ray

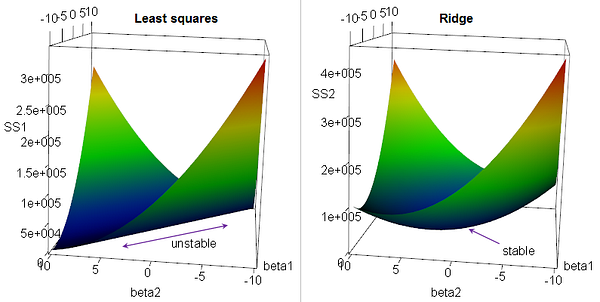

Ridge Regression, or Tikhonov Regularization, is one of the most commonly used methods for approximating an answer to an equation with no unique solution. This type of problem is very common in machine learning tasks, where the "best" solution must be chosen using limited data. Simply put, regularization introduces additional information into a problem in order to choose the "best" solution for it. Ridge regression is used for analyzing multiple regression data that suffer from multicollinearity. Multicollinearity, or collinearity, is the existence of near-linear relationships among the independent variables. When multicollinearity occurs, least squares estimates are still unbiased, but their variances are large, so any single estimate may be far from the true value. By adding a degree of bias to the regression estimates, ridge regression reduces their standard errors.
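The variance reduction described above can be illustrated with a small simulation. This is a minimal sketch, not a reference implementation: it assumes the standard closed-form ridge estimate, which solves (XᵀX + λI)β = Xᵀy, fits no intercept, and uses synthetic data. The helper names `ridge_fit` and `simulate`, the penalty `lam=1.0`, the noise levels, and the sample sizes are all illustrative choices that do not come from the article.

```python
import numpy as np


def ridge_fit(X, y, lam):
    """Closed-form ridge estimate: solve (X^T X + lam * I) beta = X^T y.

    With lam = 0 this reduces to ordinary least squares.
    """
    n_features = X.shape[1]
    A = X.T @ X + lam * np.eye(n_features)
    return np.linalg.solve(A, X.T @ y)


def simulate(lam, n_trials=200, n_samples=30, seed=0):
    """Refit on many synthetic data sets with two nearly collinear predictors."""
    rng = np.random.default_rng(seed)
    true_beta = np.array([1.0, 1.0])  # assumed "true" coefficients
    estimates = []
    for _ in range(n_trials):
        x1 = rng.normal(size=n_samples)
        # x2 is almost a copy of x1, which creates multicollinearity
        x2 = x1 + 0.01 * rng.normal(size=n_samples)
        X = np.column_stack([x1, x2])
        y = X @ true_beta + rng.normal(size=n_samples)
        estimates.append(ridge_fit(X, y, lam))
    return np.array(estimates)


ols = simulate(lam=0.0)    # ordinary least squares
ridge = simulate(lam=1.0)  # ridge with an illustrative penalty

# Spread of each coefficient estimate across the repeated fits
print("OLS   std of estimates:", ols.std(axis=0))
print("ridge std of estimates:", ridge.std(axis=0))
```

Because both runs use the same seed, they see identical data sets, so the difference in spread comes only from the penalty. In practice the penalty strength is usually chosen by cross-validation rather than fixed in advance.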