Difference between revisions of "History of Artificial Intelligence (AI)"


Line 2:

  |title=PRIMO.ai

  |titlemode=append

− |keywords=artificial, intelligence, machine, learning, models, algorithms, data, singularity, moonshot, TensorFlow, Google, Nvidia, Microsoft, Azure, Amazon, AWS, Facebook

+ |keywords=artificial, intelligence, machine, learning, models, algorithms, data, singularity, moonshot, TensorFlow, Google, Nvidia, Microsoft, Azure, Amazon, AWS, Meta, Facebook

  |description=Helpful resources for your journey with artificial intelligence; videos, articles, techniques, courses, profiles, and tools

  }}

Line 73:

  <youtube>gG5NCkMerHU</youtube>

  <b>The Epistemology of Deep Learning - [[Creatives#Yann LeCun|Yann LeCun]]

− </b><br>Deep Learning: Alchemy or Science? Topic: The Epistemology of Deep Learning Speaker: [[Creatives#Yann LeCun|Yann LeCun]] Affiliation: [[Facebook]] AI Research/New York University Date: February 22, 2019

+ </b><br>Deep Learning: Alchemy or Science? Topic: The Epistemology of Deep Learning Speaker: [[Creatives#Yann LeCun|Yann LeCun]] Affiliation: [[Meta|Facebook]] AI Research/New York University Date: February 22, 2019

  |}

  |}<!-- B -->

Revision as of 22:27, 8 February 2023


- Creatives

- Gaming

- History of Artificial Intelligence ...Timeline ...Timeline of machine learning | Wikipedia

- A (Very) Brief History of Artificial Intelligence | Bruce G. Buchanan

- How China tried and failed to win the AI race: The inside story | Alison Rayome

- Bio-inspired Computing

- Feature Exploration/Learning

- Using AI to reveal historical mysteries

In AI, there are four generations.

- The first generation is the Good Old-fashioned AI, meaning that you handcraft everything and you learn nothing.

- The second generation is shallow learning — you handcraft the features and learn a classifier.

- The third generation, which a lot of people have enjoyed so far, is deep learning. Basically you handcraft the algorithm, but you learn the features and you learn the predictions, end to end. More learning than shallow learning, right?

- And the fourth generation, this is something new, what I work on, I call it “learning-to-learn.”

Source: Google Brain Research Scientist Quoc Le on AutoML and More


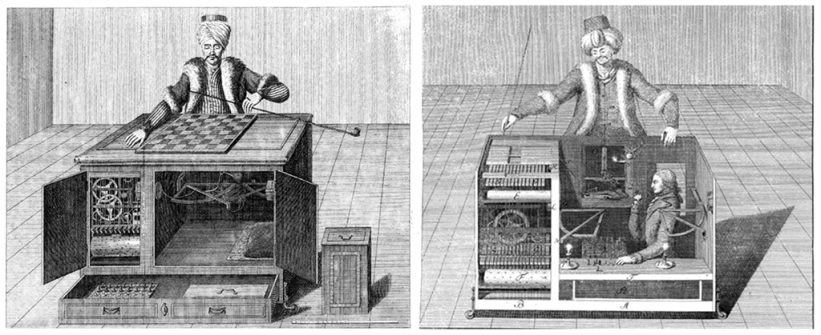

The Turk
