Difference between revisions of "Bayes"

Revision as of 12:28, 11 October 2020


- AI Solver

- Capabilities

- Bayesian Deep Learning (BDL)

- Bayesian Linear Regression

- How to Develop a Naive Bayes Classifier from Scratch in Python | Jason Brownlee - Machine Learning Mastery

- A Beginner's Guide to Bayes' Theorem, Naive Bayes Classifiers and Bayesian Networks | Chris Nicholson - A.I. Wiki pathmind

- Feature Engineering and Selection: A Practical Approach for Predictive Models - 12.1 Naive Bayes Models | Max Kuhn and Kjell Johnson

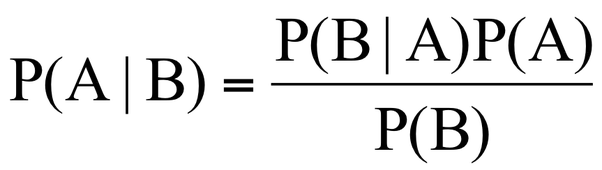

This algorithm is based on Bayes’ Theorem in probability. Naive Bayes adds a simplifying assumption: the features are treated as independent of each other given the class. This is an assumption of the model, not a requirement of Bayes’ Theorem itself, and the classifier often works acceptably even when it holds only approximately. For example, to predict a flower type from its petal length and width, Naive Bayes treats the two features as independent given the flower type, even though in practice they tend to be correlated. - 10 Machine Learning Algorithms You need to Know | Sidath Asir @ Medium
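For reference, the rule can be written out as below. The notation is assumed here for illustration and does not come from the cited article: $y$ is the class and $x_1, \dots, x_n$ are the feature values. Bayes' Theorem by itself gives

$$P(y \mid x_1, \dots, x_n) = \frac{P(y)\, P(x_1, \dots, x_n \mid y)}{P(x_1, \dots, x_n)}$$

The "naive" step is the added assumption that the features are independent given the class:

$$P(x_1, \dots, x_n \mid y) = \prod_{i=1}^{n} P(x_i \mid y)$$

Because the denominator is the same for every class, the predicted class is

$$\hat{y} = \arg\max_{y} \; P(y) \prod_{i=1}^{n} P(x_i \mid y)$$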

A Naive Bayes classifier assumes that, given the class, the presence of a particular feature is unrelated to the presence of any other feature. For example, a fruit may be considered to be an apple if it is red, round, and about 3 inches in diameter. Even if these features depend on each other or on the existence of other features, the classifier treats each of them as contributing independently to the probability that the fruit is an apple, which is why it is known as ‘Naive’.
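The fruit example can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the toy data, the function names `train_naive_bayes` and `predict`, and the Laplace smoothing parameter `alpha` are all assumptions made for this example.

```python
import math
from collections import Counter, defaultdict

def train_naive_bayes(rows, labels, alpha=1.0):
    """Count classes and per-class feature values (alpha = Laplace smoothing)."""
    class_counts = Counter(labels)
    # feature_counts[label][feature_index][value] = occurrences in training data
    feature_counts = defaultdict(lambda: defaultdict(Counter))
    # feature_values[feature_index] = distinct values seen for that feature
    feature_values = defaultdict(set)
    for row, label in zip(rows, labels):
        for i, value in enumerate(row):
            feature_counts[label][i][value] += 1
            feature_values[i].add(value)
    return class_counts, feature_counts, feature_values, alpha

def predict(model, row):
    """Return the class with the highest log P(class) + sum of log P(feature | class)."""
    class_counts, feature_counts, feature_values, alpha = model
    total = sum(class_counts.values())
    best_label, best_score = None, -math.inf
    for label, count in class_counts.items():
        score = math.log(count / total)  # log prior
        for i, value in enumerate(row):
            # Each feature contributes independently: this is the "naive" step.
            numerator = feature_counts[label][i][value] + alpha
            denominator = count + alpha * len(feature_values[i])
            score += math.log(numerator / denominator)
        if score > best_score:
            best_label, best_score = label, score
    return best_label

# Hypothetical toy data: (color, shape, diameter)
rows = [
    ("red", "round", "3in"), ("red", "round", "3in"), ("green", "round", "3in"),
    ("yellow", "long", "7in"), ("yellow", "long", "6in"), ("green", "long", "7in"),
]
labels = ["apple", "apple", "apple", "banana", "banana", "banana"]

model = train_naive_bayes(rows, labels)
print(predict(model, ("red", "round", "3in")))
```

Working in log space avoids multiplying many small probabilities together, and the smoothing term keeps a feature value that was never seen with a class from driving that class's probability to zero.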

Two-Class Bayes Point Machine

This algorithm efficiently approximates the theoretically optimal Bayesian average of linear classifiers (in terms of generalization performance) by choosing one "average" classifier, the Bayes Point. Because the Bayes Point Machine is a Bayesian classification model, it is not prone to overfitting to the training data. - Microsoft
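The idea of replacing an average over many linear classifiers with one "average" classifier can be sketched as follows. This is not Microsoft's implementation. It swaps in a simple stand-in technique: train a perceptron on several random orderings of linearly separable data, then average the normalized weight vectors. The data, function names, and parameters are hypothetical.

```python
import math
import random

def train_perceptron(points, labels, order, max_epochs=1000):
    """Perceptron rule, visiting the points in the given order each epoch."""
    weights = [0.0] * len(points[0])
    for _ in range(max_epochs):
        mistakes = 0
        for i in order:
            activation = sum(w * x for w, x in zip(weights, points[i]))
            if labels[i] * activation <= 0:  # misclassified or on the boundary
                weights = [w + labels[i] * x for w, x in zip(weights, points[i])]
                mistakes += 1
        if mistakes == 0:
            return weights  # consistent with every training point
    raise RuntimeError("data was not separated within max_epochs")

def approximate_bayes_point(points, labels, n_samples=50, seed=0):
    """Average unit-length weight vectors from perceptrons trained on shuffled data."""
    rng = random.Random(seed)
    total = [0.0] * len(points[0])
    for _ in range(n_samples):
        order = list(range(len(points)))
        rng.shuffle(order)
        w = train_perceptron(points, labels, order)
        norm = math.sqrt(sum(v * v for v in w))
        total = [t + v / norm for t, v in zip(total, w)]
    return [t / n_samples for t in total]

def classify(weights, point):
    return 1 if sum(w * x for w, x in zip(weights, point)) > 0 else -1

# Hypothetical separable data; the trailing 1.0 acts as a bias feature.
points = [(2.0, 1.0, 1.0), (3.0, 2.5, 1.0), (2.5, 3.0, 1.0),
          (-1.0, -2.0, 1.0), (-2.0, -1.5, 1.0), (-3.0, -0.5, 1.0)]
labels = [1, 1, 1, -1, -1, -1]

bayes_point = approximate_bayes_point(points, labels)
print([classify(bayes_point, p) for p in points])
```

Each sampled perceptron classifies every training point correctly, and the set of such weight vectors is convex, so their average also classifies the training data correctly while sitting closer to the middle of that set than any single sample is likely to.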

Bayesian Parameter Estimation