Difference between revisions of "Gradient Descent Optimization & Challenges"

| Line 13: | Line 13: | ||

* [[Objective vs. Cost vs. Loss vs. Error Function]] | * [[Objective vs. Cost vs. Loss vs. Error Function]] | ||

* [[Topology and Weight Evolving Artificial Neural Network (TWEANN)]] | * [[Topology and Weight Evolving Artificial Neural Network (TWEANN)]] | ||

| + | |||

| + | |||

| + | http://cdn-images-1.medium.com/max/800/1*NRCWfdXa7b-ak2nBtmwRvw.png | ||

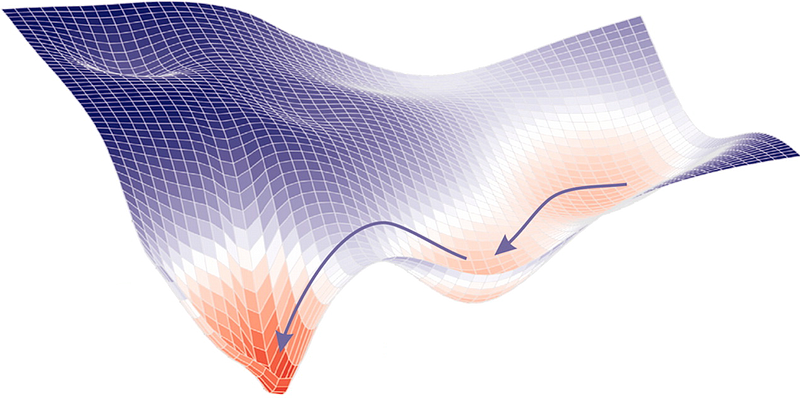

== Gradient Descent - Stochastic (SGD), Batch (BGD) & Mini-Batch == | == Gradient Descent - Stochastic (SGD), Batch (BGD) & Mini-Batch == | ||

Revision as of 22:09, 16 February 2019


- Gradient Boosting Algorithms

- Backpropagation

- Objective vs. Cost vs. Loss vs. Error Function

- Topology and Weight Evolving Artificial Neural Network (TWEANN)

Gradient Descent - Stochastic (SGD), Batch (BGD) & Mini-Batch

Vanishing & Exploding Gradients Problems

Vanishing & Exploding Gradients Challenges with Long Short-Term Memory (LSTM) and Recurrent Neural Networks (RNN)